“Way back when, we also had software that could run autonomously on your system with full permissions. We called it ‘malware.'” — Top comment on r/sysadmin, 2,244 upvotes

On r/sysadmin, the post “OpenClaw is going viral… most people setting it up have no idea what’s inside the image” hit 2,244 upvotes. That comment sits at the exact intersection of humor and truth that makes sysadmins uncomfortable. Because the AI agents you’re deploying in 2026 — OpenClaw, custom builds, internal tools — run code, make network calls, read files, and interact with APIs. Without sandboxing, they’re doing all of that with whatever permissions your host system grants them.

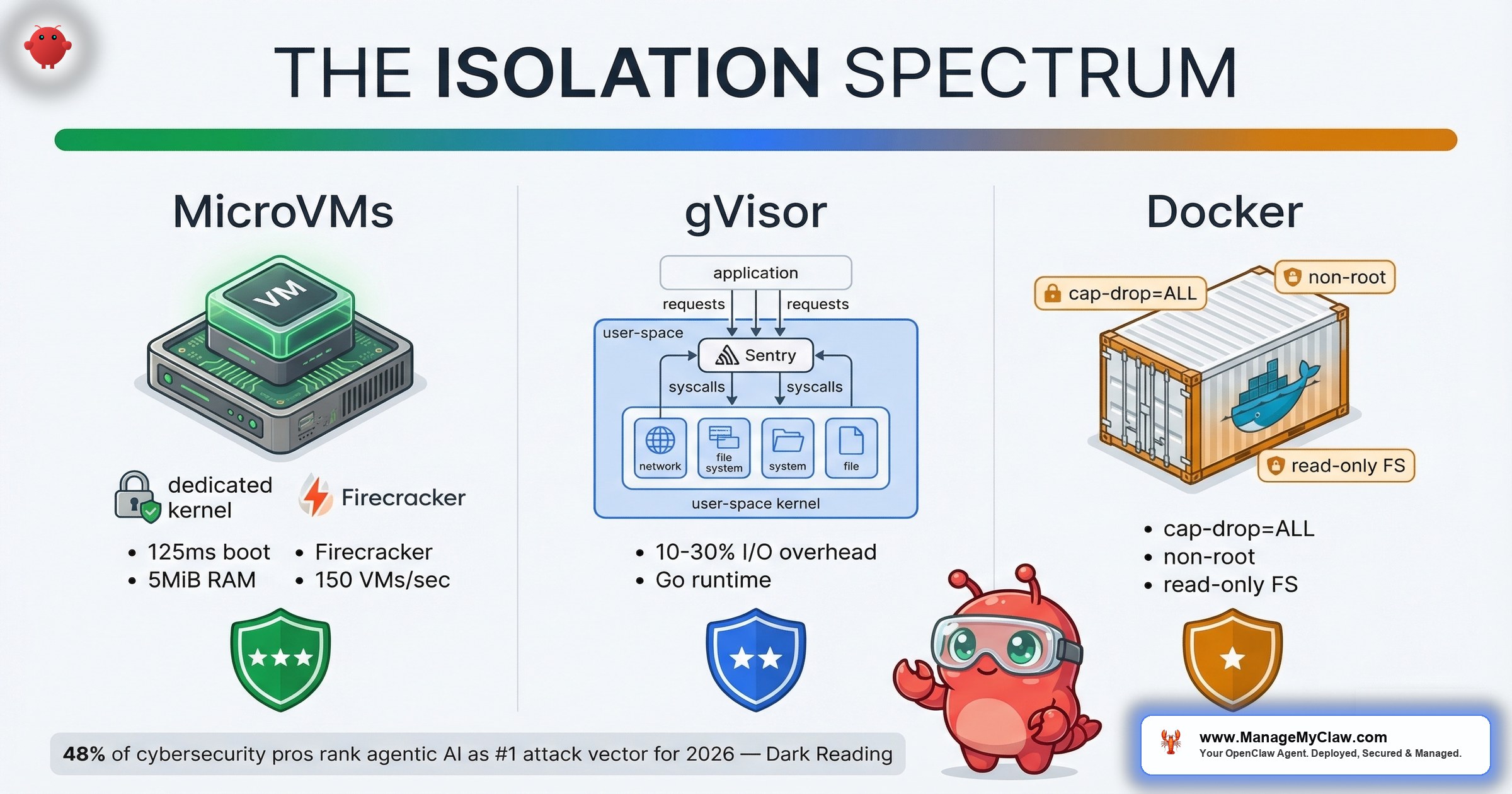

A Dark Reading poll found that 48% of cybersecurity professionals identify agentic AI as the #1 attack vector for 2026. Not phishing. Not ransomware. AI agents.

The question isn’t whether to sandbox your agents. It’s which sandboxing approach matches your threat model, your ops team’s capacity, and your budget. There are 3 established options in 2026 (MicroVMs, gVisor, and hardened Docker containers), plus WebAssembly (WASM) emerging as a fourth for specific workloads. Each sits at a different point on the isolation-vs-complexity spectrum. This post breaks down what each one actually does, where the trade-offs fall, and how the industry is moving after GTC 2026 and RSAC 2026 put agent governance at center stage.

The Isolation Spectrum: 3 Layers, 3 Philosophies

Think of agent sandboxing as a building’s security system with 3 tiers. The top floor is the vault — dedicated walls, its own power supply, separate air handling. The middle floor has reinforced doors and security cameras but shares the building’s HVAC. The ground floor uses locked offices with controlled key access. All 3 protect what’s inside. They just do it at different depths, with different costs, and with different failure modes.

Here’s how the 3 sandboxing approaches map to that analogy:

| Dimension | MicroVMs | gVisor | Hardened Docker |
|---|---|---|---|
| Examples | Firecracker, Kata Containers | Google gVisor (runsc) | Docker + security flags |
| Kernel | Dedicated kernel per workload | User-space kernel intercepts syscalls | Shared host kernel |
| Isolation Level | Strongest (VM-level) | Strong (syscall filtering) | Moderate (namespace/cgroup) |
| Startup Time | ~125ms (near-container speed) | Slightly slower than native | Fastest |
| Overhead | <5 MiB per VM | 10–30% I/O overhead | Lowest |
| Ops Complexity | Highest (requires KVM) | Moderate (custom OCI runtime) | Lowest (standard Docker) |
| Best For | Multi-tenant, regulated, high-risk | Cloud-native, moderate risk | SMBs, single-tenant, fast deploy |
| GPU/AI Workloads | Limited (passthrough needed) | Supported via platform mode | Full native support |

The table tells you what. The next 3 sections tell you why each approach works the way it does and where it breaks down.

MicroVMs — Dedicated Kernels per Agent

MicroVMs give every workload its own lightweight virtual machine with a dedicated Linux kernel — completely separated from the host. Firecracker, built by AWS for Lambda and Fargate, boots a VM in roughly 125 milliseconds (compared to 1–2 seconds for traditional VMs, and ~50ms for containers). Each microVM consumes less than 5 MiB of memory overhead, and a single host can launch up to 150 VMs per second. Those numbers matter for AI agent workloads: when you need to spin up isolated sandboxes on demand — one per agent invocation — Firecracker’s density and speed make it feasible at scale.
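As a rough illustration of those density numbers, the sketch below estimates how many microVMs fit in a host's RAM and how long a burst of launches would take. The 5 MiB overhead and 150 launches per second are the upper bounds quoted above. The guest memory size, the host reserve, and the function names are assumptions made for this example, not Firecracker settings.

```python
def microvm_capacity(host_ram_mib: int, guest_ram_mib: int,
                     vmm_overhead_mib: int = 5, host_reserve_mib: int = 0) -> int:
    """Estimate how many microVMs fit in RAM.

    Each VM is charged its guest memory plus the per-VM overhead.
    host_reserve_mib is memory held back for the host OS (an assumption).
    """
    if guest_ram_mib <= 0:
        raise ValueError("guest_ram_mib must be positive")
    usable = max(host_ram_mib - host_reserve_mib, 0)
    return usable // (guest_ram_mib + vmm_overhead_mib)


def burst_launch_seconds(vm_count: int, launches_per_second: float = 150.0) -> float:
    """Time to launch vm_count microVMs at a steady launch rate."""
    return vm_count / launches_per_second


if __name__ == "__main__":
    # A 4 GiB host with 512 MiB guests (hypothetical sizing).
    print(microvm_capacity(host_ram_mib=4096, guest_ram_mib=512))
    print(burst_launch_seconds(300))
```

Under these assumptions the per-VM overhead is a rounding error next to guest memory, which is why the limit on small hosts tends to be the guests themselves rather than the VMM.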

Kata Containers takes the MicroVM concept further by orchestrating multiple VMMs — Firecracker, Cloud Hypervisor, and QEMU — through standard container APIs. This flexibility means you can switch the underlying hypervisor based on infrastructure needs without changing your deployment pipeline. Kata is ideal for multi-tenant workloads, SaaS platforms, code execution environments, and AI sandboxes where you need MicroVM isolation but want to consume it through familiar container tooling.

The key architectural property across both: your agent’s processes never share a kernel with the host or with other agents. A kernel vulnerability in the agent’s VM stays inside that VM. It can’t reach the host. It can’t reach other workloads.

This is the strongest isolation available short of air-gapping. It’s also the approach that NVIDIA’s OpenShell — announced at GTC on March 16, 2026 — builds on. OpenShell provides OS-level sandboxing that goes deeper than container-level isolation, with enterprise partnerships spanning Adobe, Atlassian, Cisco, CrowdStrike, Salesforce, SAP, ServiceNow, and Red Hat. When that many enterprise vendors co-sign an agent security stack, it tells you something about how seriously they’re taking the isolation problem.

The trade-off: each MicroVM carries its own kernel in memory. On a 4 GB VPS running a single agent, that overhead matters. You need KVM or a hypervisor layer, which rules out most shared hosting environments and some cloud instances that don’t expose nested virtualization. If you’re running 1 agent on a dedicated server, MicroVMs are architecturally elegant. If you’re running 12 agents on a $20/month VPS, they’re impractical.
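Before planning around MicroVMs, it can help to check whether the host exposes KVM at all. The sketch below is one minimal way to do that on Linux, assuming the standard `/dev/kvm` device node. The function name is hypothetical, and a passing check only shows the device is reachable, not that a given VMM is installed or configured.

```python
import os


def kvm_available(device: str = "/dev/kvm") -> bool:
    """Return True when the KVM device node exists and this process
    has read/write access to it."""
    return os.path.exists(device) and os.access(device, os.R_OK | os.W_OK)


if __name__ == "__main__":
    if kvm_available():
        print("KVM is reachable: MicroVMs are an option on this host")
    else:
        print("No usable /dev/kvm: consider gVisor or hardened Docker")
```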

When to pick this: Multi-tenant environments where different clients’ agents share infrastructure. Regulated industries (healthcare, finance) where you need to demonstrate kernel-level isolation to auditors. Deployments that handle PII and can’t tolerate any possibility of cross-workload contamination.

gVisor — A Kernel Inside Your Kernel

gVisor takes a different approach. Instead of giving each workload its own full VM, it inserts a user-space kernel, called Sentry, between the container and the host kernel. Sentry is written in Go and reimplements a large subset of the Linux syscall interface in user space. When an application makes a syscall, gVisor’s ptrace or KVM platform intercepts it and redirects it to Sentry, which services the call itself instead of passing it straight through to the host kernel; Sentry reaches the host only through a much narrower, filtered set of syscalls of its own. An attacker who arrives with a Linux kernel exploit is therefore aiming it at Sentry, a Go application running in user space, not at the actual host kernel. That’s a fundamentally different attack surface.

Google uses gVisor internally. That’s not marketing — it’s the runtime behind Google Cloud’s container-optimized infrastructure. It’s lighter than MicroVMs because there’s no dedicated kernel per workload, but heavier than native containers because every syscall passes through an additional layer of interception and validation.

If MicroVMs are a vault with its own walls, gVisor is a security checkpoint at every door. Nothing gets through without showing ID, but you’re still inside the same building.

The practical consideration for AI agent deployments: gVisor’s syscall interception adds 10–30% overhead on I/O-heavy workloads, while compute-heavy workloads see minimal impact. Agents that read and write files constantly, make high-frequency network calls, or run I/O-intensive tools inside their sandbox will feel that penalty. For agents that primarily make API calls and process text, which describes most OpenClaw workflows, the performance impact is negligible.
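To make that trade-off concrete, here is a back-of-the-envelope model. It assumes the 10–30% figure applies only to the time an agent spends in sandboxed I/O and that everything else (computation, waiting on a remote API) is unaffected. That split is a simplification, and the function names are illustrative only.

```python
from typing import Tuple


def sandboxed_runtime(io_seconds: float, other_seconds: float,
                      io_overhead: float) -> float:
    """Runtime when only the I/O portion is slowed by io_overhead (0.10 = 10%)."""
    if io_overhead < 0:
        raise ValueError("io_overhead must be non-negative")
    return other_seconds + io_seconds * (1.0 + io_overhead)


def slowdown_range(io_seconds: float, other_seconds: float,
                   low: float = 0.10, high: float = 0.30) -> Tuple[float, float]:
    """Best-case and worst-case slowdown factors relative to the unsandboxed run."""
    baseline = io_seconds + other_seconds
    if baseline <= 0:
        raise ValueError("total runtime must be positive")
    return (sandboxed_runtime(io_seconds, other_seconds, low) / baseline,
            sandboxed_runtime(io_seconds, other_seconds, high) / baseline)


if __name__ == "__main__":
    print(slowdown_range(io_seconds=1.0, other_seconds=9.0))  # mostly non-I/O
    print(slowdown_range(io_seconds=8.0, other_seconds=2.0))  # I/O-heavy
```

Under this model, an agent that spends 1 of every 10 seconds in sandboxed I/O slows down by roughly 1 to 3 percent, while one that spends 8 of every 10 seconds there slows down by roughly 8 to 24 percent.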

When to pick this: Cloud-native deployments on platforms that support custom OCI runtimes. Teams that want stronger isolation than Docker provides but can’t justify the infrastructure requirements of MicroVMs. Environments where you’re running untrusted or community-built agent skills alongside production workloads.
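On a Docker host, switching a container to gVisor usually comes down to selecting a different runtime. The sketch below only builds the argument list; it assumes `runsc` is already installed and registered with the Docker daemon under the runtime name `runsc`. The image name and the helper function are hypothetical.

```python
from typing import List, Sequence


def gvisor_run_args(image: str, command: Sequence[str] = ()) -> List[str]:
    """Build a `docker run` argument list that selects the gVisor runtime.

    Assumes a runtime named "runsc" is registered with the Docker daemon.
    """
    return ["docker", "run", "--rm", "--runtime=runsc", image, *command]


if __name__ == "__main__":
    # "example/agent:latest" is a placeholder image name.
    print(" ".join(gvisor_run_args("example/agent:latest", ["python", "agent.py"])))
```

The rest of the deployment (image, command, volumes) stays the same, which is the sense in which gVisor slots in as a custom OCI runtime.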

Hardened Docker — The Practical Default

Standard Docker containers share the host kernel. That’s the trade-off you accept for the fastest startup times, the lowest overhead, and the widest ecosystem of tooling. But “standard Docker” and “hardened Docker” are very different security postures. The 5 security flags that OpenClaw requires — and that we covered in the Docker sandboxing guide — transform a container from a packaging format into an actual isolation boundary:

- Non-root user — agent processes can’t escalate to root inside the container

- Read-only root filesystem — malicious skills can’t write persistent backdoors

- --cap-drop=ALL — drops every Linux capability, removing the ability to bind privileged ports, modify networking, or load kernel modules

- --security-opt=no-new-privileges — prevents processes from gaining additional privileges via setuid or setgid binaries

- No Docker socket mount — prevents the agent from controlling Docker itself, which would let it escape the container entirely

With all 5 flags applied, a compromised agent inside the container can’t modify the root filesystem, can’t escalate privileges, can’t talk to Docker, and can’t use Linux capabilities to break out. That’s not VM-level isolation: the container still shares the host kernel. But it’s vastly stronger than the default Docker configuration that most people deploy with, which typically runs the process as root on a writable filesystem with Docker’s default capability set, and adds little beyond namespace separation.
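The five settings above can be sketched as a small helper that assembles a `docker run` command line and refuses configurations that would undo the hardening. This is an illustration under assumptions, not OpenClaw's own tooling: the helper name, the default UID:GID, and the image name are hypothetical, while the Docker flags themselves are standard CLI options.

```python
from typing import List, Sequence

DOCKER_SOCKET = "/var/run/docker.sock"


def hardened_run_args(image: str, command: Sequence[str] = (),
                      user: str = "1000:1000",
                      volumes: Sequence[str] = ()) -> List[str]:
    """Build a `docker run` argument list with the five hardening settings."""
    # 1. Non-root user: reject root by name or by UID 0.
    if user.split(":")[0] in ("", "0", "root"):
        raise ValueError("container must run as a non-root user")
    # 5. No Docker socket mount: reject any volume that references it.
    for volume in volumes:
        if DOCKER_SOCKET in volume:
            raise ValueError("refusing to mount the Docker socket")

    args = [
        "docker", "run", "--rm",
        "--user", user,                       # 1. non-root user
        "--read-only",                        # 2. read-only root filesystem
        "--cap-drop=ALL",                     # 3. drop every Linux capability
        "--security-opt=no-new-privileges",   # 4. block setuid/setgid escalation
    ]
    for volume in volumes:
        args += ["--volume", volume]
    args.append(image)
    args.extend(command)
    return args


if __name__ == "__main__":
    # "example/agent:latest" is a placeholder image name.
    print(" ".join(hardened_run_args("example/agent:latest", ["python", "agent.py"])))
```

Treating the socket mount and root user as hard errors, instead of warnings, keeps a convenience shortcut from silently removing the isolation boundary.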

When to pick this: Single-tenant deployments where you control the agent and the skills it runs. Small and mid-size teams that need production-grade security without a dedicated infrastructure team. Environments where you’re pairing container hardening with network-layer controls (UFW, DOCKER-USER iptables chain) to create defense in depth.

The Industry Is Moving Fast — Here’s Where

March 2026 was a turning point for agent sandboxing and governance. Three moves in particular reshaped the landscape:

NVIDIA OpenShell + TrendAI Vision One

Announced on March 16, 2026, TrendAI’s Vision One integration with NVIDIA OpenShell lets enterprise administrators define AI governance and compliance policies centrally. TrendAI brings governance, risk visibility, and runtime enforcement directly into the agent lifecycle. That means your security team can set policies — which syscalls agents can make, which network endpoints they can reach, which data categories they can access — and those policies are enforced at the OS level, not inside the agent’s own process. The agent doesn’t get a vote.

Microsoft Agent Governance Toolkit

Microsoft open-sourced its Agent Governance Toolkit on GitHub (microsoft/agent-governance-toolkit). It covers runtime policy enforcement, zero-trust identity, execution sandboxing, and site reliability engineering (SRE). The toolkit addresses all 10 categories in the OWASP Agentic Top 10. Microsoft Agent 365, the commercial product built on the same framework, is generally available May 1, 2026, at $15/user/month. That pricing tells you Microsoft expects agent governance to be as ubiquitous as email security within 12 months.

RSAC 2026 Innovation Sandbox

RSAC 2026’s Innovation Sandbox, the most-watched startup competition in cybersecurity, featured Geordie AI, focused on enterprise AI agent security governance systems. When RSAC picks agent security for its flagship competition, the window between “emerging concern” and “compliance requirement” is shorter than you think.

“I’ve been building an AI agent governance runtime in Rust. Yesterday NVIDIA announced the same thesis at GTC.”

— r/LocalLLaMA developer, March 2026

That developer wasn’t surprised by the announcement. They were surprised by the timing. The problem was obvious to anyone running agents in production. The validation from NVIDIA, Microsoft, and RSAC simultaneously just made it undeniable.

Governance by Design, Not by Afterthought

JetBrains published “Why Your AI Governance Is Holding You Back” in March 2026. The core argument: governance must be embedded by design — woven into how agents are built, orchestrated, deployed, and monitored. Bolting governance on after deployment is like installing a smoke detector after the kitchen is already on fire. It might alert you to the next one. It won’t help with the one you’re standing in.

Start from the principle of least agency: every agent should operate with the minimum autonomy, the minimum tool access, and the minimum credential scope required to complete its task. Not “what’s convenient.” Not “what’s easiest to configure.” The minimum. The principle applies to every sandboxing layer: the MicroVM’s network rules, gVisor’s syscall allowlist, Docker’s capability drops. Least agency isn’t a suggestion. It’s the design philosophy that makes sandboxing work.

This is where the sandboxing spectrum connects to the governance conversation. Sandboxing is the enforcement layer. Governance is the policy layer. If your agent doesn’t need outbound HTTPS, block it. If it doesn’t need filesystem writes, make the root read-only.
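One way to picture the split between policy and enforcement is a deny-by-default policy object that is translated into sandbox settings. The sketch below is a minimal illustration of that idea. The class, its fields, and the action strings are assumptions made for this example and do not correspond to any specific governance product.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class AgentPolicy:
    """Policy layer: what the agent needs. Everything defaults to denied."""
    allowed_hosts: FrozenSet[str] = frozenset()
    needs_fs_writes: bool = False

    def permits_egress(self, host: str) -> bool:
        # Only hosts that were explicitly listed are reachable.
        return host in self.allowed_hosts


def enforcement_actions(policy: AgentPolicy) -> List[str]:
    """Enforcement layer: turn the policy into sandbox settings to apply."""
    actions = []
    if policy.allowed_hosts:
        actions.append("allow egress only to: " + ", ".join(sorted(policy.allowed_hosts)))
    else:
        actions.append("block all outbound traffic")
    if not policy.needs_fs_writes:
        actions.append("mount root filesystem read-only")
    return actions


if __name__ == "__main__":
    for action in enforcement_actions(AgentPolicy()):
        print(action)
```

Because every field defaults to the most restrictive value, an agent gains a capability only when someone writes it into the policy.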

Sandboxing without governance is a padded room. Governance without sandboxing is a policy manual that nobody enforces. Governance without least agency is giving an agent a keyring when it only needs one key. You need all three.

How to Pick Your Sandboxing Layer

The decision isn’t “which is best” — it’s “which matches your constraints.” Here’s the decision tree:

Start with hardened Docker if you’re a small team running 1–3 agents on a single server. Apply all 5 security flags. Add UFW rules to restrict outbound traffic. Use the DOCKER-USER iptables chain to control container networking. Layer Tailscale or WireGuard for encrypted access. This covers 90% of single-tenant deployments and requires zero infrastructure beyond a standard VPS.

Move to gVisor when you’re running untrusted skills, community plugins, or multiple agents from different sources on shared infrastructure. The user-space kernel catches syscall-level attacks that container namespace isolation misses. gVisor is particularly well-suited for compute-heavy agents with limited I/O — you get strong isolation without full VM overhead, and the 10–30% I/O penalty won’t affect workloads that spend most of their time on computation. If your cloud provider supports custom OCI runtimes (GCP does natively, AWS and Azure require configuration), gVisor slots in with minimal changes to your deployment pipeline.

Deploy MicroVMs (Firecracker or Kata) when you’re in a multi-tenant environment, handling regulated data, or when your threat model includes kernel-level exploits. For production AI agents executing untrusted code, the hardware virtualization boundary keeps a guest-kernel exploit inside the VM, so an attacker would also need a hypervisor escape to reach the host. That is a category of defense that no amount of container hardening can replicate. This requires bare metal or VMs with nested virtualization support. The operational overhead is real (you’re managing a fleet of VMs, not containers), but the isolation guarantees are the strongest available outside air-gapped infrastructure. With Kata, you get that isolation through standard container APIs, which significantly lowers the migration cost.

Consider WASM only for narrow workloads. WASM runtimes provide lightweight, capability-based sandboxing with near-native performance and a memory footprint smaller than any container. For agents running deterministic, compute-bound tasks (data transformation, code evaluation, plugin execution), WASM offers isolation without the kernel overhead of MicroVMs or the syscall interception tax of gVisor. It is not yet general-purpose: WASM runtimes lack full POSIX compatibility, filesystem abstractions are still maturing, and networking support varies. Watch this space, but don’t bet your production stack on it until the ecosystem closes those gaps.
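The decision tree above can be sketched as a short function that checks the strictest requirements first. The parameter names and return labels are illustrative assumptions. WASM is left out because the text treats it as not yet general-purpose, and real decisions will also weigh budget and ops capacity.

```python
def pick_sandbox(*, multi_tenant: bool = False,
                 regulated_data: bool = False,
                 kernel_exploits_in_scope: bool = False,
                 untrusted_skills: bool = False,
                 mixed_sources_on_shared_infra: bool = False) -> str:
    """Map deployment constraints to a sandboxing layer."""
    # Strongest requirement first: anything that needs a dedicated kernel.
    if multi_tenant or regulated_data or kernel_exploits_in_scope:
        return "microvm"
    # Untrusted or mixed-origin code on shared infrastructure.
    if untrusted_skills or mixed_sources_on_shared_infra:
        return "gvisor"
    # Small, single-tenant deployments where you control the agent.
    return "hardened-docker"


if __name__ == "__main__":
    print(pick_sandbox())
    print(pick_sandbox(untrusted_skills=True))
    print(pick_sandbox(untrusted_skills=True, regulated_data=True))
```

Checking the MicroVM conditions first means a deployment that matches several branches lands on the stronger isolation layer.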

And regardless of which layer you pick: pair it with network-level controls. Sandboxing limits what an agent can do on the host. Network rules limit where it can reach. Both layers together are what make the sandbox meaningful. See the OpenClaw Security guide for the full stack, including firewall configuration, credential isolation, and monitoring.

Where OpenClaw Sits on the Spectrum

OpenClaw’s default deployment uses hardened Docker containers — layer 3 of the sandboxing spectrum. The 5 required security flags are a solid baseline. But they’re a baseline, not a ceiling. NVIDIA’s OpenShell adds OS-level sandboxing (layers 1–2) on top of the container layer, which is why the NemoClaw stack pairs OpenShell with OpenClaw rather than replacing Docker entirely.

The defense-in-depth pattern for an OpenClaw deployment in 2026 looks like this: hardened Docker at the container layer, network controls (UFW + DOCKER-USER chain + Tailscale) at the perimeter, and — if you’re running OpenShell — OS-level sandboxing underneath. Each layer catches what the others miss. A container escape hits the network firewall. A network bypass hits the OS sandbox. No single layer is sufficient. All 3 together create an isolation boundary that’s genuinely hard to break out of.

ManageMyClaw applies all 5 Docker security flags, UFW firewall rules, and the DOCKER-USER iptables chain to every deployment, starting at the $499 Starter tier. That’s layer 3 hardened, with network controls layered on top. For the full security architecture, including the 14-point audit checklist, see the security pillar.

Frequently Asked Questions

What are MicroVMs and how do they differ from regular containers for AI agents?

MicroVMs (like Firecracker and Kata Containers) give each workload its own lightweight virtual machine with a dedicated minimal kernel. Unlike Docker containers, which share the host kernel via namespaces and cgroups, MicroVMs provide complete kernel-level isolation. A kernel vulnerability exploited inside a MicroVM can’t reach the host or other workloads. They achieve near-container startup times (roughly 125ms for Firecracker) but require KVM or hypervisor support, which adds infrastructure requirements.

How does gVisor protect AI agents differently than Docker?

gVisor inserts a user-space kernel (called Sentry) between the container and the host kernel. Every system call the agent makes is intercepted and validated by Sentry before it can reach the real kernel. This means an attacker who finds a kernel exploit would be exploiting gVisor’s process, not the host kernel itself. Google uses gVisor internally for its container infrastructure. It’s lighter than MicroVMs because there’s no dedicated kernel per workload, but adds some I/O latency due to the syscall interception layer.

What are the 5 Docker security flags every AI agent deployment needs?

The 5 flags are: non-root user (prevents privilege escalation inside the container), read-only root filesystem (blocks persistent backdoors), --cap-drop=ALL (removes all Linux capabilities), --security-opt=no-new-privileges (prevents gaining privileges via setuid/setgid), and no Docker socket mount (prevents the agent from controlling Docker and escaping the container). Together, these transform Docker from a packaging tool into an actual isolation boundary. See the Docker sandboxing guide for implementation details.

What is NVIDIA OpenShell and how does it relate to agent sandboxing?

NVIDIA OpenShell is an open-source runtime for autonomous AI agents, announced at GTC on March 16, 2026. It provides OS-level sandboxing that goes deeper than container-level isolation — operating at layers 1–2 of the sandboxing spectrum (MicroVM/gVisor territory) rather than the container layer alone. Enterprise partners include Adobe, Atlassian, Cisco, CrowdStrike, Salesforce, SAP, ServiceNow, and Red Hat. TrendAI’s Vision One integration adds centralized governance policies and runtime enforcement directly into the agent lifecycle.

Is hardened Docker sufficient for production AI agent deployments?

For single-tenant deployments where you control the agent and the skills it runs, hardened Docker with all 5 security flags plus network controls (UFW, DOCKER-USER iptables chain, Tailscale) provides production-grade security. It’s the most practical option for small and mid-size teams. However, if you’re running untrusted third-party skills, handling regulated data, or operating multi-tenant infrastructure, you should evaluate gVisor or MicroVMs for stronger isolation guarantees.

What is the OWASP Agentic Top 10 and why does it matter for sandboxing?

The OWASP Agentic Top 10 is the industry standard list of the most critical security risks for autonomous AI agents. A key concept from OWASP is the principle of least agency: every agent should operate with the minimum autonomy, the minimum tool access, and the minimum credential scope required to complete its task. Microsoft’s open-source Agent Governance Toolkit covers all 10 categories, including runtime policy enforcement, zero-trust identity, and execution sandboxing. Sandboxing addresses the execution isolation risk, but comprehensive agent security requires governance, identity, monitoring, and network controls alongside isolation.

Is WebAssembly (WASM) a viable sandboxing option for AI agents?

WASM is emerging as a fourth sandboxing option alongside MicroVMs, gVisor, and hardened Docker. It provides lightweight, capability-based isolation with near-native performance and a memory footprint smaller than any container runtime. For agents running deterministic, compute-bound tasks — data transformation, code evaluation, plugin execution — WASM offers compelling isolation characteristics. However, it’s not yet a general-purpose replacement: WASM runtimes lack full POSIX compatibility, filesystem support is still maturing, and networking capabilities vary by runtime. Watch this space, but don’t bet your production stack on it until the ecosystem closes those gaps.

Can I combine multiple sandboxing layers?

Yes, and you should. Defense in depth means layering isolation boundaries so that each layer catches what the others miss. A production deployment might use hardened Docker at the container level, gVisor or OpenShell for syscall-level/OS-level sandboxing underneath, and network controls (firewall rules, egress filtering, encrypted access via Tailscale or WireGuard) at the perimeter. NVIDIA’s NemoClaw stack demonstrates this pattern: OpenShell provides OS-level sandboxing beneath the container layer, with policy enforcement and audit trails on top.