103 upvotes, 91 comments, one signal: “The reason small businesses are seeing such a massive advantage isn’t the LLM — it’s the orchestration.”

On r/AI_Agents, a thread titled “What is your full AI Agent stack in 2026?” (103 upvotes, 91 comments) produced one of the clearest signals in the AI subreddit ecosystem this month. The top comment, with 14 upvotes: “The reason small businesses are seeing such a massive advantage isn’t just because they have access to the same brains (LLMs) as big companies, but because they can move faster on the Orchestration.”

Not the model. Not the prompt. The orchestration layer — the infrastructure between the LLM and the business process it’s supposed to run.

That comment landed in a thread where 91 people described what they’re actually running in production. Not what they plan to build. Not what worked in a demo. What survived contact with real users, real API failures, and real edge cases over months of daily use. Across that thread and 3 others with a combined 337 upvotes and 327 comments, a production stack pattern emerged. It’s not one tool. It’s 6 layers, each handling a different job, each replaceable independently.

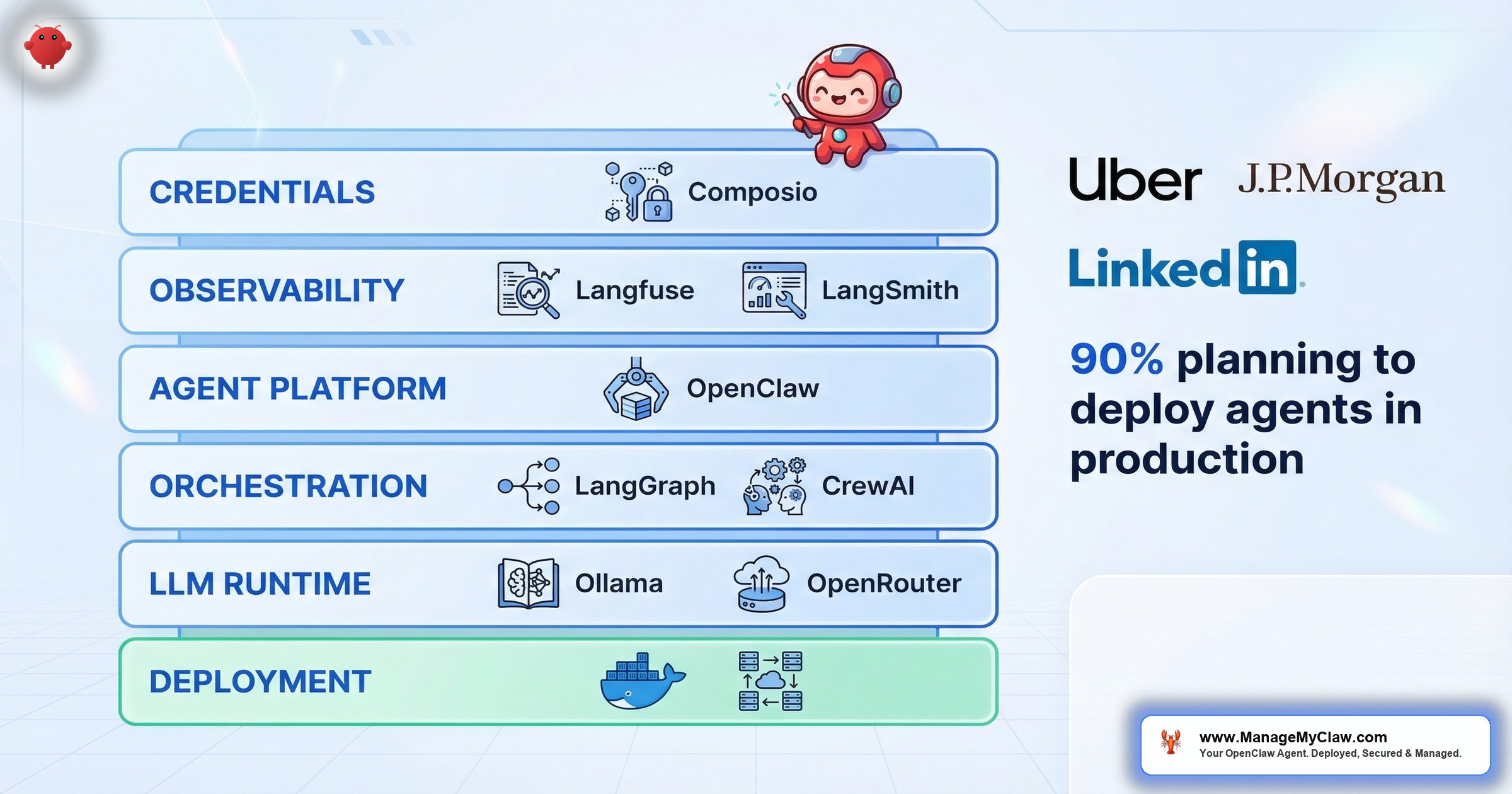

The pattern isn’t just a Reddit phenomenon. Paolo Perrone’s “The AI Agent Stack in 2026: Frameworks, Runtimes, and Production Tools” (Data Science Collective, March 2026) and Tensorlake’s companion analysis independently mapped the same layered architecture. And the adoption numbers confirm it: 90% of respondents in non-tech companies have either deployed AI agents in production or are actively planning to. This isn’t an engineering curiosity anymore. It’s infrastructure.

This post maps that stack layer by layer — what each layer does, which tools the community is using at each one, and the uncomfortable insight that keeps surfacing in every thread.

The Framework Landscape Has Settled

Twelve months ago, the orchestration layer was a free-for-all. Dozens of frameworks, weekly launches, no consensus. In 2026, three frameworks dominate AI agent orchestration: LangGraph, CrewAI, and AutoGen.

LangGraph v1.0 (October 2025) is in production at Uber, JP Morgan, LinkedIn, and Klarna. When financial institutions with compliance requirements and ride-sharing platforms with millions of daily transactions both choose the same orchestration framework, that’s not a trend. That’s a signal.

LangGraph solidified its position as the graph-based orchestration leader with its v1.0 release in October 2025. That wasn’t a minor version bump — it was the framework declaring itself production-stable.

CrewAI carved out its niche in multi-agent collaboration with role-based agents. Where LangGraph gives you fine-grained control over individual agent graphs, CrewAI lets you define a crew of agents with specific roles, goals, and backstories and coordinate them on a shared task. It’s a different mental model — less graph theory, more team management.

AutoGen, Microsoft’s multi-agent framework, occupies the third position. Its strength is conversational agent patterns — agents that debate, review each other’s work, and reach consensus before acting. For teams already embedded in the Microsoft ecosystem, AutoGen integrates naturally.

But here’s the nuance the ZenML analysis surfaced: most real-world systems rely on one primary orchestration layer rather than multiple overlapping frameworks. LangChain and LangGraph are typically used together as a single orchestration layer, not stacked on top of CrewAI. Pick one. Master it. Move on to the layers that actually determine whether your stack survives.

And where does n8n fit? It’s complementary, not competing. LangGraph handles sophisticated orchestration with fine-grained control for developers. n8n handles rapid deployment with visual development for non-developers. Increasingly, teams use both — n8n for business process automation, LangGraph for the agent logic underneath.

The 6-Layer Production Stack

Think of the AI agent stack like a building’s infrastructure. The electrical panel, plumbing, HVAC, security system, fire alarms, and interior design are all separate systems. You can swap one without tearing down the others. But if any one fails, the building becomes unusable. The production agent stack works the same way — 6 layers, each independently upgradeable, all of them necessary.

Here’s what survived 8+ months of production across the threads analyzed:

| Layer | Function | Top Community Pick | Why It Won |

|---|---|---|---|

| 1. Agent Platform | Core agent runtime + skills | OpenClaw | Self-hosted, MCP skills, local data control |

| 2. Orchestration | Multi-step workflow execution | n8n / Python orchestrator | Retry logic, error handling, visual audit trail |

| 3. LLM Runtime | Model hosting + routing | Ollama + model routing | Local inference, cost control, privacy |

| 4. Credentials | OAuth + API key management | Composio | Agent never touches raw credentials |

| 5. Observability | Tracing, evaluation, debugging | Langfuse / LangSmith | You can’t improve what you can’t observe |

| 6. Deployment + Hardening | Docker, firewall, security | Hardened Docker + UFW | Production-grade isolation, no DevOps team needed |

The table shows the stack. The addition of observability as its own layer — not a footnote inside deployment — reflects the 2026 consensus: AI observability is now mission-critical infrastructure. The rest of this post explains why each layer matters, what the community has learned about each one, and where the whole thing breaks down when you skip a layer.

Layer 1: The Agent Platform — Where Intent Becomes Action

The agent platform is the layer your users interact with. It interprets natural language, decides what needs to happen, and calls the right tools. OpenClaw was the most mentioned agent platform across all 4 threads analyzed. One comment in the “full AI Agent stack” thread put it simply: “100% vibing on openclaw. It takes care of it.”

But what the community means by “agent platform” in 2026 is different from what it meant 12 months ago. It’s not just a chatbot with tools. It’s a runtime that manages MCP skills, handles context across sessions, routes tasks to the right model, and maintains state between actions. The platform layer is where you define what your agent can do. Every other layer exists to support, secure, or scale what happens here.

On r/AI_Agents, the thread “I built AI agents for 20+ startups this year. Here is the engineering roadmap” (444 upvotes, 93 comments) drew a line between demos and production deployments. A comment in the thread noted: “A bit more sophisticated than the usual openClaw on Mac mini Post.” That’s the community acknowledging 2 tiers: the personal deployment that runs on a Mac mini and the production stack that requires the 4 layers sitting underneath.

The platform layer is necessary but not sufficient. It’s the front door to the building. Without the building behind it, the door opens onto nothing.

Layer 2: Orchestration — The Layer That Separates Demos from Production

This is where the 14-upvote comment hits hardest. Small businesses don’t win because they have better models. They win because they move faster on orchestration — the layer that connects the agent’s decisions to repeatable, reliable, auditable business processes.

“Running a multi-agent system in production for about 8 months now. Here’s what actually survived vs what we threw out: Survived: Python orchestrator that treats each agent as a subprocess with its own…”

— Top comment (16 upvotes), r/AI_Agents “Running AI agents in production” thread8 months. The systems that survived all share one property: they separated the intelligence layer (agent decides what to do) from the execution layer (orchestrator does it reliably). The intelligence layer can hallucinate, retry differently, or creatively reinterpret your instructions. The execution layer can’t. It runs the same way every time — or it fails loudly enough for you to fix it.

2 orchestration patterns dominate the community:

n8n — most mentioned workflow automation tool across all threads. The top comment on the “What AI tools are actually worth learning in 2026?” thread (117 upvotes, 105 comments) had 29 upvotes: “go for n8n if you want to automate repetitive tasks without writing much code. the framework matters less than people think.. genuinely what will determine if an agent is reliably or not is the infrastructure.” Self-hosted, visual workflow builder, built-in error handling and retry logic. For founders and small teams who don’t write Python daily, n8n is the orchestration layer. See the workflow library for production-ready patterns.

Python orchestrator — the pattern where each agent runs as a subprocess with its own process boundary, managed by a central Python script. This is what the 8-month production deployment used. It’s more flexible than n8n but requires engineering skills to build and maintain. LangGraph v1.0 fits here as the framework layer for developers who want structured agent graphs without writing the orchestration plumbing from scratch. Its production adoption at Uber, JP Morgan, LinkedIn, and Klarna validates the approach at enterprise scale. CrewAI and AutoGen serve the same layer with different paradigms — role-based multi-agent collaboration and conversational agent coordination, respectively.

“The orchestration layer is the transmission in your car. The engine (LLM) generates power. The wheels (APIs) touch the road. But without the transmission translating RPM into torque at the right ratio, you’re revving loudly in the driveway.”

Layer 3: LLM Runtime — Model Routing Is the Optimization Nobody Talks About

Ollama was the most mentioned local LLM runtime across every thread. The appeal is straightforward: run models locally, keep data on your machine, eliminate per-token API costs for routine tasks. But the more sophisticated production stacks aren’t running a single model for everything. They’re routing.

Model routing means sending different tasks to different models based on complexity. A quick classification task goes to a small, fast, local model. A nuanced legal summary goes to Opus 4.6 via API. A code generation request might go to a specialized coding model. The routing layer sits between the agent platform and the model, intercepting each request and deciding which model handles it based on the task type, token budget, or latency requirements.

The trending thread on r/openclaw — “Opus 4.6 vs MiMo-V2-Pro vs GLM-5 — Real-world tests, results are interesting” — captures why this matters. Different models win at different tasks. Running one model for everything means you’re overpaying for simple tasks and underperforming on hard ones. Model routing fixes both problems simultaneously.

“It’s the difference between hiring one generalist at senior pay for every job versus building a team where each person handles what they’re best at.”

The cost impact is measurable. Routing 70% of requests to a local model via Ollama and reserving API calls for the remaining 30% that need frontier-level reasoning can cut your monthly LLM spend by 50–60% while maintaining output quality where it matters.

Layer 4: Credential Management — The Layer Most People Skip

Your agent needs API keys and OAuth tokens to do anything useful. The question is how those credentials are stored, rotated, and isolated from the agent’s runtime. Composio showed up repeatedly as the credential management layer — it handles OAuth flows and API key storage so the agent can trigger actions without holding raw secrets in its context window.

This layer is easy to skip. You paste your API key into a config file, the agent works, and you move on. Then prompt injection hits, and the attacker who redirected your agent’s output now has your Stripe secret key sitting in the agent’s context. The credential layer exists specifically to prevent that scenario. The agent requests an action. The credential layer authenticates independently. The raw credential never enters the agent’s memory.

When n8n serves as your orchestration layer, its built-in credential vault handles this function for workflow-triggered actions. When the agent acts directly via MCP skills, Composio fills the same role. Either way, the pattern is identical: the agent says what to do, and a separate system authenticates the action.

Layer 5: Observability — The Layer That Became Non-Negotiable

In 2025, observability was a nice-to-have. In 2026, production teams treat it as mission-critical infrastructure — a dedicated layer in the stack. An agent can return a confident, well-formatted, completely wrong answer. Without tracing, you’ll never know.

The shift happened because agent failures are fundamentally different from traditional software failures. A web app either returns a 500 error or it doesn’t. An agent can return a confident, well-formatted, completely wrong answer — and without tracing, you’ll never know. Observability platforms solve this by capturing every LLM call, every tool invocation, every decision point, and making the entire chain inspectable after the fact.

The top platforms that production teams are converging on:

Langfuse — open source, self-hostable, built specifically for LLM application tracing. For teams that already self-host their agent stack, Langfuse fits naturally. It captures traces, evaluations, and cost data without sending anything to a third-party service.

LangSmith — the observability platform from the LangChain ecosystem. If you’re already running LangGraph for orchestration, LangSmith provides native integration for tracing agent graphs, debugging failed runs, and evaluating output quality over time.

Braintrust and Helicone round out the options — Braintrust for evaluation-focused workflows and Helicone for proxy-based observability that sits in front of your LLM API calls without requiring code changes.

“You can’t improve what you can’t observe.”

— Most upvoted observation, r/AI_Agents engineering roadmap threadLayer 6: Deployment and Hardening — Where Theory Meets the Server

Layers 1 through 5 define what your agent stack does and whether you can see what it’s doing. Layer 6 determines whether it stays running — securely, reliably, and without someone on r/sysadmin writing a post about how your deployment is basically malware with a friendly UI.

Hardened Docker containers with UFW firewall rules are the baseline here. Non-root user, read-only root filesystem, dropped Linux capabilities, no Docker socket mount, no-new-privileges flag. On top of that: firewall configuration that restricts outbound traffic to only the services your agent actually needs, monitoring to catch when something goes wrong, and a deployment process that’s repeatable rather than a one-time artisanal SSH session.

The 444-upvote “engineering roadmap” thread reinforced this. A comment captured the failure mode perfectly: “phase 5 logging point is the one most people skip. you can’t improve what you can’t observe. ‘built a slot machine’ is the right mental model for any agent without structured failure logging.”

A slot machine. That’s what an unmonitored agent is. You pull the lever. Sometimes the output is exactly what you wanted. Sometimes it sends the wrong email to the wrong client. Without logging, you can’t tell the difference until a human notices — and by then the damage is done.

The Tool Comparison Matrix

Every layer has options. Here’s what the community is actually using at each one, with the trade-offs that matter:

| Tool | Layer | Strengths | Limitations | Best For |

|---|---|---|---|---|

| OpenClaw | Agent Platform | Self-hosted, MCP skills, full local control | Requires hardening for production use | Founders who want data sovereignty |

| n8n | Orchestration | Visual builder, retry logic, self-hosted | Can’t adapt to novel requests without new workflows | Non-developers, repeatable processes |

| LangGraph | Orchestration | Structured agent graphs, Python-native | Requires engineering skills, steeper learning curve | Developers building custom agent pipelines |

| CrewAI | Orchestration | Role-based multi-agent collaboration, intuitive mental model | Less granular control than LangGraph for complex graphs | Teams coordinating multiple specialized agents |

| AutoGen | Orchestration | Conversational agent patterns, Microsoft ecosystem integration | Narrower community adoption than LangGraph | Microsoft-stack teams, debate/consensus agent workflows |

| Ollama | LLM Runtime | Local inference, zero per-token cost, data stays on-device | Model quality limited by local hardware | Privacy-focused, high-volume low-complexity tasks |

| Model Routing | LLM Runtime | Cost optimization, best-model-per-task | Adds configuration complexity | Production stacks with mixed task types |

| Composio | Credentials | OAuth handling, agent never sees raw keys | Additional dependency in the stack | Any deployment connecting agents to third-party APIs |

| Langfuse | Observability | Open source, self-hostable, LLM-native tracing | Requires self-hosting for full data control | Privacy-focused teams already self-hosting their stack |

| LangSmith | Observability | Native LangGraph/LangChain integration, evaluation suite | Vendor lock-in to LangChain ecosystem | Teams already using LangGraph for orchestration |

| Hardened Docker | Deployment | Production isolation, standard tooling, wide support | Shared host kernel (not VM-level isolation) | Single-tenant deployments, SMBs |

You don’t need every tool in this matrix. You need one tool per layer. The stack breaks when a layer is missing entirely, not when you pick the “wrong” tool within a layer.

The Uncomfortable Insight Nobody Wants to Hear

In the “What AI tools are actually worth learning in 2026?” thread (117 upvotes, 105 comments), one comment cut through every tool recommendation, every framework comparison, and every “best stack” debate. From a builder running agents in production for real estate: “the honest answer nobody wants to hear: the tool is almost irrelevant.”

The tool is almost irrelevant. The infrastructure is what matters.

That’s a hard message to hear if you’ve spent weeks evaluating agent platforms, comparing LangGraph to CrewAI, reading benchmark threads about which model scores higher on MMLU. But the production data backs it up. The 8-month survivor didn’t win because of the agent framework. It won because of the Python orchestrator that treated agents as subprocesses, the logging that made failures observable, and the infrastructure that kept everything running.

“The framework matters less than people think.. genuinely what will determine if an agent is reliably or not is the infrastructure.”

— Top comment (29 upvotes), r/AI_Agents “What AI tools are actually worth learning in 2026?”Infrastructure. Orchestration. Logging. Credential isolation. Hardened deployment. The layers that don’t show up in demo videos. The layers that determine whether your agent is still running reliably 8 months from now or whether you’ve quietly stopped using it because it broke one too many times and you couldn’t figure out why.

This is the paradox of the AI agent stack in 2026: the most valuable layers are the ones that have nothing to do with AI.

The Vibe Coding Trap

A trending thread on r/AI_Agents — “Vibe-coders: time to flex… Real devs: your 15-year skill is basically trivia now. Claude already writes better code than you in seconds. Adapt or perish.” — captures the tension between speed and durability in the agent ecosystem.

Vibe coding gets you to a working demo fast. An LLM writes your agent code, you deploy it, it works in testing, and you ship. The problem shows up 6 weeks later when the API you’re calling changes its response format, the retry logic you never added lets a transient failure cascade into a full outage, and the logging you didn’t configure means you’re guessing at the root cause.

The engineering roadmap thread addressed this directly. The comment about logging was pointed: “phase 5 logging point is the one most people skip. you can’t improve what you can’t observe.” That’s not an anti-AI-coding argument. It’s a pro-infrastructure argument. Write the code however you want — by hand, with Claude, with whatever works. But the layers beneath the code — orchestration, monitoring, security, deployment — are what make it production-grade.

How to Build Your Stack (Without Burning 3 Months)

The order matters. Building top-down (starting with the agent platform) feels natural but leads to the pattern where you get a working demo in a weekend and spend the next 3 months trying to make it production-ready. Building bottom-up (starting with deployment and hardening) is boring but durable.

Here’s the sequence that the community’s production survivors suggest:

- 1 Layer 6 first. Set up your server. Harden your Docker configuration. Configure your firewall. This is a one-time investment that pays dividends on every layer you add above it.

- 2 Layer 5 + Layer 1 + Layer 3. Deploy observability alongside your agent platform and a basic LLM connection. Start with a single model — Ollama for local inference or a cloud API. Wire in Langfuse or LangSmith from day one. Get your first read-only workflow running (morning briefing, email summary). No write actions yet. But from your very first agent call, you can see exactly what’s happening inside the system.

- 3 Layer 4. Set up credential management before you give the agent access to any service that can write, send, or modify data. Composio for direct agent actions. n8n’s credential vault if you’re using n8n as your orchestration layer.

- 4 Layer 2. Add your orchestration layer. LangGraph if you have engineering capacity and want fine-grained control. CrewAI if you’re coordinating multiple role-based agents. n8n if you want visual, low-code workflow automation. Connect your first multi-step workflow. Start with Pattern 1 from the workflow library: agent as router, orchestrator as executor. One workflow stable before you add the next.

- 5 Layer 3 upgrade. Add model routing once you’ve identified which tasks are hitting the API unnecessarily. Route classification and simple extraction to local models. Reserve API calls for complex reasoning.

Where ManageMyClaw Fits in the Stack

ManageMyClaw is Layer 6 as a service — plus the configuration work that connects Layers 1 through 5 into a working system. Docker hardening, firewall configuration, Composio OAuth setup, observability wiring (Langfuse integration), workflow configuration, and monitoring, deployed and tested. The Pro tier ($1,499) includes model routing optimization — Layer 3 tuning that routes tasks to the right model based on complexity, cutting LLM costs without sacrificing output quality on the tasks that matter.

The 6-layer stack works. The question is whether you assemble it yourself over 3 months or get it deployed in under 60 minutes. Both paths lead to the same architecture. One of them lets you start running production workflows this week instead of next quarter.

The Bottom Line

The AI agent stack in 2026 isn’t one tool. It’s 6 layers: agent platform, orchestration, LLM runtime, credentials, observability, and deployment. The framework landscape has settled — LangGraph, CrewAI, and AutoGen dominate orchestration, with n8n complementing for visual automation. Observability (Langfuse, LangSmith) graduated from afterthought to dedicated layer. The tools at each layer are interchangeable. The layers themselves are not optional.

The community has spent the past 8 months proving this through production deployments, debugging sessions, and thread after thread of “here’s what survived vs. what we threw out.” The surviving stacks all share the same architecture: intelligence at the top, infrastructure at the bottom, and a clean separation between what the agent decides and how the system executes.

The tool is almost irrelevant. The infrastructure is what matters. Build the layers. Fill in the tools. Ship something that’s still running 8 months from now.

Frequently Asked Questions

What is the AI agent stack in 2026?

The production AI agent stack in 2026 consists of 6 layers: the agent platform (OpenClaw is the most common), the orchestration layer (LangGraph, CrewAI, AutoGen, or n8n), the LLM runtime (Ollama for local inference plus model routing for cost optimization), credential management (Composio or n8n’s built-in vault), observability (Langfuse, LangSmith, Braintrust, or Helicone for tracing and evaluation), and the deployment/hardening layer (hardened Docker containers with firewall rules). LangGraph v1.0 is in production at Uber, JP Morgan, LinkedIn, and Klarna. 90% of respondents in non-tech companies have either deployed agents or are planning to. Each layer is independently replaceable, but skipping any layer entirely creates the failure modes that cause production deployments to break down within weeks.

Why does the orchestration layer matter more than the agent framework?

The orchestration layer separates intelligence (agent decides what to do) from execution (orchestrator does it reliably). Production deployments that have survived 8+ months all share this separation. Without orchestration, every step in a multi-step workflow involves an LLM call, which introduces hallucination risk, token costs, and failure modes at each step. With orchestration, the agent makes 1–2 decisions and the orchestrator executes the rest deterministically with retry logic, error handling, and audit logging. The community consensus in the r/AI_Agents threads is clear: infrastructure determines reliability, not the agent framework.

What is model routing and how does it reduce costs?

Model routing sends different tasks to different LLMs based on complexity. Simple tasks like classification or data extraction go to small, fast, local models via Ollama at zero per-token cost. Complex tasks like nuanced reasoning, legal summaries, or creative writing go to frontier models like Opus 4.6 via API. This pattern can reduce monthly LLM spend by 50–60% while maintaining output quality on the tasks that require it. The routing layer sits between your agent platform and the models, intercepting requests and deciding which model handles each one.

Do I need to know how to code to build this stack?

Not necessarily. The n8n orchestration path is designed for non-developers — it’s a visual workflow builder with drag-and-drop nodes and built-in error handling. OpenClaw’s agent platform uses natural language configuration. The deployment and hardening layer (Layer 6) is where most non-technical founders need help — Docker configuration, firewall rules, and server security require command-line familiarity. That’s the layer where a managed deployment service removes the technical barrier, handling the infrastructure so you can focus on configuring the workflows that run your business.

What’s the difference between n8n, LangGraph, CrewAI, and AutoGen for orchestration?

n8n is a visual, low-code workflow automation platform — self-hosted, with built-in retry logic and a visual audit trail non-technical team members can read. LangGraph (v1.0, October 2025) is a Python framework for building structured agent graphs with fine-grained control, now proven at enterprise scale at Uber, JP Morgan, LinkedIn, and Klarna. CrewAI focuses on multi-agent collaboration with role-based agents — you define crews with specific roles and goals, and they coordinate on shared tasks. AutoGen is Microsoft’s multi-agent framework for conversational agent patterns where agents debate and review each other’s output. For founders without dedicated engineers, n8n is the practical choice. For teams building custom multi-agent systems, LangGraph provides the most control. Most production systems rely on one primary orchestration framework, not multiple overlapping ones.

How long does it take to set up the full 6-layer stack?

Self-assembled, the community reports 4–12 weeks depending on technical experience. The deployment and hardening layer (Layer 6) alone can take 1–2 weeks if you’re learning Docker security, firewall configuration, and server hardening from scratch. With a managed service, the full stack can be deployed in under 60 minutes because the infrastructure layers are pre-configured and tested. Either path produces the same architecture — the difference is time to first production workflow.

Why do production agent stacks fail?

The most common failure mode is missing layers. A stack with a great agent platform but no orchestration breaks when multi-step workflows fail silently. A stack with no credential isolation breaks when a prompt injection exposes API keys. A stack with no observability becomes a slot machine — agents can return confident, well-formatted, completely wrong answers, and without tracing you’ll never know. The 444-upvote engineering roadmap thread on r/AI_Agents identified structured failure logging as the single most-skipped step. Platforms like Langfuse and LangSmith now make this layer deployable in hours, not weeks. You can’t improve what you can’t observe.