“Run Autonomous, Self-Evolving Agents More Safely with NVIDIA OpenShell.”

— NVIDIA Technical Blog, March 2026

Gartner projects 40% of enterprise applications will include AI agents by end of 2026. NVIDIA shipped NemoClaw with 17 launch partners — Adobe, Salesforce, SAP, CrowdStrike, ServiceNow, Atlassian, Red Hat — to meet that demand with a security-first architecture. But “security-first” is a phrase that appears in every vendor’s slide deck. What does it mean at the implementation level? What are the actual Linux primitives enforcing isolation? How does the policy engine evaluate agent actions? Where does sensitive data route when a HIPAA-regulated agent needs to reason over protected health information?

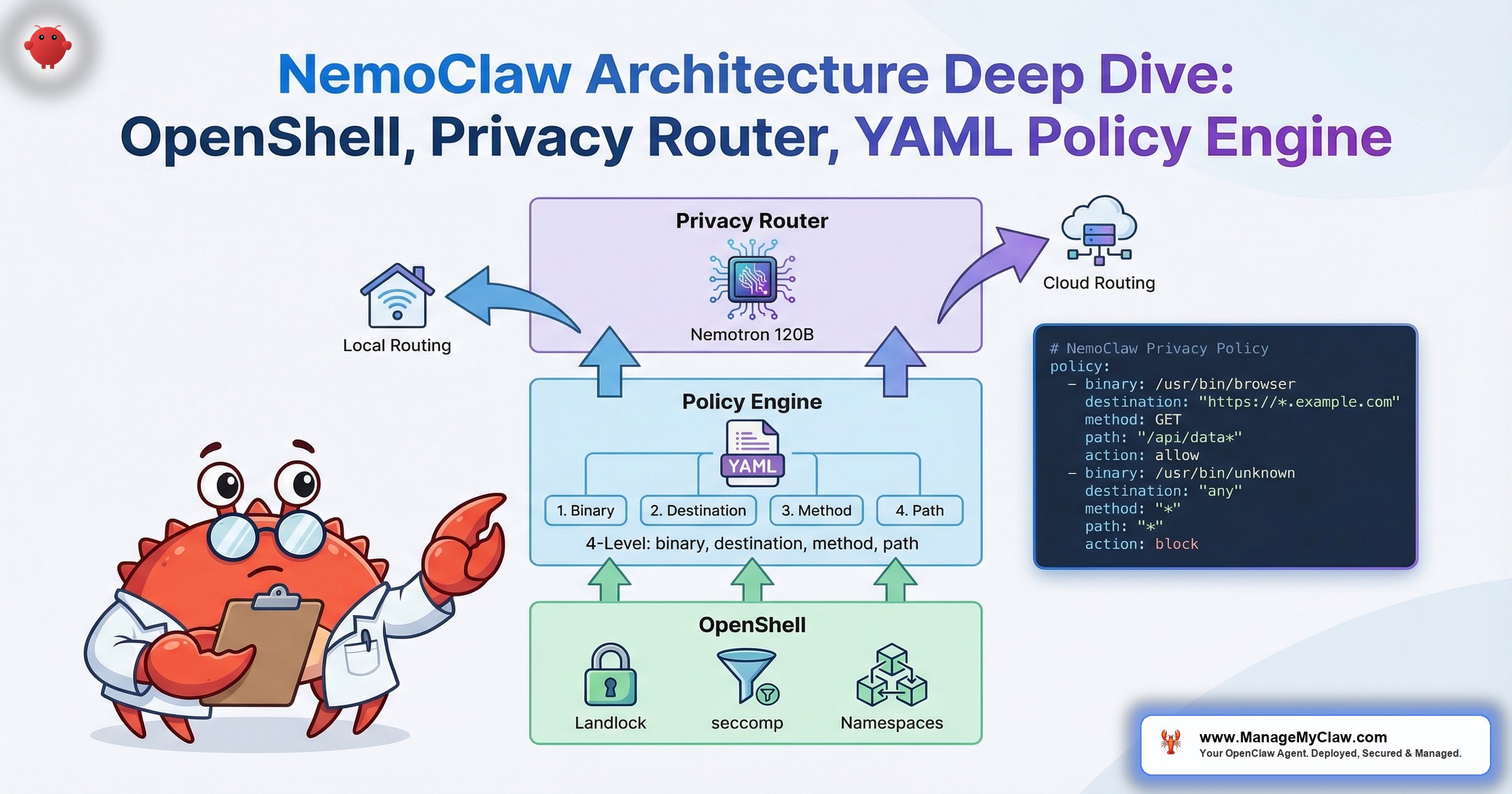

This post is the technical deep dive. We are going to walk through the 3 core components of the NemoClaw security stack — OpenShell’s kernel-level sandbox, the YAML policy engine, and the privacy router — at the architecture level that enterprise architects and security engineers need for evaluation. Code examples included. No marketing abstractions.


OpenShell: 4 Linux Security Primitives in One Runtime

Most AI agent sandboxing discussions start and end with Docker containers. Docker provides namespace isolation and cgroups for resource limits — useful for separating processes, but built for application packaging, not adversarial containment. A misconfigured docker.sock mount, a --privileged flag, or an unpatched kernel CVE can escalate a container compromise to full host access. OpenShell takes a different approach: it layers 4 distinct Linux security primitives to create defense-in-depth isolation purpose-built for autonomous agents.

OpenShell is distributed as a single Rust binary that orchestrates a K3s pod inside a Docker container — lightweight yet production-ready. The architecture is deliberately single-player: one developer, one environment, one gateway. Each session gets an ephemeral sandbox where only explicitly mounted directories are visible to the agent process. The Rust binary handles sandbox lifecycle management, policy enforcement orchestration, and the HTTP CONNECT proxy that mediates all outbound network traffic. This design eliminates the multi-tenant attack surface that complicates traditional container deployments.

The distinction matters. Docker isolation fails when the boundary is misconfigured. OpenShell isolation is deny-by-default across every primitive — misconfiguration means the agent can do less, not more.

Primitive 1: Landlock — Filesystem Access Control

Landlock is a Linux security module available since kernel 5.13 that enables unprivileged processes to restrict their own filesystem access. Unlike AppArmor or SELinux, Landlock does not require root privileges or policy compilation — it is applied programmatically by the process itself. OpenShell uses Landlock to enforce deny-by-default filesystem boundaries for every agent sandbox.

In practice, this means an agent process starts with zero filesystem access. The policy engine then allowlists specific paths: the agent’s working directory, its configuration files, and any explicitly approved data directories. Everything else (/etc/shadow, /root, /var/run/docker.sock, SSH keys, system binaries) is off limits. Landlock denies any attempt to open a path outside the allowlist with a permission error, and because only explicitly mounted directories exist inside the sandbox, most of those host paths are not visible to the agent at all.

AppArmor requires profile compilation and root-level policy management. Landlock is self-restricting — the sandbox runtime can apply it without elevated privileges, and restrictions are irrevocable once set. An agent cannot remove its own Landlock restrictions, even if it achieves code execution within the sandbox. This is a kernel-enforced guarantee, not an application-level promise.
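The deny-by-default path rule described above can be modeled in a few lines of Python. This is a minimal sketch of the decision logic only, not the Landlock API: real Landlock rules are attached to opened directory handles by the kernel, and this string-based model does not account for symlinks. The function name and the example directories are hypothetical.

```python
import posixpath

def is_path_allowed(path: str, allowed_roots: list[str]) -> bool:
    """Deny-by-default check: allow only paths at or beneath an allowed root."""
    # Normalize first so "../" segments cannot step outside an allowed directory.
    normalized = posixpath.normpath(posixpath.join("/", path))
    for root in allowed_roots:
        root = posixpath.normpath(root)
        if normalized == root or normalized.startswith(root.rstrip("/") + "/"):
            return True
    return False

# Hypothetical allowlist for one sandbox session.
ALLOWED = ["/sandbox/workdir", "/sandbox/config"]
```

With this allowlist, a file under /sandbox/workdir is permitted, while /etc/shadow, a traversal such as /sandbox/workdir/../../etc/shadow, and a sibling directory such as /sandbox/workdir-evil are all rejected.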

Static vs. Dynamic Policies: Two Enforcement Models

OpenShell enforces two distinct categories of policy, each with different lifecycle characteristics:

| Policy Type | Scope | When Applied | Modifiable at Runtime? |
|---|---|---|---|
| Static policies | Filesystem access (Landlock), process restrictions (seccomp) | Locked at sandbox creation | No; irrevocable once set |
| Dynamic policies | Network authorization (HTTP CONNECT proxy) | Applied at runtime | Yes; hot-reloadable without sandbox restart |

Static policies are kernel-enforced and permanent for the sandbox session. Once Landlock filesystem restrictions and seccomp syscall filters are applied, they cannot be relaxed, even by the process that created them. Dynamic network policies, by contrast, are evaluated by the HTTP CONNECT proxy and can be updated at runtime. Security teams can therefore tighten or adjust network access without destroying and recreating the sandbox, which matters in incident response when an agent’s API access must be revoked immediately.
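The two lifecycles can be illustrated with a small Python model. This is a sketch under stated assumptions: the class and method names are hypothetical, and a frozen dataclass only imitates the irrevocability that the kernel actually provides for Landlock and seccomp.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class StaticPolicy:
    """Fixed at sandbox creation; frozen so its fields cannot be reassigned."""
    readable_paths: tuple[str, ...]
    allowed_syscalls: tuple[str, ...]

@dataclass
class Sandbox:
    static: StaticPolicy
    allowed_hosts: set[str] = field(default_factory=set)

    def reload_network_policy(self, hosts: set[str]) -> None:
        """Swap the network allowlist without recreating the sandbox."""
        self.allowed_hosts = set(hosts)

    def may_connect(self, host: str) -> bool:
        return host in self.allowed_hosts
```

Calling reload_network_policy(set()) on a running sandbox models the incident-response case: network access is revoked at once, while the filesystem and syscall restrictions stay exactly as they were.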

Primitive 2: seccomp — System Call Filtering

seccomp (secure computing mode) filters which system calls a process can make to the kernel. OpenShell applies a restrictive seccomp profile that blocks dangerous syscalls before the kernel processes them. The blocked list includes:

- mount/umount: prevents the agent from mounting filesystems or escaping chroot boundaries

- ptrace: prevents debugging or inspecting other processes (a common container escape vector)

- reboot/kexec_load: prevents system-level disruption

- unshare/setns: prevents the agent from creating new namespaces or joining existing ones (another escape vector)

- init_module/delete_module: prevents kernel module manipulation

Docker also supports seccomp profiles, but the default Docker seccomp profile allows 300+ syscalls. OpenShell’s profile is narrower — purpose-built for agent workloads where the process needs to read files, make network requests, and execute approved binaries, but nothing more. Every syscall outside the allowlist returns EPERM — the kernel denies the call before execution.
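The allowlist behavior can be sketched as a simple lookup. This is a model of the decision only, not a real seccomp filter (those are BPF programs loaded into the kernel), and the allowlist contents are hypothetical because the text does not publish OpenShell’s actual profile.

```python
import errno

# Hypothetical allowlist; the real OpenShell profile is not given in the text.
ALLOWED_SYSCALLS = frozenset({"read", "write", "openat", "close", "connect", "execve"})

def filter_syscall(name: str) -> int:
    """Return 0 if the call may proceed, or -EPERM if it is outside the allowlist."""
    return 0 if name in ALLOWED_SYSCALLS else -errno.EPERM
```

Every call named in the blocked list above (mount, ptrace, unshare, init_module, and so on) falls through to the EPERM branch simply because it was never added to the allowlist.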

Primitive 3: Network Namespaces — Isolated Network Stack

Each agent sandbox runs in its own Linux network namespace. This is not just IP address isolation — it is a completely separate network stack. The agent has its own routing table, its own iptables rules, and its own DNS resolution. It cannot see the host’s network interfaces, other containers’ traffic, or any services bound to the host’s loopback address.

Outbound traffic from the agent’s namespace routes through the policy engine (covered in the next section), which evaluates every connection against the YAML policy before allowing or denying it. Inbound traffic is blocked by default. The agent cannot bind listening ports on the host. If an agent needs to expose an API or webhook endpoint, the policy engine must explicitly allow it — and the configuration specifies which ports, protocols, and source IP ranges are permitted.
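An inbound exception of the kind described (specific ports, protocols, and source IP ranges) might be evaluated as follows. This is an illustrative sketch: the InboundRule structure and its field names are assumptions, not the NemoClaw configuration schema.

```python
import ipaddress
from dataclasses import dataclass

@dataclass(frozen=True)
class InboundRule:
    port: int
    protocol: str      # "tcp" or "udp"
    source_cidr: str   # for example "10.20.0.0/16"

def inbound_allowed(rules, port: int, protocol: str, source_ip: str) -> bool:
    """Inbound traffic is blocked unless one rule matches port, protocol, and source."""
    addr = ipaddress.ip_address(source_ip)
    for rule in rules:
        if (rule.port == port and rule.protocol == protocol
                and addr in ipaddress.ip_network(rule.source_cidr)):
            return True
    return False

# Hypothetical rule: a webhook endpoint reachable only from one internal range.
RULES = [InboundRule(port=8443, protocol="tcp", source_cidr="10.20.0.0/16")]
```

An empty rule list denies everything, which matches the default described above.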

Primitive 4: Process Isolation — Separate Process Space

The agent runs in its own PID namespace and process space. It cannot see or interact with processes outside its sandbox — including the policy engine process. This is the architectural decision that makes the entire stack credible: the component that enforces security policy is unreachable by the component it governs.

Compare this to application-layer guardrails. Claude Code, Cursor, and vanilla OpenClaw all enforce safety rules inside the agent process. A sufficiently sophisticated prompt injection or tool-use exploit could theoretically modify those rules. OpenShell’s policy engine runs in a separate process that the agent literally cannot address.

OWASP ASI02 (Tool Misuse & Exploitation) specifically calls out the risk of agents overriding their own safety controls. When the control plane lives inside the agent’s process space, a compromised agent can modify it. When the control plane runs out-of-process with kernel-enforced isolation, the agent cannot access, modify, or terminate it. This is the difference between a policy and an enforcement boundary. See our OWASP Agentic Top 10 mapping for how NemoClaw addresses each risk category.

How the 4 Primitives Work Together

No single primitive provides complete isolation. The strength of OpenShell’s approach is the layering:

| Primitive | What It Controls | Failure Mode Without It |
|---|---|---|
| Landlock | Filesystem paths the agent can read/write | Agent reads SSH keys, credentials, host configs |
| seccomp | System calls the agent can make to the kernel | Agent mounts filesystems, debugs processes, loads kernel modules |
| Network namespaces | Network interfaces, routing, DNS | Agent exfiltrates data to arbitrary endpoints, scans internal network |
| PID namespaces | Process visibility and interaction | Agent kills the policy engine, inspects other agents’ memory |

An agent that escapes Landlock restrictions (hypothetically) still cannot make dangerous syscalls (seccomp). An agent that finds a seccomp bypass still cannot reach the host network (namespace isolation). An agent that somehow tunnels network traffic still cannot see or kill the policy engine (PID isolation). Each layer covers the failure modes of the others.

“The key insight of OpenShell is that AI agents are adversarial workloads. They generate and execute code, make network requests, and interact with external APIs — all behaviors you’d flag as suspicious in any other process.”

— NVIDIA Technical Blog, “Run Autonomous, Self-Evolving Agents More Safely with NVIDIA OpenShell”

The YAML Policy Engine: 4-Level Evaluation, Out-of-Process Enforcement

The policy engine is the decision layer of the NemoClaw stack. Every action the agent attempts — every file read, every network request, every binary execution — passes through the policy engine before the operating system permits it. The engine runs as a separate process that the sandboxed agent cannot access, modify, or terminate. Policies are defined in YAML configuration files at policies/openclaw-sandbox.yaml.

The 4-Level Evaluation Model

The policy engine evaluates every agent action against 4 hierarchical levels. Each level narrows the scope of what is permitted:

- Binary: What program is being executed? Only approved binaries can run. If the agent attempts to execute a binary not in the allowlist, the request is denied before any further evaluation.

- Destination: What host is being contacted? Outbound connections are evaluated against a domain allowlist. The agent can reach api.slack.com but not attacker-c2.example.com.

- Method: What HTTP method is being used? An agent may be allowed to GET from the Jira API but not DELETE. Read access does not imply write access.

- Path: What URL path is being accessed? An agent can read /api/v2/issues but not /api/v2/admin/users. Path-level granularity prevents privilege escalation through API exploration.

This 4-level hierarchy means a single policy can express: “The agent may execute curl to api.slack.com using POST on /api/chat.postMessage — and nothing else.” That is granularity that Docker capabilities, AppArmor profiles, and AGENTS.md allowlists cannot express in a single rule.
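The hierarchy can be sketched as a filter that narrows the candidate rules one level at a time. This is a minimal model of the evaluation order described above, assuming exact string matching; the Request and Rule types are hypothetical and not part of NemoClaw.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Request:
    binary: str
    destination: str
    method: str
    path: str

@dataclass(frozen=True)
class Rule:
    binary: str
    destination: str
    method: str
    path: str

LEVELS = ("binary", "destination", "method", "path")

def evaluate(request: Request, rules: list[Rule]) -> tuple[bool, str]:
    """Check levels in order; the first level with no matching rule denies."""
    candidates = rules
    for level in LEVELS:
        candidates = [r for r in candidates
                      if getattr(r, level) == getattr(request, level)]
        if not candidates:
            return False, f"denied at {level} level"
    return True, "allowed"

# The single rule quoted in the text: curl to api.slack.com, POST, one path.
RULES = [Rule("curl", "api.slack.com", "POST", "/api/chat.postMessage")]
```

Because each level filters the rules that survived the previous one, a request cannot mix the binary from one rule with the destination from another.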

Under the Hood: OPA, Rego, and TLS Termination

The network policy engine is not a simple allowlist parser. Under the hood, OpenShell’s HTTP CONNECT proxy uses Open Policy Agent (OPA) with policies written in Rego, a purpose-built policy language for cloud-native authorization. This is the same policy framework used by Kubernetes admission controllers, Envoy proxies, and Terraform Cloud — battle-tested at scale across the cloud-native ecosystem.

The proxy terminates TLS on every outbound connection to inspect each HTTP request at the method level. This is how L7 enforcement works: the proxy sees the full HTTP request (method, host, path, headers) before forwarding it to the destination. Without TLS termination, the proxy would only see encrypted traffic and could enforce at the IP/port level but not at the method or path level. This is the architectural tradeoff that enables the 4-level evaluation model — and it is worth understanding because it means the proxy holds the TLS certificates for outbound connections.
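To make the tradeoff concrete, the sketch below contrasts what a proxy can read from a CONNECT request line with what it can read from the request inside the tunnel once TLS is terminated. The two parser functions are illustrative helpers, not OpenShell code.

```python
def parse_connect(line: str) -> dict:
    """Parse a CONNECT request line such as 'CONNECT api.slack.com:443 HTTP/1.1'."""
    verb, authority, _version = line.split()
    if verb != "CONNECT":
        raise ValueError("not a CONNECT request")
    host, _, port = authority.rpartition(":")
    # Without TLS termination, host and port are all the proxy learns.
    return {"host": host, "port": int(port)}

def parse_inner_request(line: str) -> dict:
    """Parse the request line that is readable only after TLS termination."""
    method, path, _version = line.split()
    return {"method": method, "path": path}
```

The first function yields enough for destination-level decisions; method-level and path-level rules need the output of the second.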

```rego
# Allow git to clone from GitHub only
allow {
    input.binary == "git"
    input.host == "github.com"
    input.port == 443
}

# Allow curl to POST to Slack API, deny everything else
allow {
    input.binary == "curl"
    input.host == "api.slack.com"
    input.method == "POST"
    startswith(input.path, "/api/chat.")
}

# Default deny: strict-by-default
default allow = false
```

The Rego rules above demonstrate per-binary, per-endpoint, per-method control. The git binary can reach GitHub on port 443. The curl binary can POST to Slack’s chat APIs. Everything else (every binary, every host, every method not explicitly authorized) is denied. The sandbox can only reach endpoints that are explicitly allowed. Full network policy reference: docs.nvidia.com/nemoclaw/latest/reference/network-policies.html. For a walkthrough of writing your first policy: OpenShell first network policy tutorial.

YAML policies handle the static declaration layer well (filesystem access, binary allowlists). But network authorization requires conditional logic — rules that depend on the combination of binary, host, port, method, and path. Rego is a declarative policy language purpose-built for these multi-dimensional authorization decisions. It supports pattern matching, string operations, and logical composition without the fragility of imperative scripting. The YAML configuration layer and the Rego network policy layer are complementary, not competing — YAML defines what the sandbox looks like, Rego governs what it can reach. See the approve/deny flow documentation for the complete decision pipeline.

Example: Slack Integration Policy

Here is what an enterprise Slack policy looks like in the YAML policy engine. This configuration allows the agent to post messages and read channel history, but blocks file uploads, user management, and admin APIs:

```yaml
# Slack integration: read channels, post messages only
- name: slack-agent-policy
  binary:
    allow:
      - curl
      - node
  destination:
    allow:
      - api.slack.com
      - files.slack.com
  method:
    allow:
      - GET
      - POST
  path:
    allow:
      - /api/chat.postMessage
      - /api/conversations.history
      - /api/conversations.list
    deny:
      - /api/admin.*
      - /api/files.upload
      - /api/users.admin.*
```

Every field is explicit. There is no implicit inheritance, no “allow all unless denied.” If a binary, destination, method, or path is not in the allow list, the request is blocked and logged. This is deny-by-default at every evaluation level.

Example: Healthcare HIPAA-Compliant Policy

For regulated industries, the policy engine enforces data handling requirements at the infrastructure layer — not the application layer. This example restricts a healthcare agent to only access an internal EHR API and route all inference through the privacy router (covered in the next section):

```yaml
# Healthcare agent: PHI restricted to local inference
- name: hipaa-clinical-notes
  binary:
    allow:
      - node
      - python3
  destination:
    allow:
      - ehr.internal.hospital.org
      - localhost  # Local Nemotron inference only
    deny:
      - api.openai.com
      - api.anthropic.com
      - "*.amazonaws.com"
  method:
    allow:
      - GET
      - POST
    deny:
      - DELETE
      - PUT
  path:
    allow:
      - /api/v1/patients/*/notes
      - /api/v1/patients/*/vitals
    deny:
      - /api/v1/admin/*
      - /api/v1/patients/*/billing
```

Notice the explicit denial of cloud LLM endpoints. This agent can reason over clinical notes using local Nemotron models (localhost), but the network policy prevents PHI from reaching OpenAI, Anthropic, or AWS endpoints. This is not a prompt-level instruction that the agent could theoretically ignore. It is a rule enforced outside the agent’s process: the sandbox’s only route out is the proxy, and the proxy refuses the request. The connection will not be established.
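The interplay of allow and deny lists in the policy above can be sketched as follows. Two assumptions are made here that the text does not confirm: that wildcards use shell-style glob semantics (modeled with Python’s fnmatch, where * also matches slashes), and that a deny match takes precedence over an allow match.

```python
from fnmatch import fnmatchcase

def matches_any(value: str, patterns: list[str]) -> bool:
    return any(fnmatchcase(value, pattern) for pattern in patterns)

def decide(value: str, allow: list[str], deny: list[str]) -> bool:
    """Deny wins over allow; anything that matches neither is denied by default."""
    if matches_any(value, deny):
        return False
    return matches_any(value, allow)

# Lists copied from the example policy above.
DESTINATIONS = {
    "allow": ["ehr.internal.hospital.org", "localhost"],
    "deny": ["api.openai.com", "api.anthropic.com", "*.amazonaws.com"],
}
PATHS = {
    "allow": ["/api/v1/patients/*/notes", "/api/v1/patients/*/vitals"],
    "deny": ["/api/v1/admin/*", "/api/v1/patients/*/billing"],
}
```

Under these assumptions, decide("example.com", **DESTINATIONS) is false even though example.com appears in no deny list, which shows that the deny entries document intent while the default does the real blocking.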


Policy Presets: Production-Ready Defaults

Writing YAML policies from scratch for every integration is exactly the kind of work that slows enterprise adoption. NemoClaw ships with a policies/presets/ directory containing pre-built, audited policies for common enterprise integrations:

| Preset | Allowed Destinations | Key Restrictions |
|---|---|---|
| Slack | api.slack.com, files.slack.com | No admin APIs, no workspace management |
| Jira | *.atlassian.net | Read issues, create comments; no project deletion |
| Discord | discord.com/api | Message posting; no server management |
| npm | registry.npmjs.org | Package install only; no publish, no token management |
| PyPI | pypi.org, files.pythonhosted.org | Package install only; no upload |
| Docker | registry.docker.io | Image pull only; no push, no build context access |
| Telegram | api.telegram.org | Send messages; no bot management APIs |
| HuggingFace | huggingface.co, cdn-lfs.huggingface.co | Model download only; no upload, no token scope |
| Outlook | graph.microsoft.com | Read/send mail; no calendar delete, no admin consent |

Each preset defines all 4 levels (binary, destination, method, path) and follows the OWASP Principle of Least Agency — the agent gets the minimum access required for its integration and nothing more. Enterprise teams can compose presets for multi-integration agents, override specific rules, and version-control the resulting policy alongside their infrastructure-as-code.

Presets are a starting point, not a destination. As your agents’ workflows evolve, policies need regular review to ensure permissions still align with the Principle of Least Agency. An agent that was authorized to post Slack messages 6 months ago may now also need Jira access — or may no longer need Slack at all. Quarterly policy audits are part of any serious governance program.
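One design question when composing presets is how their allowlists combine. The sketch below assumes a composition rule that the text does not specify: a request is allowed only if a single preset allows all of its levels, so that the binary from one preset cannot be paired with the destination of another. The Preset type and the example values are hypothetical, and only three of the four levels are modeled for brevity.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Preset:
    name: str
    binaries: frozenset
    destinations: frozenset
    methods: frozenset

def allowed_by(preset: Preset, binary: str, destination: str, method: str) -> bool:
    return (binary in preset.binaries
            and destination in preset.destinations
            and method in preset.methods)

def compose(presets):
    """Return a checker that allows a request only if one preset allows all of it."""
    def check(binary: str, destination: str, method: str) -> bool:
        return any(allowed_by(p, binary, destination, method) for p in presets)
    return check

# Hypothetical preset contents for illustration.
SLACK = Preset("slack", frozenset({"curl"}), frozenset({"api.slack.com"}),
               frozenset({"GET", "POST"}))
NPM = Preset("npm", frozenset({"npm"}), frozenset({"registry.npmjs.org"}),
             frozenset({"GET"}))
check = compose([SLACK, NPM])
```

A naive union of the two allowlists would let curl reach registry.npmjs.org; keeping each preset intact avoids that widening, which is worth verifying during policy review.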

Audit Trail: Every Decision Logged

Every policy evaluation produces an audit log entry: timestamp, agent identity, requested action, policy match, and decision (allow/deny). For SOC2 evidence collection, HIPAA audit requirements, and EU AI Act transparency obligations, this is not optional — it is the evidentiary foundation. The audit trail answers the question every compliance officer asks: “Can you prove what this AI agent did and didn’t do on Tuesday at 3:14 PM?”

Because the policy engine runs out-of-process, the audit trail is tamper-resistant. The agent cannot access, modify, or delete its own logs. If the agent is compromised, the audit trail of its actions remains intact for forensic analysis.
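An audit record with the fields listed above might look like the following. The hash chaining is an illustrative technique added here to show one way a log can be made tamper-evident; the text does not say that NemoClaw uses it, and the function names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash for the first record in a chain

def make_entry(prev_hash, agent, action, policy, decision, timestamp=None):
    """Build one audit record and chain it to the previous record's hash."""
    record = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "policy": policy,
        "decision": decision,
        "prev_hash": prev_hash,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    return record

def verify_chain(entries) -> bool:
    """Recompute each hash; an edited record or a gap in the sequence fails."""
    prev = GENESIS
    for entry in entries:
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if entry["prev_hash"] != prev:
            return False
        if hashlib.sha256(payload).hexdigest() != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

Note that this scheme does not detect records dropped from the end of the log; that would require anchoring the latest hash somewhere outside the log itself.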

Privacy Router: Inference Routing for Data Sovereignty

The privacy router solves a fundamental tension in enterprise AI agent deployment: organizations need the reasoning capability of frontier cloud models (Claude, GPT-5), but they cannot send protected health information, personally identifiable information, financial records, or trade secrets to third-party APIs. The privacy router intercepts every inference call and makes a routing decision based on the sensitivity of the data involved.

Routing Architecture

The privacy router sits between the agent and all LLM endpoints. It intercepts every inference request and evaluates it through 3 stages:

1. Classification: The router analyzes the content of the inference request for sensitive data categories: PHI, PII, financial data, credentials, intellectual property. Classification uses pattern matching, named entity recognition, and configurable rules.

2. Redaction / Routing Decision: Based on the classification, the router either (a) strips sensitive content and routes to a cloud model with redacted context, (b) routes entirely to a local Nemotron model running on-premises, or (c) passes through to the cloud model if no sensitive data is detected.

3. Audit Logging: Every routing decision is logged: what data was classified as sensitive, which model received the request, what was redacted, and what was routed locally. This log integrates with the policy engine’s audit trail for a unified compliance record.
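The first two stages can be sketched with simple pattern matching. Everything here is an assumption for illustration: the regular expressions, the category names, and the rule that PHI always stays local. A real classifier would also use named entity recognition and site-specific rules, and stage 3 (logging) is omitted for brevity.

```python
import re

# Hypothetical detectors, far simpler than a production classifier.
PATTERNS = {
    "PHI": re.compile(r"\bMRN[:\s]*\d{6,}\b"),
    "PII": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-like number
    "CREDENTIAL": re.compile(r"\b(?:api[_-]?key|password)\s*[:=]\s*\S+",
                             re.IGNORECASE),
}
LOCAL_ONLY = {"PHI"}  # categories that must never leave the premises

def classify(text: str) -> set[str]:
    """Stage 1: return the sensitive categories detected in the text."""
    return {label for label, rx in PATTERNS.items() if rx.search(text)}

def route(text: str) -> tuple[str, str]:
    """Stage 2: return (target, payload) as 'local', 'cloud-redacted', or 'cloud'."""
    labels = classify(text)
    if labels & LOCAL_ONLY:
        return "local", text
    if labels:
        redacted = text
        for label in labels:
            redacted = PATTERNS[label].sub(f"[{label} REDACTED]", redacted)
        return "cloud-redacted", redacted
    return "cloud", text
```

The ordering matters: the local-only check runs before redaction, so a request containing PHI is never sent to the cloud, even in redacted form.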

This is the architectural innovation that makes NemoClaw viable for regulated industries. A healthcare system can use Claude for general administrative reasoning while keeping patient data on local Nemotron models — with a provable audit trail showing that PHI never left the premises.

Healthcare Example: HIPAA-Compliant Agent Workflow

Consider a clinical notes summarization agent deployed at a hospital. The workflow processes patient records and produces summaries for physician review. Here is how the privacy router handles a single inference cycle:

| Data Type | Classification | Routing Decision | Model |
|---|---|---|---|
| Patient name, DOB, MRN | PHI: identifiers | Route locally | Nemotron (on-premises) |
| Diagnosis codes, lab results | PHI: clinical | Route locally | Nemotron (on-premises) |
| “Summarize in SOAP format” | Non-sensitive instruction | Pass through to cloud | Claude / GPT-5 |
| Hospital department structure | Non-sensitive context | Pass through to cloud | Claude / GPT-5 |
| Physician schedule reference | PII: staff identifiers | Redact, then route to cloud | Claude / GPT-5 (redacted) |

The result: clinical reasoning happens on local hardware with full PHI access. General-purpose reasoning (formatting, structure, language quality) leverages frontier cloud models without exposing protected data. The audit log records every routing decision, providing HIPAA audit evidence that PHI never traversed a cloud API.

The privacy router’s local routing requires NVIDIA GPU hardware running Nemotron models. Minimum system requirements: Linux, 4 vCPU, 8 GB RAM, Node.js 20+, Docker. For production clinical workloads, NVIDIA recommends higher-tier hardware. Organizations without on-premises GPU infrastructure can use NVIDIA DGX Cloud or the Dell Pro Max GB10 (DGX Spark) at $4,756.84 for a dedicated inference appliance.

Nemotron 3 Super 120B: The Local Inference Engine

The privacy router’s local routing capability depends on having a model that can handle complex enterprise reasoning tasks without cloud API calls. NVIDIA’s answer is Nemotron 3 Super 120B — a model architecturally optimized for the latency and throughput demands of local agent inference.


Nemotron 3 Super uses a LatentMoE architecture that interleaves three distinct layer types: Mamba-2 (state-space), Mixture-of-Experts (MoE), and traditional Attention layers. Of the 120B total parameters, only 12B are active for each token, which directly addresses the “thinking tax” that makes large models impractical for local deployment. The MoE routing means you get 120B-class reasoning quality at 12B-class compute cost.
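The arithmetic behind that claim is worth spelling out, because MoE reduces compute but not storage. The sketch below uses the two parameter counts from the text; the figure of 0.5 bytes per parameter is a rough assumption for 4-bit weights that ignores scale factors, activations, and the KV cache.

```python
TOTAL_PARAMS = 120e9          # total parameters, from the text
ACTIVE_PARAMS = 12e9          # active parameters via MoE routing, from the text
BYTES_PER_PARAM_4BIT = 0.5    # assumption: 4-bit weights, overhead ignored

def active_fraction(total: float, active: float) -> float:
    """Share of the weights that take part in a forward pass."""
    return active / total

def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Approximate memory needed just to hold the weights, in GB."""
    return params * bytes_per_param / 1e9
```

Under these assumptions about 10% of the weights participate in each forward pass, yet all 120B must remain resident, which is roughly 60 GB at 4 bits. Hardware sizing for local inference should therefore follow the total parameter count, not the active one.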

Key architectural features for enterprise agent workloads:

- Native 1M-token context window — SSMs (Mamba-2 layers) provide linear-time complexity for long-context processing, enabling agents to reason over entire codebases, legal documents, or clinical records without chunking

- Multi-Token Prediction (MTP) layers — predicts multiple tokens per forward pass, improving both generation speed and output quality for agent tool-use sequences

- NVFP4 quantization on Blackwell — trained on 25 trillion tokens using NVIDIA’s FP4 format, optimized for Blackwell GPU architecture

- 2.2x throughput vs GPT-OSS-120B and 7.5x vs Qwen3.5-122B on 8k/16k context settings — verified benchmarks from NVIDIA’s technical blog

Nemotron 3 Super is available through multiple channels: Ollama for local development, HuggingFace for custom fine-tuning, and NVIDIA NIM for production deployment. For NemoClaw deployments, the Ollama integration is the most straightforward path to local inference — though it comes with some known routing limitations we address in the current issues section below.

Privacy-sensitive agent workloads often involve large documents: full patient records, multi-year financial statements, legal discovery sets. Without sufficient context length, the agent must chunk these documents and make multiple inference calls — increasing the attack surface for data leakage and complicating the privacy router’s routing decisions. Nemotron 3 Super’s native 1M-token window means the agent can process entire documents in a single local inference call. No chunking, no partial cloud routing, no data fragmentation across providers.

Financial Services: Data Residency Compliance

For financial institutions subject to data residency requirements (GDPR Article 44, various national banking regulations), the privacy router provides a provable mechanism for keeping regulated data within jurisdictional boundaries. An agent processing EU customer transaction data routes all PII and financial records to local models within the organization’s EU data center. General reasoning tasks — formatting, calculations, template generation — route to cloud models. The audit trail proves, per-request, where each piece of data was processed.

This is not theoretical. EU AI Act full enforcement begins August 2026. Every AI agent deployment touching EU personal data will need a documented data processing architecture that proves compliance. The privacy router’s audit trail is purpose-built for that evidentiary requirement.

From Install to Sandbox: What the Deployment Looks Like

NemoClaw installs with a single command:

```shell
curl -fsSL https://www.nvidia.com/nemoclaw.sh | bash
```

The installer launches a guided onboard wizard that walks through 3 configuration steps:

- Sandbox creation — configures the OpenShell sandbox with Landlock, seccomp, network namespaces, and process isolation for your agent

- Inference configuration — sets up the privacy router, configures local Nemotron models if GPU hardware is available, and establishes cloud model routing rules

- Security policy application — applies baseline YAML policies from presets and prompts for custom rules based on your agent’s integrations

System Requirements

- Operating System: Linux (kernel 5.13+ for Landlock support)

- CPU: 4 vCPU minimum

- Memory: 8 GB RAM minimum

- Runtime: Node.js 20+, Docker

- GPU: NVIDIA GPU required for local Nemotron inference (optional for policy engine and sandbox)

- Status: Alpha / early-access (March 2026)
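A quick preflight check against the requirements above could look like this. It is a sketch, not part of the NemoClaw installer: the function names are hypothetical, and it only covers the kernel, CPU, and memory thresholds from the list.

```python
import re

MIN_KERNEL = (5, 13)  # Landlock requires Linux 5.13 or newer
MIN_VCPU = 4
MIN_RAM_GB = 8

def parse_kernel(release: str) -> tuple[int, int]:
    """Extract (major, minor) from a release string such as '6.8.0-45-generic'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if not match:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))

def preflight(release: str, vcpus: int, ram_gb: float) -> list[str]:
    """Return the unmet requirements; an empty list means the host qualifies."""
    problems = []
    if parse_kernel(release) < MIN_KERNEL:
        problems.append("kernel older than 5.13 (no Landlock)")
    if vcpus < MIN_VCPU:
        problems.append("fewer than 4 vCPUs")
    if ram_gb < MIN_RAM_GB:
        problems.append("less than 8 GB RAM")
    return problems
```

On a real host the inputs could come from platform.release() and os.cpu_count() in the Python standard library; they are passed in as arguments here so the check can be exercised without touching the machine.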

The installation is straightforward. The production hardening is not. The wizard produces a baseline configuration that is functional but not enterprise-ready. Customizing YAML policies for your organization’s specific integration landscape, configuring the privacy router’s classification rules for your data types, integrating audit logs with your existing SIEM, and setting up CrowdStrike Falcon AIDR integration — that is where the 2-6 week implementation timeline comes from.

CrowdStrike, JetPatch, and the Enterprise Governance Ecosystem

NemoClaw does not exist in isolation. NVIDIA launched with 17 enterprise partners, and 2 integrations are particularly relevant for security architects evaluating the stack.

CrowdStrike Falcon AIDR

CrowdStrike’s Secure-by-Design Blueprint for NemoClaw extends Falcon’s AI Detection and Response (AIDR) to cover every prompt, response, and agent action. This means the same SOC team that monitors your endpoint fleet can monitor your AI agents through the same Falcon console they already use. No separate tool, no separate alert pipeline, no separate incident response workflow.

The integration secures 3 surfaces: the agent’s input (prompts and instructions), the agent’s output (responses and actions), and the agent’s behavior (tool calls, network requests, file operations). CrowdStrike’s 2026 Global Threat Report documented an 89% year-over-year increase in AI-enabled attacks. Falcon AIDR applies CrowdStrike’s threat intelligence to the agent’s activity stream in real time.

JetPatch Enterprise Control Plane

JetPatch provides the operational governance layer for NemoClaw deployments at scale. Where the YAML policy engine governs individual agent behavior, JetPatch governs the fleet: deployment orchestration, patch management, compliance reporting, and operational visibility across all NemoClaw instances in an organization. For enterprises running 10, 50, or 100+ agents across departments, the control plane is what keeps the governance model manageable.

“48% of cybersecurity professionals identify agentic AI as the #1 attack vector heading into 2026.”

— Dark Reading Poll, 2026

NemoClaw vs. Vanilla OpenClaw vs. Hardened Docker: Security Architecture Comparison

Enterprise architects evaluating NemoClaw need to understand how it compares to existing deployment approaches at the architecture level — not the marketing level. Here is a layer-by-layer comparison:

| Security Layer | Vanilla OpenClaw | Hardened Docker | NemoClaw + OpenShell |
|---|---|---|---|
| Filesystem isolation | None by default | Read-only root, bind mounts | Landlock (kernel-enforced, irrevocable) |
| Syscall filtering | None | Docker default seccomp (300+ allowed) | Purpose-built seccomp (agent-specific allowlist) |
| Network isolation | Host network | Docker bridge + iptables | Dedicated network namespace + policy-evaluated egress |
| Process isolation | Shared process space | PID namespace (container-level) | PID namespace + out-of-process policy engine |
| Policy enforcement | AGENTS.md (in-process) | Docker capabilities (container-level) | YAML engine, 4-level evaluation (out-of-process) |
| Policy granularity | Tool allowlist | Capability flags | Binary + destination + method + path |
| Data privacy | All data to 1 provider | All data to 1 provider | Privacy router: local/cloud split by sensitivity |
| Audit trail | Agent logs (if configured) | Docker logs | Tamper-resistant, per-action decision log |
| OWASP ASI coverage | Limited (ASI02 partial) | ASI02, ASI06 partial | ASI01-ASI10 addressable via policy + sandbox |

The column that matters most for security evaluation is the enforcement boundary. Vanilla OpenClaw’s safety controls are agent-internal — a compromised agent can potentially override them. Docker’s controls are container-level — stronger than in-process, but still sharing the host kernel with known escape vectors. NemoClaw’s controls are kernel-enforced and out-of-process — the agent cannot reach the component that governs it.

For a deeper comparison of sandboxing approaches including MicroVMs and gVisor, see our AI Agent Sandboxing in 2026 analysis.

What Enterprise Architects Should Know Before Committing

NemoClaw’s architecture is sound. Its current maturity level is not production-grade for every use case. An honest evaluation requires acknowledging both.

- Alpha software — NemoClaw launched March 16, 2026. APIs, policy formats, and configuration structures may change before GA.

- Linux-only — OpenShell’s kernel primitives (Landlock, seccomp, network namespaces) are Linux-native. No macOS or Windows support.

- GPU dependency for privacy router — Local Nemotron inference requires NVIDIA GPU hardware. Without it, the privacy router cannot route sensitive data locally.

- Limited production track record — 17 launch partners committed, but field-tested deployments are weeks old, not years old.

Known Issues: What the GitHub Tracker Reveals

Alpha software means open issues. Enterprise teams evaluating NemoClaw should review the GitHub issue tracker for current pain points. As of March 2026, these are the issues most likely to affect deployment:

| Issue | Impact | Status |
|---|---|---|
| #385: Local Ollama inference routing fails from sandbox | Agents cannot reach locally-hosted Ollama models from inside the sandbox; this blocks the primary local inference path | Open |
| #336: WSL2 sandbox cannot reach Windows-hosted Ollama | WSL2 network namespace isolation prevents cross-OS model access; this affects Windows development environments | Open |
| #481: Cannot connect Discord or Telegram to NemoClaw | Messaging platform integrations fail despite policy presets existing for both services | Open |
| #272: Network policy presets missing binaries restriction | Some presets define destination rules but omit binary-level restrictions, creating a gap in the 4-level enforcement model | Open |
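The gap described in issue #272 is straightforward to lint for before a preset reaches a sandbox. The following is a minimal sketch, assuming presets have already been loaded into plain dictionaries with hypothetical `binaries` and `destinations` keys. The real preset schema may differ, and the preset names and values here are invented for illustration.

```python
from typing import Any


def presets_missing_binaries(presets: dict[str, dict[str, Any]]) -> list[str]:
    """Return names of presets that declare destinations but no binary allowlist.

    The key names ("binaries", "destinations") are assumptions for this
    sketch, not the documented NemoClaw preset schema.
    """
    flagged = []
    for name, rules in presets.items():
        has_destinations = bool(rules.get("destinations"))
        has_binaries = bool(rules.get("binaries"))
        if has_destinations and not has_binaries:
            flagged.append(name)
    return sorted(flagged)


# Hypothetical presets: the second one omits the binary-level restriction.
EXAMPLE_PRESETS = {
    "slack": {"binaries": ["/usr/bin/curl"], "destinations": ["api.slack.com"]},
    "telegram": {"destinations": ["api.telegram.org"]},
}

if __name__ == "__main__":
    print(presets_missing_binaries(EXAMPLE_PRESETS))
```

A check like this fits naturally into CI for the policy repository, so that a preset with a missing level is caught in review rather than in production.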

Additionally, the HTTP CONNECT proxy has been reported to return 403 errors on valid tunnels in certain configurations — a symptom of TLS termination edge cases where the proxy’s certificate chain does not match the destination’s expected handshake. These are integration-layer issues, not architectural flaws, but they directly affect deployment timelines.

If local Ollama inference routing does not work from inside the sandbox, the privacy router cannot route sensitive data to local models. This means the core value proposition — keeping PHI, PII, and financial data on local hardware while using cloud models for general reasoning — is currently blocked for Ollama-based deployments. Organizations evaluating NemoClaw for regulated workloads should verify this issue is resolved before committing to a privacy-router-dependent architecture. NVIDIA NIM deployments are not affected by this specific issue.
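One way to verify this during a pilot is to probe the Ollama endpoint from inside the sandbox. The sketch below uses only the Python standard library and Ollama's `/api/tags` model-listing endpoint on its default port 11434. The function name and the injectable `opener` parameter are our own additions for testability, and whether the request is permitted at all depends on the network policy in your deployment.

```python
import json
import urllib.error
import urllib.request


def ollama_reachable(
    base_url: str = "http://127.0.0.1:11434",
    timeout: float = 3.0,
    opener=urllib.request.urlopen,
) -> bool:
    """Return True if an Ollama server answers /api/tags with a model list.

    Any connection failure, timeout, proxy rejection, or non-JSON body is
    treated as "not reachable" rather than raised.
    """
    try:
        with opener(f"{base_url}/api/tags", timeout=timeout) as resp:
            body = json.load(resp)
    except (urllib.error.URLError, OSError, ValueError):
        # URLError covers refused connections and HTTP errors such as 403;
        # ValueError covers a body that is not valid JSON.
        return False
    return isinstance(body, dict) and "models" in body


if __name__ == "__main__":
    status = "reachable" if ollama_reachable() else "NOT reachable"
    print(f"Ollama is {status} from this environment")
```

Run it once on the host and once inside the sandbox. If the host succeeds and the sandbox fails, the deployment is likely hitting the routing problem tracked in issue #385.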

That said, the core security primitives — Landlock, seccomp, network namespaces — are battle-tested Linux kernel features with years of production history in non-agent contexts. OpenShell is applying proven isolation technology to a new category of workload. The risk is in the integration layer and tooling maturity, not in the underlying security model.

Organizations that build governance around these primitives now will be better positioned for production when NemoClaw reaches GA. Organizations that wait risk falling 6 to 12 months behind on policy development, compliance documentation, and team training.

How NemoClaw Maps to OWASP Agentic Top 10

Enterprise security teams evaluating NemoClaw need to map its capabilities against the OWASP Top 10 for Agentic Applications. Here is how the 3 security layers address each risk category:

| OWASP Risk | NemoClaw Component | Mitigation Mechanism |
|---|---|---|
| ASI01: Agent Goal Hijack | Policy Engine | Out-of-process enforcement; agent cannot override its own restrictions |
| ASI02: Tool Misuse | Policy Engine + Sandbox | 4-level policy evaluation; binary allowlists; denied actions logged |
| ASI03: Identity Abuse | Sandbox + Network NS | Credential isolation via Landlock; network policy prevents lateral movement |
| ASI04: Uncontrolled Code | seccomp + Landlock | Blocked syscalls prevent kernel manipulation; filesystem deny-by-default |
| ASI05: Data Exposure | Privacy Router | Sensitive data classification + local routing; PII stripping for cloud requests |
| ASI06: Supply Chain | Policy Engine | Binary allowlists; only approved executables can run in sandbox |
| ASI07: System Prompt Leak | Process Isolation | Agent cannot access policy engine configs or other agents’ instructions |
| ASI08: Overreliance | Audit Trail | Decision logging enables human review of agent outputs |
| ASI09: Monitoring Gaps | Audit Trail + CrowdStrike | Every action logged; Falcon AIDR integration for real-time monitoring |
| ASI10: Multi-Agent Risks | PID + Network NS | Agent-to-agent isolation; agents cannot see or communicate outside policy |

No single product covers every OWASP risk category completely. NemoClaw provides the infrastructure-level controls. Application-level controls (input validation, output filtering, human-in-the-loop workflows) remain the responsibility of the deployment team. The architecture gives you the enforcement boundaries. The governance program gives you the operational discipline to maintain them.
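For gap analysis, it can help to encode the mapping above as data and ask which risk rows lose their mitigation when a component is unavailable, for example a deployment without GPU hardware for the privacy router. This is a minimal sketch. The component identifiers are our own shorthand for the table's second column, not names used by NemoClaw.

```python
# Risk ID -> components the table above lists for that row.
# Identifiers are illustrative shorthand, not NemoClaw configuration keys.
COVERAGE: dict[str, set[str]] = {
    "ASI01": {"policy_engine"},
    "ASI02": {"policy_engine", "sandbox"},
    "ASI03": {"sandbox", "network_ns"},
    "ASI04": {"seccomp", "landlock"},
    "ASI05": {"privacy_router"},
    "ASI06": {"policy_engine"},
    "ASI07": {"process_isolation"},
    "ASI08": {"audit_trail"},
    "ASI09": {"audit_trail", "crowdstrike"},
    "ASI10": {"pid_ns", "network_ns"},
}

ALL_COMPONENTS: set[str] = set().union(*COVERAGE.values())


def degraded_risks(enabled: set[str]) -> list[str]:
    """Return risk IDs whose listed components are not all enabled."""
    return sorted(
        risk for risk, needed in COVERAGE.items() if not needed <= enabled
    )


if __name__ == "__main__":
    # Example: no local GPU (no privacy router) and no Falcon integration.
    enabled = ALL_COMPONENTS - {"privacy_router", "crowdstrike"}
    print(degraded_risks(enabled))
```

The output is a starting list for compensating controls, not a verdict. A row flagged here may still be partly covered by application-level measures.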

Independent Architecture Assessments

Vendor documentation is necessary but not sufficient for enterprise evaluation. Independent security analyses provide the adversarial perspective that vendor docs typically omit. Three third-party assessments are worth reviewing alongside this deep dive:

Katonic AI: “NemoClaw vs. Docker Isolation: What Agent-Specific Sandboxing Actually Adds”

Katonic’s analysis focuses on the delta between Docker’s container isolation model and NemoClaw’s agent-specific sandboxing. The assessment validates that OpenShell’s Landlock + seccomp layering provides meaningfully stronger isolation than Docker’s default seccomp profile, while noting that the operational complexity of managing YAML policies across agent fleets remains a governance challenge.

Stormap.ai: “Inside NemoClaw: The Architecture, Sandbox Model, and Security Tradeoffs”

Stormap’s assessment examines the TLS termination tradeoff in the HTTP CONNECT proxy — specifically, the trust implications of the proxy holding TLS certificates for outbound connections. The analysis concludes that TLS termination is architecturally necessary for L7 enforcement but recommends certificate rotation policies and hardware security module (HSM) integration for production deployments handling sensitive traffic.

Codersera: “Nvidia NemoClaw + OpenClaw: Secure Sandbox Guide for Local vLLM Agents”

Codersera provides a practitioner-focused guide to deploying NemoClaw with vLLM-based local inference. The guide covers sandbox configuration for Ollama and vLLM backends, network policy setup for local model routing, and workarounds for the known Ollama routing issues documented in GitHub issue #385.

We recommend enterprise security teams review at least two independent assessments before committing to any AI agent sandboxing architecture. Vendor documentation describes the intended behavior. Independent analysis describes the observed behavior, the edge cases, and the failure modes.

Enterprise FAQ

Can NemoClaw run without NVIDIA GPU hardware?

The OpenShell sandbox and YAML policy engine work without GPU hardware — they rely on Linux kernel primitives (Landlock, seccomp, namespaces), not GPU compute. The privacy router’s local inference capability requires NVIDIA GPUs to run Nemotron models. Without GPU hardware, all inference routes to cloud models, and the privacy router functions as a logging/audit layer without local routing capability.

Is NemoClaw production-ready for regulated industries?

NemoClaw is in alpha/early-access as of March 2026. The underlying Linux security primitives are production-proven, but the NemoClaw integration layer is new. For regulated industries, we recommend a pilot deployment to validate the architecture against your specific compliance requirements before committing to production rollout.

How does the policy engine handle actions not covered by existing presets?

Deny by default. If an agent attempts an action that does not match any allow rule in the YAML policy, the action is blocked and logged. No implicit permissions exist. Custom policies are written in the same YAML format as presets and can be composed alongside them.
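The deny-by-default behavior and preset composition can be illustrated with a small model. This is a sketch of the semantics only, using simplified two-field rules and invented rule values. It is not the policy engine's actual implementation or rule format.

```python
import logging
from typing import Iterable

logger = logging.getLogger("policy-sketch")

# A rule here is just (binary, destination host); real policies carry more levels.
Rule = tuple[str, str]


def compose(*rule_sets: Iterable[Rule]) -> frozenset[Rule]:
    """Union several allow-rule sets (presets plus custom policies)."""
    merged: set[Rule] = set()
    for rules in rule_sets:
        merged.update(rules)
    return frozenset(merged)


def is_allowed(rules: frozenset[Rule], binary: str, host: str) -> bool:
    """Allow only on an explicit match; anything else is denied and logged."""
    if (binary, host) in rules:
        return True
    logger.warning("denied: binary=%s host=%s (no matching allow rule)", binary, host)
    return False


# Hypothetical preset and custom policy.
SLACK_PRESET = [("/usr/bin/curl", "api.slack.com")]
CUSTOM_POLICY = [("/usr/bin/git", "github.com")]

if __name__ == "__main__":
    policy = compose(SLACK_PRESET, CUSTOM_POLICY)
    print(is_allowed(policy, "/usr/bin/git", "github.com"))     # explicit allow
    print(is_allowed(policy, "/usr/bin/curl", "evil.example"))  # falls through to deny
```

Because composition only ever adds allow rules, an empty policy permits nothing, which is the property that matters for uncovered actions.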

Can an agent modify its own YAML policies?

No. The policy engine runs in a separate process that the agent cannot access, modify, or terminate. This is enforced by PID namespace isolation and Landlock filesystem restrictions. The agent cannot see the policy files, cannot write to the policy directory, and cannot signal the policy engine process. Policy changes require out-of-band access by an authorized administrator.

How does NemoClaw integrate with existing SIEM platforms?

The audit trail produces structured log output that integrates with standard SIEM platforms (Splunk, Elastic, Datadog, Microsoft Sentinel). CrowdStrike Falcon AIDR provides native integration for organizations already using the Falcon platform. JetPatch Enterprise Control Plane adds fleet-level operational visibility for multi-agent deployments.
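Most SIEM platforms ingest newline-delimited JSON, so a structured audit record might look like the output of the sketch below. The field names (`agent_id`, `action`, `decision`, `reason`) are hypothetical and chosen for illustration. Check the actual audit schema before building parsers or detection rules on it.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_event(
    agent_id: str,
    action: str,
    decision: str,
    reason: str,
    now: Optional[datetime] = None,
) -> str:
    """Serialize one audit record as a single JSON line.

    All field names are assumptions for this sketch, not NemoClaw's schema.
    """
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    record = {
        "timestamp": timestamp,
        "agent_id": agent_id,
        "action": action,
        "decision": decision,
        "reason": reason,
    }
    # sort_keys gives a stable field order, which keeps diffs and tests simple.
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    print(
        audit_event(
            agent_id="agent-01",
            action="DELETE api.slack.com/api/admin.users",
            decision="deny",
            reason="no matching allow rule",
        )
    )
```

One JSON object per line, with a UTC timestamp and an explicit decision field, is usually enough for a SIEM to index denials and alert on them.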

What is the difference between static and dynamic policies in OpenShell?

Static policies (filesystem via Landlock, syscall filtering via seccomp) are locked at sandbox creation and cannot be relaxed at runtime — this is a kernel-enforced guarantee. Dynamic policies (network authorization via the HTTP CONNECT proxy and OPA/Rego) can be hot-reloaded without restarting the sandbox. This means security teams can revoke an agent’s API access in real time during an incident without destroying the sandbox environment.
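The contract between the two policy classes can be modeled in a few lines. In OpenShell the static guarantee comes from the kernel. The Python below only mirrors the shape of that contract (immutable at creation versus swappable at runtime), and every class and field name is our own invention.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class StaticPolicy:
    """Fixed at sandbox creation; stands in for Landlock and seccomp rules."""
    readable_paths: tuple[str, ...]
    blocked_syscalls: tuple[str, ...]


class Sandbox:
    """Toy model: static policy is read-only, network policy is hot-reloadable."""

    def __init__(self, static: StaticPolicy, allowed_hosts: Iterable[str]) -> None:
        self._static = static
        self._allowed_hosts = frozenset(allowed_hosts)

    @property
    def static(self) -> StaticPolicy:
        # Exposed without a setter, and the dataclass itself is frozen.
        return self._static

    def reload_network_policy(self, allowed_hosts: Iterable[str]) -> None:
        """Swap the network allowlist without recreating the sandbox."""
        self._allowed_hosts = frozenset(allowed_hosts)

    def may_connect(self, host: str) -> bool:
        return host in self._allowed_hosts


if __name__ == "__main__":
    sandbox = Sandbox(
        StaticPolicy(readable_paths=("/workspace",), blocked_syscalls=("ptrace",)),
        allowed_hosts={"api.slack.com"},
    )
    print(sandbox.may_connect("api.slack.com"))  # allowed before the incident
    sandbox.reload_network_policy(set())          # revoke access during an incident
    print(sandbox.may_connect("api.slack.com"))  # denied, sandbox still running
```

The incident-response case in the answer above corresponds to the `reload_network_policy(set())` call: access is revoked while the sandbox and its static restrictions stay in place.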

Why does Nemotron 3 Super 120B only use 12B active parameters?

Nemotron 3 Super uses a Mixture-of-Experts (MoE) architecture that routes each token through a small subset of its experts rather than through the full network. Only about 12B of the 120B total parameters are active for any given token, which delivers 120B-class reasoning quality at roughly 12B-class compute cost. This directly addresses the “thinking tax” that makes large models impractical for local inference. Combined with the 1M native context window powered by Mamba-2 state-space layers, this architecture enables complex enterprise reasoning tasks (clinical notes, legal documents, financial analysis) to run entirely on local hardware.
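The arithmetic behind that claim is simple enough to check. The sketch below uses the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per token. That rule is an approximation we are assuming here, not a figure from NVIDIA, and it ignores attention and state-space overheads.

```python
def moe_compute_summary(total_params_b: float, active_params_b: float) -> dict[str, float]:
    """Compare per-token compute for an MoE model against a dense model of equal size.

    Uses the rough estimate of 2 FLOPs per active parameter per token.
    Parameters are given in billions, so results are in GFLOPs per token.
    """
    return {
        "active_fraction": active_params_b / total_params_b,
        "moe_gflops_per_token": 2 * active_params_b,
        "dense_gflops_per_token": 2 * total_params_b,
    }


if __name__ == "__main__":
    summary = moe_compute_summary(total_params_b=120, active_params_b=12)
    for key, value in summary.items():
        print(f"{key}: {value}")
```

Note what this does not reduce: all expert weights still have to be resident in memory, so the sparse activation lowers compute per token, not the memory footprint of the model.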

What is the difference between NemoClaw’s policy engine and Docker capabilities?

Docker capabilities are Linux capabilities (e.g., CAP_NET_RAW, CAP_SYS_ADMIN) that Docker grants to or drops from a container process. They are coarse-grained and binary: a capability is either granted or not, and none of them can inspect the contents of an HTTP request. NemoClaw’s policy engine operates at 4 levels (binary, destination, method, path) and evaluates every agent action individually. Docker capabilities cannot express “allow POST to api.slack.com/api/chat.postMessage but deny DELETE to api.slack.com/api/admin.users”; the YAML policy engine can.
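To make the 4-level model concrete, here is a minimal sketch of a matcher that checks binary, destination, method, and path for the Slack example above. The `Rule` structure, the glob-style path matching, and the `/usr/bin/curl` binary are assumptions for illustration. The real engine's rule format and matching semantics may differ.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Iterable


@dataclass(frozen=True)
class Rule:
    """One allow rule covering all four levels (hypothetical structure)."""
    binary: str
    host: str
    method: str
    path: str  # glob pattern, e.g. "/api/chat.*"


def evaluate(rules: Iterable[Rule], binary: str, host: str, method: str, path: str) -> bool:
    """Allow only if a single rule matches at every level; otherwise deny."""
    return any(
        rule.binary == binary
        and rule.host == host
        and rule.method == method.upper()
        and fnmatchcase(path, rule.path)
        for rule in rules
    )


# Hypothetical policy: curl may POST chat messages to Slack, nothing else.
RULES = [Rule("/usr/bin/curl", "api.slack.com", "POST", "/api/chat.postMessage")]

if __name__ == "__main__":
    print(evaluate(RULES, "/usr/bin/curl", "api.slack.com", "POST", "/api/chat.postMessage"))
    print(evaluate(RULES, "/usr/bin/curl", "api.slack.com", "DELETE", "/api/admin.users"))
```

The second call is denied even though the binary and destination match, which is exactly the distinction a capability bit cannot express.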

NemoClaw’s architecture represents a genuine step forward for enterprise AI agent security. The kernel-level primitives are proven. The policy engine’s 4-level evaluation model is more granular than anything available in container-native tooling. The privacy router solves a real compliance gap for regulated industries. The question for most organizations is not whether the architecture is sound — it is whether the implementation and governance around it are configured correctly for their specific deployment.

ManageMyClaw provides NemoClaw implementation, security hardening, and ongoing managed care for enterprise deployments. Our assessment tier delivers architecture review, security gap analysis against OWASP ASI01-ASI10, and a written remediation plan — before any commitment to implementation.

Architecture review. OWASP gap analysis. Written remediation plan. 1-hour executive briefing.
