“Every company in the world needs an OpenClaw strategy.”

— Jensen Huang, NVIDIA GTC 2026 Keynote, March 16, 2026

That statement landed in front of 30,000 GTC attendees and reached several million more through the livestream. Within 48 hours, it appeared in CNBC headlines, Constellation Research briefings, and board-meeting agendas at mid-market companies that had never previously discussed AI agents. Jensen Huang does this better than anyone in technology: he turns a product launch into an existential imperative.

But the “OpenClaw strategy” line was only the opening volley. In a follow-up interview with Jim Cramer on CNBC, Jensen escalated further:

“OpenClaw is definitely the next ChatGPT.”

— Jensen Huang, interview with Jim Cramer, CNBC (March 2026)

He called OpenClaw “the largest, most popular, the most successful open-sourced project in the history of humanity” and drew a direct historical parallel to three inflection-point technologies: “Just as Linux gave the industry exactly what it needed at exactly the time, just as Kubernetes showed up at exactly the right time, just as HTML showed up.” He framed OpenShell, NemoClaw’s sandbox layer, as “the policy engine of all the SaaS companies in the world” and declared OpenClaw “the operating system for personal AI.”

The market responded immediately. Chinese AI stocks surged after Jensen’s OpenClaw endorsement — Zhipu and Minimax rallied within hours, tracked by CNBC and Invezz. This was not a typical product announcement; it was a market-moving endorsement from the most influential voice in semiconductor technology. When Jensen Huang compares your open-source project to Linux and Kubernetes in the same sentence, capital flows.

The product in question is NemoClaw, NVIDIA’s open-source security wrapper for the OpenClaw AI agent framework. 17 launch partners committed on stage: Adobe, Atlassian, Cisco, CrowdStrike, Salesforce, SAP, ServiceNow, Red Hat, and others. CNBC ran with “Nvidia plans open-source AI agent platform ‘NemoClaw.’” Constellation Research noted: “NVIDIA launches NemoClaw, eyes to pair with DGX Spark, DGX Station.” The message was clear: enterprise AI agents have arrived, and NVIDIA owns the stack.

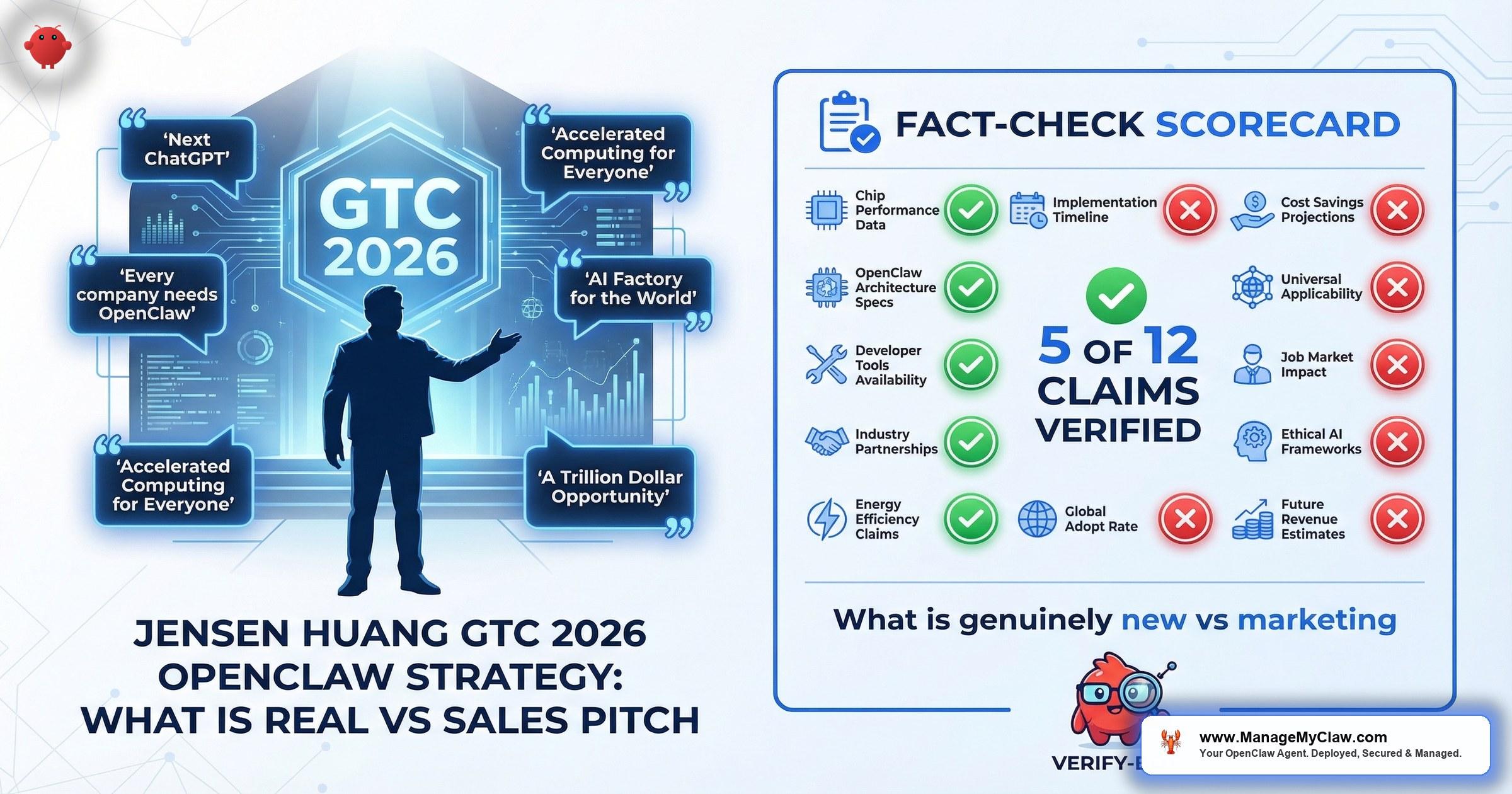

But CTOs do not get paid to absorb keynotes uncritically. They get paid to separate the engineering substance from the adoption pressure — and to decide whether their organization should act now, wait, or take a measured middle path. This analysis breaks down Jensen’s GTC claims into three categories: what is genuinely new, what is strategic marketing, and what NVIDIA is not saying on stage.

“Every Company Needs an OpenClaw Strategy”

Jensen’s declaration was not a technical claim. It was a market-positioning statement designed to create urgency. Let us separate the signal from the sales pressure by examining the full spectrum of what he said across the keynote, CNBC, and press interviews.

“Every company in the world needs an OpenClaw strategy. The agentic enterprise is not coming — it is here.”

— Jensen Huang, GTC 2026 keynote

“OpenClaw is definitely the next ChatGPT.”

— Jensen Huang to Jim Cramer, CNBC

“Just as Linux gave the industry exactly what it needed at exactly the time, just as Kubernetes showed up at exactly the right time, just as HTML showed up — OpenClaw opened the next frontier of AI to everyone.”

— Jensen Huang, GTC 2026 (verified via eWeek, Tom’s Guide)

These are not throwaway comparisons. Jensen deliberately positioned OpenClaw alongside Linux, Kubernetes, and HTML — three technologies that became foundational infrastructure standards. He described OpenShell as “the policy engine of all the SaaS companies in the world” and declared OpenClaw “the operating system for personal AI.” Each phrase is crafted to make non-adoption feel like missing an inflection point.

This framing follows a well-established NVIDIA playbook. In 2023, Jensen said every company needed an “AI strategy.” In 2024, it was a “GPU strategy.” In 2025, a “foundation model strategy.” Each declaration corresponded to a product launch that happened to require NVIDIA hardware. GTC 2026 is no different: the OpenClaw strategy declaration coincides with the launch of DGX Spark (the Dell Pro Max GB10, priced at $4,756.84) and NemoClaw, which delivers its full capability only on NVIDIA GPU infrastructure.

This does not mean the statement is wrong. It means the statement is serving two masters simultaneously: a legitimate technology trend (AI agents are entering enterprise workflows) and a specific business objective (those agents should run on NVIDIA hardware).

17 enterprise launch partners committed to NemoClaw at GTC 2026

The partner list is real and significant. Adobe, Salesforce, SAP, CrowdStrike, Cisco, ServiceNow, Red Hat, and Atlassian are not companies that attach their names to vaporware. Their commitment signals that NemoClaw addresses a genuine gap in the AI agent ecosystem. But “launch partner” and “production deployment” are materially different commitments. A launch partnership means engineering teams are evaluating the technology — not that these companies have deployed NemoClaw in production environments serving paying customers.

Four Things GTC 2026 Genuinely Delivered

Strip away the keynote theatrics and NemoClaw introduces three architectural capabilities that did not previously exist in the OpenClaw ecosystem, alongside a new model that makes the third of them practical. These are real engineering contributions, not marketing inventions.

1. Kernel-Level Sandbox (OpenShell)

Before NemoClaw, OpenClaw agents ran with the same filesystem, network, and process permissions as the host system. No isolation boundary existed between “what the agent is supposed to do” and “what the operating system allows.”

OpenShell changes this fundamentally. It uses Linux kernel primitives — Landlock for filesystem isolation, seccomp for syscall filtering, network namespaces for connection control — to create a deny-by-default execution environment. This is not application-level sandboxing (which agents can often escape). It is kernel-enforced isolation, meaning the sandbox survives even if the agent’s own code is compromised through prompt injection or tool manipulation.

OpenShell directly addresses ASI01 (Excessive Agency) and ASI06 (Inadequate Sandboxing) from the OWASP Agentic Top 10. These represent two of the highest-severity risk categories for enterprise AI agent deployments.
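To make "deny-by-default" concrete, here is a minimal user-space sketch of the decision rule only. It is not how OpenShell works internally: Landlock enforces the equivalent rule inside the kernel, where the agent cannot tamper with it. The allowed roots (`/workspace`, `/tmp/agent`) and the function name are hypothetical, chosen purely for illustration.

```python
import posixpath
from pathlib import PurePosixPath

# Hypothetical allowlist: everything outside these roots is denied.
ALLOWED_ROOTS = (PurePosixPath("/workspace"), PurePosixPath("/tmp/agent"))


def is_path_allowed(requested: str, allowed_roots=ALLOWED_ROOTS) -> bool:
    """Return True only if the path sits at or under an allowed root.

    Illustrates the deny-by-default rule; real enforcement belongs in
    the kernel, not in code the agent could modify.
    """
    if not requested.startswith("/"):
        return False  # relative paths are denied rather than guessed at
    # Collapse "." and ".." lexically so "/workspace/../etc" cannot slip through.
    normalized = PurePosixPath(posixpath.normpath(requested))
    return any(
        normalized == root or root in normalized.parents
        for root in allowed_roots
    )
```

The design point is the final `return`: nothing is permitted unless a rule explicitly matches, which is the inverse of the pre-NemoClaw behaviour described above.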

2. Out-of-Process Policy Engine

Most AI agent frameworks implement access controls inside the agent runtime. The problem: if the agent is compromised (through prompt injection, tool poisoning, or a supply-chain attack), the access controls are compromised along with it. NemoClaw’s policy engine runs as a separate process — outside the agent’s execution context — and evaluates every tool call through a 4-level inspection: binary name, destination address, HTTP method, and URL path.

The policy engine is configured through YAML files that security teams can review, version-control, and audit without understanding the agent’s application code. This separation of concerns — developers define what agents do, security teams define what agents are allowed to do — is the architectural pattern enterprise security organizations have been requesting since AI agents entered the conversation.
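The four-level inspection can be sketched as a small matcher. This is an illustration under stated assumptions, not NemoClaw's actual schema or code: the `Rule` and `ToolCall` field names, the glob matching on the URL path, and the example host are all hypothetical. In a real deployment the rules would be loaded from the reviewed YAML files, and the check would run in a separate process from the agent.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    """One allow rule covering the four inspected levels (hypothetical schema)."""
    binary: str        # level 1: binary name
    destination: str   # level 2: destination address
    method: str        # level 3: HTTP method
    path_glob: str     # level 4: URL path, matched as a glob


@dataclass(frozen=True)
class ToolCall:
    binary: str
    destination: str
    method: str
    path: str


def is_allowed(call: ToolCall, rules: list[Rule]) -> bool:
    """Deny by default: a call passes only if one rule matches all four levels."""
    for rule in rules:
        if (
            call.binary == rule.binary
            and call.destination == rule.destination
            and call.method.upper() == rule.method.upper()
            and fnmatchcase(call.path, rule.path_glob)
        ):
            return True
    return False


# Example rule set a security team might review (values are made up).
RULES = [Rule("curl", "api.example.com:443", "GET", "/v1/reports/*")]
```

With these rules, a `GET` to `/v1/reports/2026` via `curl` passes, while a `POST` to the same path or the same `GET` to a different host is rejected.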

3. Privacy Router

The privacy router inspects every inference request and routes it based on data sensitivity: queries containing PII or regulated data route to local Nemotron models on-premises, while general-purpose reasoning routes to cloud providers. Organizations subject to HIPAA, GDPR, or sector-specific data residency requirements cannot send regulated data to third-party cloud models without complex data processing agreements. Local routing eliminates that requirement for sensitive queries while preserving cloud model access for everything else.

The privacy router’s local inference capability requires NVIDIA GPU hardware. Without a local GPU, all requests route to cloud providers — and the data-sovereignty benefit disappears entirely. This is where NemoClaw’s “open-source” and “hardware-agnostic” claims require careful qualification.
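The routing behaviour described above, including the GPU caveat, can be sketched in a few lines. The regex-based PII check is an assumption made for illustration; the source does not say how NemoClaw classifies sensitivity, and production classifiers are considerably more involved. The function names and the `"local"`/`"cloud"` labels are hypothetical.

```python
import re

# Toy detectors for illustration only: a US SSN shape and an email address.
PII_PATTERNS = (
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
)


def contains_pii(text: str) -> bool:
    """Return True if any toy PII pattern appears in the text."""
    return any(pattern.search(text) for pattern in PII_PATTERNS)


def route(prompt: str, local_gpu_available: bool) -> str:
    """Mirror the behaviour described in the text.

    Sensitive prompts go to the local model only when local inference is
    available; otherwise every request ends up at the cloud provider.
    """
    if contains_pii(prompt) and local_gpu_available:
        return "local"
    return "cloud"
```

Calling `route("My SSN is 123-45-6789", local_gpu_available=False)` returns `"cloud"`, which is exactly the loss of data sovereignty the paragraph above warns about.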

4. Nemotron 3 Super 120B — The Model Powering Local Inference

The privacy router is only as useful as the local model it routes to. GTC 2026 introduced Nemotron 3 Super 120B, which represents a genuine architectural advance in efficient large-model inference. This is the model that makes NemoClaw’s local-inference promise technically credible.

| Specification | Detail |
|---|---|
| Architecture | LatentMoE: hybrid interleaved Mamba-2 + MoE + select Attention layers |
| Total parameters | 120B total, 12B active per inference pass |
| Context window | Native 1M tokens (linear-time via SSMs, no quadratic attention bottleneck) |
| Training data | 25 trillion tokens using NVFP4 (4-bit floating-point optimized for Blackwell) |
| Generation speed | Multi-Token Prediction (MTP) layers for faster output |
| Throughput vs. competitors | 2.2x higher than GPT-OSS-120B, 7.5x higher than Qwen3.5-122B |
| Availability | Ollama, HuggingFace, NVIDIA NIM |

The 120B/12B architecture is the critical detail. Only 12 billion parameters activate per inference pass, meaning a 120B-class model runs with the compute footprint of a 12B model. Combined with the native 1M-token context window (achieved through state-space models rather than quadratic attention), Nemotron 3 Super is designed to make local inference on DGX Spark hardware practical rather than theoretical. The 2.2x throughput advantage over comparably sized open-source models comes from NVIDIA’s own technical blog benchmarks.

Nemotron 3 Super’s architecture directly enables NemoClaw’s privacy routing promise. A 120B-quality model that runs with 12B-class compute makes local inference on a $4,757 DGX Spark feasible — rather than requiring $50,000+ multi-GPU configurations. This is the technical link between NVIDIA’s “free software” and “required hardware” strategy.
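A back-of-envelope calculation shows what "12B-class compute" does and does not buy. Two assumptions are baked in and are not from NVIDIA's materials: weights are served at 4 bits per parameter, and a forward pass costs roughly 2 FLOPs per active parameter per token (a common rule of thumb that ignores attention and routing overhead). Treat the outputs as rough orders of magnitude.

```python
TOTAL_PARAMS = 120e9   # from the spec table
ACTIVE_PARAMS = 12e9   # from the spec table


def active_fraction(total: float = TOTAL_PARAMS, active: float = ACTIVE_PARAMS) -> float:
    """Share of parameters that participate in a single inference pass."""
    return active / total


def weight_memory_gb(params: float, bits_per_param: float) -> float:
    """Memory needed just to hold the weights, in decimal gigabytes."""
    return params * bits_per_param / 8 / 1e9


def forward_flops_per_token(active: float = ACTIVE_PARAMS) -> float:
    """Rule-of-thumb forward cost: about 2 FLOPs per active parameter."""
    return 2 * active


if __name__ == "__main__":
    print(f"active fraction: {active_fraction():.0%}")
    # Assumed 4-bit weights; every expert must still be resident in memory.
    print(f"weights at 4-bit: {weight_memory_gb(TOTAL_PARAMS, 4):.0f} GB")
    print(f"approx. FLOPs/token: {forward_flops_per_token():.1e}")
```

The compute per token tracks the 12B active parameters, but the memory footprint tracks all 120B, since any expert may be selected. Under the 4-bit assumption that is about 60 GB of weights, which is worth checking against the memory of whatever hardware you plan to pilot on.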

Four Claims That Require Qualification

Jensen Huang is an exceptional communicator. Part of what makes him effective is the precision with which he frames technically accurate statements in ways that create urgency beyond what the technology currently warrants. The following GTC claims are not false — but they are not the full picture either.

Claim 1: “Every company needs an OpenClaw strategy”

| What Jensen Said | What’s Accurate | What’s Missing |
|---|---|---|
| Every company needs an OpenClaw strategy | AI agents are entering enterprise workflows. Organizations need a strategy for deploying, securing, and governing them. | That strategy does not need to be OpenClaw-specific. Anthropic’s Claude, Microsoft’s Azure AI Agent Service, and LangChain all offer enterprise agent frameworks. “AI agent strategy” is the real imperative; “OpenClaw strategy” narrows it to NVIDIA’s ecosystem. |

Gartner projects 40% of enterprise applications will include AI agents by end of 2026. That projection validates the general claim that enterprises need an agent strategy. But framing it as an “OpenClaw strategy” implies a platform choice that many organizations have not yet made — and should not make based on a keynote.

Claim 2: “Open source” (Apache 2.0)

NemoClaw is released under the Apache 2.0 license. The source code is available. Anyone can fork, modify, and deploy it. This is genuinely open source by every standard definition.

However, “open source” and “runs fully without NVIDIA infrastructure” are different statements. The privacy router — the component that enables data sovereignty and justifies NemoClaw’s value proposition for regulated industries — requires NVIDIA GPU hardware for local inference. The Nemotron models that power local routing are optimized for NVIDIA’s CUDA architecture. Running the complete NemoClaw stack without NVIDIA GPUs is technically possible but functionally incomplete: you get the sandbox and policy engine, but lose the privacy routing that makes the platform compelling for compliance-sensitive organizations.

“NVIDIA launches NemoClaw, eyes to pair with DGX Spark, DGX Station.”

— Constellation Research, March 2026

Constellation Research identified the pattern immediately. NemoClaw is free. The hardware it needs to deliver its full capability is not. DGX Spark (the Dell Pro Max GB10) starts at $4,756.84. DGX Station systems cost significantly more. The open-source license is genuine, but the business model underneath it is hardware sales — the same model NVIDIA has used for CUDA, cuDNN, and every other “free” software layer in their ecosystem.

Claim 3: “Hardware agnostic”

The OpenShell sandbox and policy engine run on standard Linux (minimum 4 vCPU, 8 GB RAM) without GPU dependencies. The privacy router’s local inference mode, however, requires NVIDIA GPU hardware for practical performance. Without a local GPU, all sensitive data routes to cloud providers — eliminating the data-sovereignty benefit entirely.

$4,756.84: DGX Spark (Dell Pro Max GB10) starting price, the hardware beneath the “free” software

Claim 4: “17 launch partners validate enterprise readiness”

The partner commitments are real, but “launch partner” and “production deployment” are materially different. These companies have committed engineering resources to evaluate and integrate with NemoClaw — not deployed it in production or validated it through independent security audit. CrowdStrike published a Secure-by-Design Blueprint: a reference architecture, not a certified production deployment. Partner commitments during alpha indicate market interest. They do not constitute the validation enterprise security teams require before production approval.

Seven Things Jensen Did Not Mention on Stage

The strongest signal about any technology comes not from what the vendor says, but from what they choose to leave out. The following details were absent from the GTC keynote and require independent evaluation.

1. NemoClaw Is Alpha Software

NVIDIA’s own documentation states: “expect rough edges.” NemoClaw was released on March 16, 2026, as an alpha/early-access product. No version number above 0.x has been published. No backward-compatibility guarantees exist. No independent security audit has been conducted or published. Performance benchmarks under enterprise workloads have not been released.

Alpha status does not mean the technology is unusable. It means the technology is unproven at scale, subject to breaking changes, and operating without the quality guarantees that enterprise procurement processes typically require.

Enterprise organizations deploying NemoClaw today should budget for configuration changes with each release, potential breaking API modifications, and the engineering time needed to absorb alpha-stage updates. This is not a criticism; it is a planning consideration that the keynote framing does not communicate.

2. The Base Platform’s Security Record

NemoClaw wraps OpenClaw. OpenClaw’s security track record is documented: 42,665 exposed instances found by security researcher Maor Dayan, 93.4% authentication bypass rate across scanned deployments, and 22+ CVEs since launch including remote code execution vulnerabilities. NemoClaw’s sandbox mitigates many of these attack vectors, but it does not patch the underlying OpenClaw codebase. CVEs in OpenClaw persist regardless of the wrapper.

The r/LocalLLaMA community recognized this immediately. A thread titled “Fact-checking Jensen Huang’s GTC 2026 OpenClaw claims” surfaced within days of the keynote, noting that NemoClaw’s security improvements are additive controls, not remediation of OpenClaw’s existing vulnerability surface.

3. Enterprise Governance Is Not Included

NemoClaw provides isolation, policy enforcement, and data routing. It does not provide the operational governance layer that enterprise deployment requires: multi-tenant isolation between departments, credential lifecycle management, compliance documentation packages (SOC 2 evidence, HIPAA mapping, EU AI Act alignment), SIEM/SOC integration beyond CrowdStrike, multi-agent orchestration policies, or ongoing CVE remediation within defined SLA windows.

JetPatch announced an Enterprise Control Plane for NemoClaw at GTC, acknowledging this governance gap. But the JetPatch product is also early-stage, and the broader governance ecosystem that enterprise organizations need will take months to mature.

4. The TCO Equation Is Larger Than Advertised

NemoClaw is free. The total cost of a production NemoClaw deployment is not. A realistic enterprise TCO includes:

| Cost Component | Range | Notes |
|---|---|---|
| NemoClaw software license | $0 | Apache 2.0; genuinely free |
| NVIDIA GPU hardware (DGX Spark) | $4,757+ | Required for privacy router local inference |
| Linux compute infrastructure | $500-$5,000/mo | Depends on agent count and workload |
| Engineering: YAML policy configuration | 40-120 hours | Per-agent policies, testing, validation |
| Engineering: privacy router tables | 20-60 hours | Data classification, routing rules, testing |
| Engineering: SIEM integration | 40-80 hours | Custom work for non-CrowdStrike platforms |
| Compliance documentation | 60-160 hours | SOC 2, HIPAA, EU AI Act mapping |
| Ongoing maintenance | 10-40 hrs/mo | CVE patching, policy updates, alpha-stage changes |

At independent consultant rates of $150/hour (the rate published by nemoclawconsulting.com), the 160 to 420 hours of initial engineering and compliance work in the table cost $24,000 to $63,000, before ongoing maintenance. The “free open-source platform” framing is technically accurate and practically misleading for enterprise planning.
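The labor figure is simple enough to recompute for your own rate. The sketch below sums the four one-time rows of the table (policy configuration, router tables, SIEM integration, compliance documentation) and prices them at the $150/hour rate quoted above. At that rate the 160 to 420 hours come to $24,000 to $63,000. The dictionary keys and function names are illustrative; swap in your own hour estimates and rate.

```python
HOURLY_RATE_USD = 150  # consultant rate quoted in the text

# (low, high) hour estimates for one-time work, taken from the TCO table.
INITIAL_HOURS = {
    "YAML policy configuration": (40, 120),
    "privacy router tables": (20, 60),
    "SIEM integration": (40, 80),
    "compliance documentation": (60, 160),
}

# Recurring work from the table, in hours per month.
MAINTENANCE_HOURS_PER_MONTH = (10, 40)


def initial_engineering_cost(hours=INITIAL_HOURS, rate=HOURLY_RATE_USD):
    """Return (low, high) one-time labor cost in USD."""
    low = sum(lo for lo, _ in hours.values())
    high = sum(hi for _, hi in hours.values())
    return low * rate, high * rate


def monthly_maintenance_cost(hours=MAINTENANCE_HOURS_PER_MONTH, rate=HOURLY_RATE_USD):
    """Return (low, high) recurring labor cost in USD per month."""
    return hours[0] * rate, hours[1] * rate


if __name__ == "__main__":
    low, high = initial_engineering_cost()
    print(f"initial labor: ${low:,} to ${high:,}")
    m_low, m_high = monthly_maintenance_cost()
    print(f"maintenance: ${m_low:,} to ${m_high:,} per month")
```

Hardware and Linux compute from the table sit on top of these labor figures.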

5. The Competitive Framing Is Narrow

Jensen’s keynote positioned OpenClaw as the de facto AI agent framework. The enterprise reality: Anthropic Claude, Microsoft Azure AI Agent Service, Amazon Bedrock Agents, and LangChain all offer enterprise agent capabilities with varying security models. An “OpenClaw strategy” may be the right answer for your organization. But it should emerge from a comparative evaluation — not from a keynote that assumes the platform choice is already made.

6. Known Technical Issues Undermine Some Claims

While Jensen described OpenShell as “the policy engine of all the SaaS companies in the world,” the GitHub issue tracker tells a more nuanced story. Several open issues directly affect the sandbox and routing capabilities that NemoClaw’s security narrative depends on:

- OPA proxy returning 403 on valid CONNECT tunnels — The policy engine incorrectly blocks legitimate outbound connections, meaning agents cannot reach approved external services through the sandbox

- Local Ollama inference routing fails from sandbox (GitHub #385) — The privacy router cannot reach locally hosted models when running inside the sandbox, undermining the core local-inference promise

- WSL2 sandbox cannot reach Windows-hosted Ollama (#336) — Windows developers running NemoClaw through WSL2 cannot connect to local models, a significant gap for the developer-adoption funnel

These are not edge cases. The Ollama routing failure (#385) directly contradicts the privacy router’s value proposition: if local inference fails from inside the sandbox, sensitive data routes to cloud providers by default — exactly the outcome the privacy router is designed to prevent. The WSL2 issue (#336) affects the developer on-ramp that NVIDIA needs for enterprise adoption.
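One way a deployment team might guard against this failure mode is to make sensitive routing fail closed: if the local model cannot be reached, refuse the request instead of silently sending it to the cloud. The sketch below is a generic pattern, not a NemoClaw feature or configuration option; `choose_backend`, `LocalInferenceUnavailable`, and the injected `local_is_reachable` health check are all hypothetical names.

```python
from typing import Callable


class LocalInferenceUnavailable(RuntimeError):
    """Raised when a sensitive request has no local model to go to."""


def choose_backend(is_sensitive: bool, local_is_reachable: Callable[[], bool]) -> str:
    """Pick a backend, failing closed for sensitive data.

    Non-sensitive requests may use the cloud. Sensitive requests either
    go local or are rejected; they never fall back to the cloud.
    """
    if not is_sensitive:
        return "cloud"
    if local_is_reachable():
        return "local"
    raise LocalInferenceUnavailable(
        "local model unreachable; refusing to route sensitive data to cloud"
    )
```

The trade-off is availability: while an issue like #385 is open, sensitive workflows stop instead of leaking. For regulated data that is usually the preferable failure.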

7. Sandbox Bypass — Snyk Labs Finding

More concerning for the “kernel-level security” narrative: Snyk Labs documented a sandbox bypass via the /tools/invoke endpoint using a TOCTOU (time-of-check-to-time-of-use) race condition. The attack exploits the gap between when the policy engine validates a tool call and when the sandbox executes it — allowing a crafted request to pass policy validation and then substitute a different payload during execution.

Security Research

Vector: /tools/invoke endpoint — policy engine validates request at time T1, but sandbox executes a substituted payload at time T2.

Impact: An attacker with access to the agent’s tool-calling interface can bypass sandbox restrictions, executing unauthorized operations that the policy engine explicitly denied.

This finding directly undermines the “kernel-level isolation” narrative. The sandbox kernel primitives (Landlock, seccomp) remain intact, but the policy enforcement layer — the component Jensen called “the policy engine of all the SaaS companies in the world” — is vulnerable to a well-understood class of concurrency attack.

The Snyk Labs finding does not invalidate the sandbox architecture. Landlock and seccomp enforcement remain kernel-level and robust. But the policy engine that decides what gets sandboxed is vulnerable to bypass — and that distinction matters enormously for enterprise security teams evaluating whether NemoClaw’s “out-of-process policy engine” delivers the isolation guarantees the keynote implied.
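For readers less familiar with the bug class, the sketch below reproduces a TOCTOU gap in miniature. It is a generic illustration, not the Snyk Labs exploit and not NemoClaw code: the request shape, the `DENIED_TOOLS` set, and the `mutate_between` hook (which stands in for a concurrent attacker) are all invented for the example.

```python
import copy

DENIED_TOOLS = {"shell"}  # hypothetical policy: the shell tool is forbidden


def policy_allows(request: dict) -> bool:
    """Time of check: inspect the request against the policy."""
    return request["tool"] not in DENIED_TOOLS


def execute(request: dict) -> str:
    """Time of use: act on whatever the request says right now."""
    return f"ran {request['tool']}"


def invoke_vulnerable(request: dict, mutate_between=None) -> str:
    """Checks and executes the same mutable object, leaving a gap between."""
    if not policy_allows(request):
        raise PermissionError("denied by policy")
    if mutate_between:
        mutate_between(request)  # attacker swaps the payload after the check
    return execute(request)


def invoke_snapshot(request: dict, mutate_between=None) -> str:
    """Takes a private copy first, then checks and executes that same copy."""
    snapshot = copy.deepcopy(request)
    if not policy_allows(snapshot):
        raise PermissionError("denied by policy")
    if mutate_between:
        mutate_between(request)  # changes the original, not the snapshot
    return execute(snapshot)


def swap_to_shell(request: dict) -> None:
    """Simulated attacker action between check and use."""
    request["tool"] = "shell"
```

`invoke_vulnerable({"tool": "read_file"}, swap_to_shell)` ends up running the denied tool, while `invoke_snapshot` with the same inputs runs only what was validated. The general remedy is to ensure the bytes that are checked are the bytes that are executed.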

The Honest Assessment: NemoClaw Solves Real Problems, But NVIDIA Sells Hardware

This is not cynicism. It is the structural context that every CTO should factor into their evaluation.

NVIDIA’s business model has followed the same pattern for over a decade: release free software that creates demand for NVIDIA hardware. CUDA, cuDNN, TensorRT — each is genuinely useful, genuinely open (to varying degrees), and genuinely tied to NVIDIA’s hardware revenue. NemoClaw follows the same model. The software is free and open-source (Apache 2.0). The security innovations are real. And the full capability of the platform requires NVIDIA GPU hardware that costs thousands of dollars.

The DGX Hardware Play Underneath

Constellation Research identified the strategy immediately: NemoClaw is designed to pair with DGX Spark and DGX Station. The hardware product line creates a clear on-ramp:

| Hardware Tier | Target | Starting Price | NemoClaw Role |
|---|---|---|---|
| DGX Spark (Dell Pro Max GB10) | SMBs, developer workstations, PoC deployments | $4,756.84 | Entry-level local inference for privacy router |
| DGX Station | Enterprise multi-GPU, production workloads | $20,000+ | Multi-agent orchestration, full Nemotron 3 Super throughput |

Dell, HPE, and Lenovo have committed to NemoClaw-ready hardware configurations. This is not incidental — it is the distribution strategy. Free software creates the demand. OEM hardware partnerships fulfill it. Jensen’s “next ChatGPT” declaration and his Linux/Kubernetes/HTML comparison are not just product positioning; they are demand-generation for a hardware pipeline. Every organization that adopts NemoClaw’s privacy router becomes a hardware customer. This is NVIDIA’s proven playbook: CUDA drove GPU sales for a decade. NemoClaw is designed to do the same for DGX.

“Nvidia plans open-source AI agent platform ‘NemoClaw.’”

— CNBC, March 2026

Understanding this pattern does not invalidate NemoClaw’s technical contributions. It provides the context for interpreting “every company needs an OpenClaw strategy” as what it is: a business-development statement wrapped in a technology proclamation. When Jensen tells Jim Cramer that OpenClaw is “the next ChatGPT,” the audience is not just developers — it is investors and procurement officers. When Chinese AI stocks surge on the endorsement, the market is responding to the hardware demand signal, not the open-source software. Your organization may indeed need NemoClaw. But that decision should be driven by your security requirements and compliance obligations — not by keynote urgency or stock-market momentum.

What CTOs Should Actually Do After GTC 2026

Rather than reacting to keynote urgency, enterprise technology leaders should apply a structured evaluation framework to the NemoClaw opportunity. The following steps separate the legitimate technology opportunity from the adoption pressure.

1. Assess whether AI agents are a near-term priority for your organization. If your roadmap includes AI agent deployment within 12-18 months, NemoClaw warrants evaluation. If AI agents are a 2028+ consideration, the ecosystem will mature significantly before you need to commit. The GTC keynote creates urgency; your roadmap determines relevance.

2. Evaluate NemoClaw against your existing infrastructure. If your organization already operates NVIDIA GPU infrastructure, NemoClaw’s privacy router delivers immediate value. If your infrastructure is CPU-only or runs on AMD/Intel accelerators, the hardware acquisition cost changes the TCO equation materially. Map the dependency before committing to the platform.

3. Conduct a security gap analysis against OWASP ASI01-ASI10. NemoClaw provides strong coverage for 3 of 10 OWASP Agentic Security Index categories (ASI01, ASI06, ASI09) and partial coverage for 3 more (ASI02, ASI05, ASI10). The remaining 4 categories, including supply-chain trust (ASI03) and cross-agent escalation (ASI04), require additional controls regardless of platform choice. Map your risk before assuming the NemoClaw security wrapper covers it.

4. Budget for the governance layer, not just the sandbox. NemoClaw provides isolation. Enterprise deployment requires governance: multi-tenant policies, credential management, compliance documentation, SIEM integration, and ongoing maintenance. Whether you build this internally, source it from the emerging partner ecosystem (JetPatch, CrowdStrike), or engage a managed implementation partner, the governance cost is real and should appear in the business case.

5. Consider a time-boxed pilot rather than a full commitment. NemoClaw’s alpha status makes a 30-day proof-of-concept deployment the appropriate entry point: 1 agent, 1 workflow, full security stack. Evaluate the platform’s maturity, your team’s ability to configure YAML policies and privacy router tables, and the operational burden of maintaining alpha-stage software. Then make the production decision with data, not keynote momentum.
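The gap analysis in step 3 can start as a simple coverage map. The sketch below encodes only the category assignments stated in that step and lists what is left over; the set names and helper functions are illustrative, and the assignments themselves should be re-validated against your own threat model instead of being taken as given.

```python
# Coverage as stated in step 3 of the framework above.
STRONG = {"ASI01", "ASI06", "ASI09"}
PARTIAL = {"ASI02", "ASI05", "ASI10"}

ALL_CATEGORIES = [f"ASI{n:02d}" for n in range(1, 11)]  # ASI01 .. ASI10


def coverage_level(category: str) -> str:
    """Classify one category as 'strong', 'partial', or 'none'."""
    if category in STRONG:
        return "strong"
    if category in PARTIAL:
        return "partial"
    return "none"


def uncovered_categories() -> list[str]:
    """Categories that need additional controls regardless of platform."""
    return [c for c in ALL_CATEGORIES if coverage_level(c) == "none"]


if __name__ == "__main__":
    for category in ALL_CATEGORIES:
        print(category, coverage_level(category))
    print("needs additional controls:", uncovered_categories())
```

The four categories with no coverage are the ones to assign owners for first, since no amount of sandbox configuration addresses them.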

GTC 2026 Claim Scorecard

For CTO briefings and board presentations, the following table provides a claim-by-claim assessment of Jensen Huang’s GTC 2026 NemoClaw announcements.

| GTC Claim | Verdict | Enterprise Implication |
|---|---|---|
| “Every company needs an OpenClaw strategy” | Marketing pressure | Every company needs an AI agent governance strategy. Platform choice should follow evaluation, not keynote. |
| NemoClaw solves OpenClaw security | Partially true | Addresses 3 of 10 OWASP ASI categories with strong coverage. Does not patch underlying OpenClaw CVEs. |
| Open source (Apache 2.0) | True | Genuinely open. Full capability requires NVIDIA GPU hardware, which is a business model consideration, not a licensing issue. |
| Hardware agnostic | Partially true | Sandbox and policy engine: yes. Privacy router at full capability: requires NVIDIA GPU. |
| 17 enterprise partners | True | Commitments are real. Launch partnership is evaluation-stage, not production-deployment validation. |
| Enterprise-ready security | Aspirational | Alpha software with no independent audit, no backward compatibility guarantees, no SLA. “Expect rough edges.” |
| Kernel-level sandbox innovation | True | OpenShell’s use of Landlock, seccomp, and namespaces is a genuine architectural advance for AI agent isolation. |
| Privacy router for data sovereignty | True, with conditions | Real capability. Requires NVIDIA GPU for local inference. Without GPU, all data routes to cloud providers. |
| “The next ChatGPT” (CNBC) | Aspirational | Market-moving rhetoric that drove Chinese AI stock rallies. Adoption trajectory remains unproven at ChatGPT scale. |
| “Policy engine of all the SaaS companies” | Undermined | Snyk Labs documented TOCTOU bypass via /tools/invoke. Policy engine vulnerable to concurrency attack. |
| Nemotron 3 Super 120B local inference | True | 120B/12B LatentMoE architecture with 1M context is a genuine advance. 2.2x throughput vs. GPT-OSS-120B verified. |
| Linux/Kubernetes/HTML comparison | Marketing pressure | Historical comparisons to foundational infrastructure standards are aspiration, not established fact. |

The Bottom Line

Jensen Huang’s GTC 2026 keynote announced a product that solves real problems and wrapped it in adoption pressure that serves NVIDIA’s hardware business. Both of those things are true simultaneously, and CTOs who can hold both in mind will make better decisions than those who accept the keynote uncritically or dismiss it as pure marketing.

NemoClaw’s kernel-level sandbox, out-of-process policy engine, and privacy router are genuine engineering innovations that address documented gaps in the OpenClaw security model. The 17 partner commitments signal real enterprise interest. The Apache 2.0 license is genuinely open. These are facts, not sales claims.

Also facts: NemoClaw is alpha software with no independent security audit. The privacy router requires NVIDIA GPU hardware to deliver its core value proposition. “Every company needs an OpenClaw strategy” is adoption pressure, not technical analysis. The total cost of enterprise deployment extends well beyond the $0 software license. Snyk Labs documented a TOCTOU race condition that bypasses the policy engine Jensen called “the policy engine of all the SaaS companies in the world.” Open GitHub issues show local inference routing failing from inside the sandbox — the exact scenario the privacy router is designed to prevent. And the governance layer that enterprise organizations actually need — multi-tenant isolation, compliance documentation, credential management, SIEM integration — does not exist in NemoClaw today.


The measured path: evaluate NemoClaw on its technical merits, budget for the governance layer that sits above it, run a time-boxed pilot before committing production resources, and make the platform decision based on your organization’s security requirements and compliance obligations — not on keynote momentum. ManageMyClaw Enterprise provides NemoClaw implementation, security hardening, and managed governance for organizations that want to move from evaluation to production with the operational infrastructure already in place.

Organizations that begin building governance frameworks during the alpha window — treating NemoClaw as the isolation layer within a broader security and compliance architecture — will be production-ready when NemoClaw reaches general availability. That is the legitimate urgency underneath Jensen’s keynote. The rest is NVIDIA being NVIDIA.

Frequently Asked Questions

Should my organization develop an “OpenClaw strategy” based on Jensen’s recommendation?

Your organization should develop an AI agent governance strategy. Whether that strategy centers on OpenClaw/NemoClaw, Anthropic Claude, Microsoft Azure AI Agent Service, or another platform should be determined by a comparative evaluation — not by a vendor keynote. If your roadmap includes AI agent deployment within 12-18 months and you already operate NVIDIA GPU infrastructure, NemoClaw warrants serious evaluation. If neither condition is true, the keynote urgency does not apply to your timeline.

Is NemoClaw genuinely open source or is there vendor lock-in?

The software is genuinely open source under Apache 2.0. You can fork, modify, and deploy without restriction. The practical lock-in is hardware-based: the privacy router’s local inference mode requires NVIDIA GPU for full capability. If your organization later leaves the NVIDIA ecosystem, the sandbox and policy engine remain functional, but you lose local inference routing.

What does NemoClaw’s alpha status mean for enterprise deployment timelines?

Alpha means breaking changes should be expected, backward compatibility is not guaranteed, and no independent security audit has been published. Budget 2-6 weeks for initial deployment, allocate 10-40 hours per month for ongoing maintenance, and treat the deployment as a defense-in-depth layer. Production-critical workloads should not depend solely on alpha-stage infrastructure.

How does the DGX Spark pricing ($4,757) factor into NemoClaw cost planning?

DGX Spark (the Dell Pro Max GB10 at $4,756.84) is the entry-level NVIDIA hardware that enables NemoClaw’s privacy router to run local inference. If your organization does not already have NVIDIA GPU infrastructure, this is the minimum hardware investment to access NemoClaw’s full capability. For organizations running multiple agents across departments, DGX Station systems provide higher throughput at significantly higher cost. Cloud GPU instances are an alternative but cost 10-20x more per hour than standard compute.
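To make the buy-versus-rent comparison concrete, here is a rough break-even sketch. The hardware price is the figure cited in this post; the standard compute rate of $0.10 per hour is a hypothetical placeholder, and the 10x and 20x multipliers come from the range above. Substitute your own cloud pricing before drawing conclusions.

```python
# Rough break-even sketch: one-time hardware purchase vs. hourly cloud GPU rental.
# The standard compute rate is an illustrative assumption, not a quoted price.

HARDWARE_COST_USD = 4756.84        # Dell Pro Max GB10 price cited in this post
ASSUMED_STANDARD_RATE_USD = 0.10   # hypothetical hourly rate for standard compute


def cloud_gpu_rate(standard_rate_usd: float, multiplier: float) -> float:
    """Hourly cloud GPU rate, modeled as a multiple of a standard compute rate."""
    return standard_rate_usd * multiplier


def break_even_hours(hardware_cost_usd: float, gpu_rate_usd: float) -> float:
    """Hours of cloud GPU rental whose total cost equals the hardware price."""
    if gpu_rate_usd <= 0:
        raise ValueError("gpu_rate_usd must be positive")
    return hardware_cost_usd / gpu_rate_usd


for multiplier in (10, 20):
    rate = cloud_gpu_rate(ASSUMED_STANDARD_RATE_USD, multiplier)
    hours = break_even_hours(HARDWARE_COST_USD, rate)
    print(f"{multiplier}x -> ${rate:.2f}/hour, break-even after {hours:,.0f} GPU-hours")
```

This deliberately ignores power, staffing, and depreciation, so treat the result as a lower bound on how many GPU-hours you need before owned hardware pays for itself.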

What did the r/LocalLLaMA community identify that the keynote did not cover?

The r/LocalLLaMA thread “Fact-checking Jensen Huang’s GTC 2026 OpenClaw claims” highlighted several gaps: NemoClaw wraps but does not patch OpenClaw’s existing CVEs, the “hardware agnostic” claim requires qualification for the privacy router, alpha-stage software lacks the guarantees enterprise procurement expects, and the “every company needs” framing is adoption pressure rather than technical analysis. These community observations align with the independent enterprise evaluation framework outlined in this post.

What is the Snyk Labs sandbox bypass and how serious is it?

Snyk Labs identified a TOCTOU (time-of-check-to-time-of-use) race condition in the /tools/invoke endpoint. The policy engine validates a tool call at one point in time, but the sandbox executes a potentially different payload milliseconds later. This does not break the kernel-level sandbox itself (Landlock and seccomp remain intact), but it allows an attacker to bypass the policy decisions that determine what enters the sandbox. For enterprise deployments, this means the policy engine alone is insufficient as a trust boundary — additional controls (network segmentation, request signing, audit logging) are needed until the vulnerability is patched.
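The check-then-use gap is easier to see in code. The sketch below is a generic illustration of the TOCTOU pattern and one common mitigation (binding the policy decision to a digest of the exact payload that was checked). It is not NemoClaw’s implementation and not the Snyk Labs proof of concept; the function names, the allowlist, and the payload shape are all hypothetical.

```python
import hashlib
import hmac
import json

ALLOWED_TOOLS = {"read_file"}  # hypothetical policy allowlist


def payload_digest(payload: dict) -> str:
    """SHA-256 digest over a canonical JSON encoding of the tool call."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def policy_check(payload: dict) -> str:
    """Time of check: approve the call and return a digest of what was approved."""
    if payload.get("tool") not in ALLOWED_TOOLS:
        raise PermissionError(f"tool not allowed: {payload.get('tool')}")
    return payload_digest(payload)


def execute(payload: dict, approved_digest: str) -> str:
    """Time of use: refuse to run anything that differs from the approved payload."""
    if not hmac.compare_digest(payload_digest(payload), approved_digest):
        raise PermissionError("payload changed after policy check")
    return f"ran {payload['tool']}"


# Simulate the race: the request is approved, then mutated before execution.
request = {"tool": "read_file", "args": {"path": "report.txt"}}
approved = policy_check(request)
request["tool"] = "shell_exec"  # attacker swaps the payload inside the window

try:
    execute(request, approved)
    outcome = "executed"
except PermissionError as err:
    outcome = f"blocked: {err}"

print(outcome)
```

Without the digest comparison in `execute`, the swapped payload would run under an approval granted to a different call. Request signing extends the same idea across process boundaries, where an in-memory digest is not enough.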

How does Nemotron 3 Super 120B compare to other open-source models?

Nemotron 3 Super uses a LatentMoE architecture with 120B total parameters but only 12B active per inference pass, combined with a native 1M-token context window achieved through Mamba-2 state-space layers rather than quadratic attention. NVIDIA’s benchmarks show 2.2x higher throughput than GPT-OSS-120B and 7.5x higher than Qwen3.5-122B. The model is available on Ollama, HuggingFace, and NVIDIA NIM. For NemoClaw deployments specifically, this architecture makes local inference on DGX Spark hardware practical — a 120B-quality model running with 12B-class compute requirements.

Why did Chinese AI stocks rally after Jensen’s OpenClaw comments?

Jensen’s declaration that OpenClaw is “the next ChatGPT” and his comparison to Linux, Kubernetes, and HTML signaled to investors that open-source AI agent infrastructure is entering its platform-standard phase — similar to how Linux became the default server OS. Chinese AI companies like Zhipu and Minimax, which build on open-source AI infrastructure, rallied because Jensen’s endorsement validated the market they operate in. For CTOs, the stock-market reaction is a useful signal: capital markets believe AI agents are infrastructure, not hype. But stock movements should inform strategic planning, not vendor selection.

What is the recommended first step for a CTO evaluating NemoClaw after GTC?

Start with a structured architecture assessment: map your current AI agent deployment (or planned deployment) against the OWASP Agentic Top 10 (ASI01-ASI10), evaluate your existing NVIDIA GPU infrastructure, and identify which NemoClaw capabilities address gaps in your current security posture. A 30-day pilot with 1 agent and 1 workflow provides empirical data without requiring a full commitment. Schedule an architecture review if you want an independent assessment before investing internal engineering resources.
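A minimal worksheet for that mapping exercise might look like the sketch below. Only the ASI01-ASI10 identifiers come from the assessment described above; the status labels, field names, and the two placeholder entries are hypothetical and should be replaced with your own findings.

```python
from dataclasses import dataclass

# Risk identifiers ASI01..ASI10, as referenced in the assessment step.
ASI_IDS = [f"ASI{n:02d}" for n in range(1, 11)]


@dataclass
class Assessment:
    risk_id: str
    existing_control: bool = False    # already covered by your current stack
    nemoclaw_addresses: bool = False  # your judgment after evaluation

    @property
    def status(self) -> str:
        if self.existing_control:
            return "covered"
        if self.nemoclaw_addresses:
            return "candidate for pilot"
        return "open gap"


def summarize(assessments) -> dict:
    """Count worksheet entries by status."""
    counts: dict = {}
    for item in assessments:
        counts[item.status] = counts.get(item.status, 0) + 1
    return counts


worksheet = {risk_id: Assessment(risk_id) for risk_id in ASI_IDS}
worksheet["ASI01"].existing_control = True    # placeholder finding
worksheet["ASI02"].nemoclaw_addresses = True  # placeholder finding

print(summarize(worksheet.values()))
```

Entries that land in "candidate for pilot" are natural inputs for choosing the single agent and workflow to test; "open gap" entries belong to the governance layer that sits above NemoClaw.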

Our architecture review maps your deployment against OWASP ASI01-ASI10, evaluates NemoClaw fit for your infrastructure, and delivers a prioritized recommendation — independent of vendor keynote pressure.
