“NemoClaw is hardware-agnostic at the software level. But the privacy router — the component that keeps sensitive data off cloud APIs — needs an NVIDIA GPU for full Nemotron local inference. Your hardware choice determines whether data residency is a configuration option or a compromise.”

— NVIDIA NemoClaw Documentation, March 2026

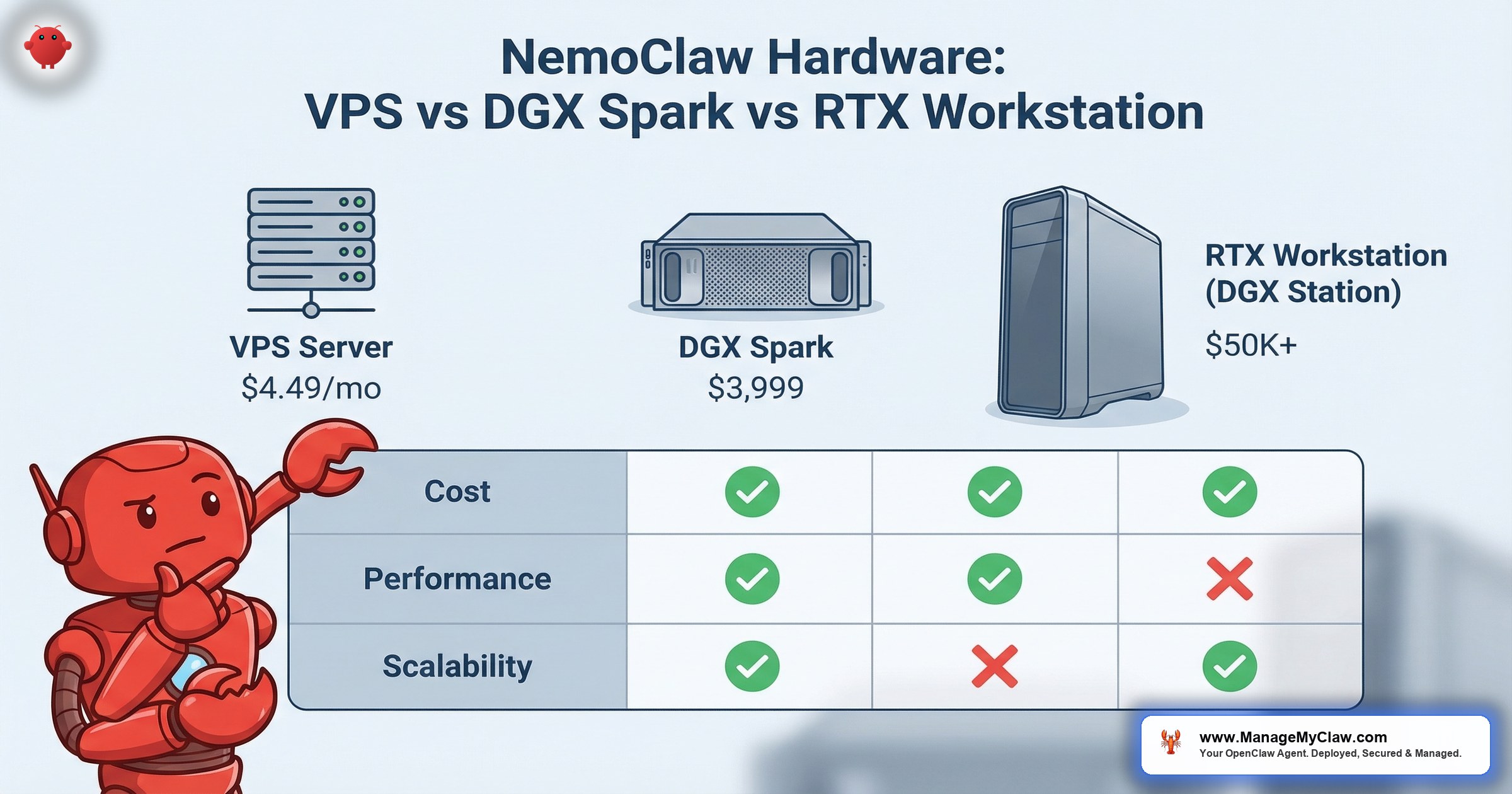

Gartner projects 40% of enterprise applications will include AI agents by end of 2026. NVIDIA shipped NemoClaw on March 16, 2026 (open-sourced March 6), with system requirements that appear modest: Linux, 4 vCPU, 8 GB RAM, Docker. A $4.49/month VPS from Hetzner meets those minimum specs. But the minimum specs tell you what will boot NemoClaw — not what will run a production deployment with local inference, privacy routing, and data residency controls that your compliance team requires.

The hardware decision is not about whether NemoClaw will install. It is about whether your deployment can enforce data sovereignty. The privacy router — the component that classifies queries and routes sensitive data to local Nemotron models instead of cloud LLMs — requires an NVIDIA GPU for full local inference. Without a GPU, you can run NemoClaw with cloud-only routing or smaller Ollama-based local models, but you cannot run production Nemotron inference on-premises. For organizations subject to HIPAA, SOC2, GDPR, or the EU AI Act (full enforcement August 2026), that distinction determines your entire compliance architecture.

This guide evaluates three hardware paths enterprise architects are considering today: VPS cloud infrastructure, the NVIDIA DGX Spark (Dell Pro Max GB10), and RTX workstations. We covered NemoClaw’s privacy routing architecture in our Privacy Router deep dive and the full security stack in our Architecture Deep Dive. This post is the infrastructure companion — what to buy, what it costs, and what each option enables or constrains in your NemoClaw deployment.

VPS monthly cost — cloud routing only, no local GPU inference

DGX Spark — GB10 Grace Blackwell Superchip, full local inference + privacy routing

The Three Questions That Determine Your Hardware Path

Before comparing specifications and pricing, answer three questions. Every other decision flows from these:

-

1

Does your data residency policy require local inference? If your compliance framework mandates that certain data categories never leave your infrastructure — HIPAA Protected Health Information, GDPR Article 17 personal data, SOC2 Type II sensitive classifications — you need GPU hardware capable of running Nemotron locally. Cloud-only routing means every query touches a third-party API. No GPU means no local inference for the privacy router.

-

2

What is your team size and deployment scope? A single developer evaluating NemoClaw for a proof-of-concept has fundamentally different hardware requirements than a 10-agent production deployment serving 200 employees. The VPS path scales horizontally with monthly costs. The DGX path scales vertically with one-time capital expenditure. The RTX path sits between them — developer-grade GPU inference for teams of 3–10.

-

3

What is your budget model: OpEx or CapEx? VPS infrastructure is pure operational expenditure — monthly bills that scale with usage. DGX Spark and RTX workstations are capital expenditure — upfront purchases that depreciate over 3–5 years. Your finance team’s preference for OpEx vs. CapEx may override technical considerations. Both models work. The TCO math changes depending on the timeline.

NemoClaw is hardware-agnostic at the software level. The OpenShell sandbox, YAML policy engine, and NemoClaw runtime run identically on a $4.49 VPS and a $50,000 DGX Station. The divergence is in the privacy router: without an NVIDIA GPU, you route all inference to cloud LLMs or run smaller Ollama-based local models. With an NVIDIA GPU, you run full Nemotron inference locally — the query never leaves your network. Same software. Different data residency posture.

VPS Deployments: Cloud-Only Routing at $4.49–$24/Month

The VPS path is the lowest-cost entry point for NemoClaw. Providers like Hetzner, DigitalOcean, and Linode offer Linux instances that meet NemoClaw’s minimum requirements — 4 vCPU, 8 GB RAM, Ubuntu 22.04+, Docker — at monthly costs between $4.49 and $24. For organizations already running cloud infrastructure, provisioning a NemoClaw instance is operationally identical to spinning up any other Linux workload.

NemoClaw Minimum System Requirements (All Paths)

- Operating System: Ubuntu 22.04+ LTS (or equivalent Linux, kernel 5.13+)

- CPU: 4 vCPU minimum (8+ recommended for multi-agent deployments)

- RAM: 8 GB minimum (16+ recommended with local inference models)

- Docker: Installed and running (required for container-based sandbox isolation)

- Node.js: v20 or later (LTS release)

- Network: Outbound HTTPS access to LLM provider APIs

What VPS Deployments Enable

On a VPS without GPU hardware, NemoClaw runs with full security stack functionality — OpenShell sandbox with Landlock, seccomp, and network namespace isolation; YAML policy engine with 4-level evaluation; audit logging; CrowdStrike Falcon integration. The sandbox does not require a GPU. The policy engine does not require a GPU. Every security primitive that NemoClaw provides works on commodity cloud infrastructure.

What changes is inference routing. Without a GPU, the privacy router operates in one of two modes:

- Cloud-only routing — All inference requests are routed to cloud LLM providers (OpenAI, Anthropic, Google, etc.). The privacy router still classifies queries by sensitivity level, but all classified queries go to cloud APIs. Your YAML policies control which providers receive which classifications, but the data leaves your network regardless.

- Ollama local models — You can run smaller, CPU-compatible models via Ollama for basic local inference. Models like Llama 3.1 8B or Mistral 7B can run on CPU-only instances, but response times are significantly slower than GPU-accelerated inference, and model capability is constrained compared to Nemotron. Suitable for development and testing. Not recommended for production workloads with latency requirements.

If your compliance framework requires that sensitive data categories never leave your infrastructure, a VPS without GPU hardware cannot satisfy that requirement for NemoClaw inference. The privacy router can classify queries, but it cannot run Nemotron locally to keep sensitive inference on-premises. For organizations where data residency is a regulatory obligation (not just a preference), the VPS-only path requires accepting that inference data reaches third-party cloud providers — or it requires upgrading to GPU-attached cloud instances, which changes the cost profile significantly.

VPS Cost Analysis

| Provider | Instance | Specs | Monthly Cost |

|---|---|---|---|

| Hetzner CX22 | Shared vCPU | 4 vCPU / 8 GB RAM / 80 GB NVMe | $4.49/mo |

| DigitalOcean Basic | Shared CPU | 4 vCPU / 8 GB RAM / 160 GB SSD | $48/mo |

| Hetzner CX32 | Shared vCPU | 8 vCPU / 16 GB RAM / 160 GB NVMe | $8.49/mo |

| Hetzner CCX23 | Dedicated CPU | 4 vCPU / 16 GB RAM / 80 GB NVMe | $15.49/mo |

| Hetzner CCX33 | Dedicated CPU | 8 vCPU / 32 GB RAM / 160 GB NVMe | $24.49/mo |

At $4.49–$24/month, the VPS path is orders of magnitude cheaper than GPU hardware. For teams that are evaluating NemoClaw’s security primitives, testing YAML policy configurations, or running deployments where all inference can safely route to cloud providers, VPS infrastructure is the rational starting point. We covered this hosting comparison in detail in our OpenClaw Mac Mini vs VPS hosting guide — the infrastructure math applies equally to NemoClaw deployments.

When VPS Is the Right Choice

- Proof-of-concept deployments — Testing NemoClaw’s sandbox, policy engine, and agent runtime before committing to GPU hardware

- Non-regulated workloads — Agents handling tasks where data residency is a preference, not a regulatory mandate

- Cloud-first organizations — Teams already running infrastructure on cloud providers who accept cloud LLM routing for all inference

- Budget-constrained evaluations — Testing the NemoClaw thesis at $54/year before committing $5,000+ to GPU hardware

- Multi-region deployments — Organizations needing NemoClaw instances in multiple geographies (EU, US, APAC) where shipping hardware is impractical

DGX Spark: GB10 Grace Blackwell Superchip at $3,999

The NVIDIA DGX Spark is now priced at $3,999 — not $4,756.84, which is the price of the Dell Pro Max GB10 configuration with 4 TB storage. The DGX Spark itself features the GB10 Grace Blackwell Superchip, delivering 1 petaFLOP of FP4 AI performance in a compact, power-efficient desktop form factor with 128 GB of unified memory. This is the hardware that makes the privacy router’s on-premises inference path viable without enterprise-grade DGX Station infrastructure.

For enterprise architects, the DGX Spark answers a specific question: Can we achieve full data residency for NemoClaw inference at a price point that does not require board-level capital approval? At $3,999, the answer shifts from “only with $50K+ DGX Station hardware” to “within a department’s discretionary budget.” As Constellation Research noted at GTC 2026: “NVIDIA launches NemoClaw, eyes to pair with DGX Spark, DGX Station” — confirming the deliberate hardware strategy connecting the agent runtime to purpose-built inference hardware.

DGX Spark (GB10 Grace Blackwell Superchip) — 1 petaFLOP FP4, 128 GB memory

DGX Spark Key Specifications

- Superchip: GB10 Grace Blackwell — NVIDIA’s unified CPU+GPU architecture

- AI Performance: 1 petaFLOP FP4 inference compute

- Memory: 128 GB unified (CPU and GPU share the same memory pool)

- Form Factor: Compact desktop — power-efficient, no data center required

- Clustering: Supports up to 4 DGX Spark systems in a unified configuration — creating a “desktop data center” with linear performance scaling

- Price: $3,999 (NVIDIA direct); Dell Pro Max GB10 with 4 TB storage at $4,756.84

What DGX Spark Enables

The DGX Spark running NemoClaw provides the full deployment stack:

- Full privacy router functionality — The privacy router classifies incoming queries by sensitivity level and routes sensitive data to Nemotron running locally on the GB10’s NVIDIA GPU. Sensitive inference never leaves your physical premises. Cloud LLMs handle non-sensitive general reasoning. This is the architecture described in our Privacy Router deep dive — fully realized on a single device.

- Complete security stack — OpenShell sandbox, YAML policy engine, kernel-level isolation (Landlock, seccomp, namespaces), audit logging. Everything that runs on a VPS also runs on DGX Spark — plus GPU-accelerated local inference.

- Offline-capable operation — With Nemotron running locally, agents can operate without any cloud API access for sensitive workflows. Air-gapped deployments become possible for classified environments, SCIF facilities, or high-security research labs. The YAML policy engine can enforce network isolation rules that prevent any outbound connectivity for designated agent workflows.

- Hybrid routing optimization — Use the full capability of cloud models (GPT-4, Claude, Gemini) for non-sensitive reasoning while routing PHI, PII, financial data, and classified information to local Nemotron inference. Cost optimization and compliance are not mutually exclusive on this hardware.

- Multi-unit clustering — NVIDIA supports connecting up to 4 DGX Spark systems in a unified configuration, creating a “desktop data center” with linear performance scaling. For departments needing more inference throughput than a single unit provides, clustering avoids the jump to DGX Station pricing. Four clustered units at $15,996 total deliver substantially more compute than a single unit while remaining under discretionary budget thresholds.

NVIDIA announced DGX Spark and NemoClaw-ready hardware availability through a broad OEM ecosystem: ASUS, Dell, GIGABYTE, HP, MSI, and Supermicro are all accepting orders, with shipping in the months ahead. This expands the hardware options beyond the Dell Pro Max GB10 that was the sole shipping product at GTC 2026. Enterprise-grade configurations with ECC memory, redundant power, and vendor support contracts are expected across multiple OEMs in Q2–Q3 2026. For organizations that require enterprise hardware support agreements, the OEM diversity provides procurement flexibility and competitive pricing.

Real user discussions about running NemoClaw on DGX Spark hardware are available on the NVIDIA developer forums at forums.developer.nvidia.com/t/nemoclaw-on-spark. These threads provide practical deployment experiences, performance observations, and configuration tips from early adopters — useful supplemental data for architecture planning beyond NVIDIA’s official documentation.

DGX Spark Deployment Architecture

A production DGX Spark NemoClaw deployment typically follows this architecture:

# Privacy Router Configuration — DGX Spark

inference:

local:

model: "nemotron-mini"

device: gpu:0

classifications:

- "phi" # Protected Health Information

- "pii" # Personally Identifiable Information

- "financial" # Financial records, account data

- "classified" # Internal-only business data

cloud:

providers:

- name: "openai"

model: "gpt-4o"

classifications:

- "general" # Non-sensitive reasoning

- "public" # Publicly available dataThis configuration gives your compliance team a verifiable boundary: queries classified as PHI, PII, financial, or classified data hit the local Nemotron model on the GB10’s GPU and never leave the device. General reasoning queries route to cloud LLMs for maximum capability. The YAML policy engine enforces these boundaries at the kernel level — agents cannot bypass the classification to send sensitive data to cloud providers.

When DGX Spark Is the Right Choice

- Regulated industries — Healthcare (HIPAA), financial services (SOC2, PCI-DSS), legal (attorney-client privilege), government (CMMC/NIST 800-171)

- Data residency mandates — EU AI Act compliance, GDPR data processing requirements, industry-specific data sovereignty rules

- Department-level deployments — 1–5 agents serving a single team or department, where $3,999 in hardware CapEx is within discretionary budget

- Pilot programs — Organizations running a 30-day proof-of-concept that needs to demonstrate full privacy routing capability to security and compliance reviewers

- Organizations with existing Dell relationships — Enterprise procurement teams that can add the Pro Max GB10 to an existing Dell contract for streamlined purchasing

RTX Workstations: Developer Evaluation and Team-Level Inference

RTX workstations equipped with NVIDIA RTX 4090 or RTX 5090 GPUs represent the middle path — more inference capability than a VPS, different positioning than the DGX Spark. These are developer-grade and team-grade machines that can run Nemotron models locally for NemoClaw privacy routing, with GPU architectures optimized for both inference and the general-purpose workstation tasks (rendering, simulation, development) that your engineering team already uses them for.

The RTX path is relevant for two enterprise scenarios:

- Developer evaluation — Engineering teams that already own RTX workstations can evaluate NemoClaw’s full privacy routing stack without any new hardware procurement. Install NemoClaw, configure the privacy router to use the existing RTX GPU, and test the complete deployment architecture. The evaluation produces real data about inference latency, model quality, and privacy router behavior that informs the hardware decision for production.

- Team-level production — For teams of 3–10 people running 1–3 agents, an RTX workstation provides sufficient GPU inference capability for NemoClaw privacy routing at production workloads. The RTX 4090 (24 GB VRAM) handles Nemotron-mini class models effectively. The RTX 5090 (32 GB VRAM) supports larger model variants with better throughput for concurrent agent requests.

| GPU | VRAM | Use Case | Approx. Cost (GPU Only) |

|---|---|---|---|

| RTX 4090 | 24 GB GDDR6X | Developer evaluation, small team inference (1–3 agents) | $1,599–$2,199 |

| RTX 5090 | 32 GB GDDR7 | Team-level production, larger Nemotron variants, higher concurrency | $1,999–$2,499 |

| RTX 4090 Workstation | 24 GB GDDR6X (full system) | Dedicated NemoClaw inference node for team deployment | $4,000–$7,000 (full build) |

| RTX 5090 Workstation | 32 GB GDDR7 (full system) | Production team inference with headroom for model scaling | $5,000–$8,000 (full build) |

The cost overlap between a high-end RTX workstation ($5,000–$8,000) and the DGX Spark ($3,999) is intentional on NVIDIA’s part. The DGX Spark is purpose-built for AI inference workloads. An RTX workstation is a general-purpose machine with GPU capability. For teams that need the workstation for other tasks (development, rendering, simulation) and want to add NemoClaw inference, the RTX path avoids purchasing dedicated hardware. For teams that want a dedicated NemoClaw inference appliance, the DGX Spark’s GB10 Superchip is architecturally optimized for the task.

If your NemoClaw privacy router shares GPU resources with other workstation tasks (rendering jobs, developer builds, ML training), inference latency becomes unpredictable. A developer running a large Docker build or a rendering pipeline will compete for GPU memory and compute. For production NemoClaw deployments where SLA guarantees matter, a dedicated inference device (DGX Spark or dedicated RTX workstation) is preferable to a shared developer machine.

DGX Station: Enterprise-Grade Multi-GPU for Large Deployments

For organizations deploying NemoClaw at scale — 10+ agents, multiple departments, hundreds of users — the NVIDIA DGX Station provides enterprise-grade infrastructure in a workstation form factor. The latest DGX Station features the GB300 Grace Blackwell Ultra Desktop Superchip, delivering 748 GB of coherent memory and 20 petaflops of AI compute — a 20x increase over the DGX Spark’s 1 petaFLOP. This is data-center-class inference capability designed for continuous operation with ECC memory, redundant components, and NVIDIA enterprise support.

DGX Station pricing starts at $50,000+ depending on configuration. This is infrastructure that requires C-level budget approval, data center planning, and enterprise procurement processes. For organizations at this scale, the hardware cost is a small fraction of the total NemoClaw deployment investment — implementation, policy configuration, compliance documentation, and ongoing managed care represent the majority of the budget. At this tier, the conversation shifts from “which hardware” to “which implementation partner.”

| Hardware | Superchip | Memory | AI Compute | Typical Scale | Price Range |

|---|---|---|---|---|---|

| DGX Spark | GB10 Grace Blackwell | 128 GB unified | 1 petaFLOP FP4 | 1–5 agents, single dept | $3,999 |

| DGX Spark (4x cluster) | 4x GB10 | 512 GB total | ~4 petaFLOPs FP4 | 5–15 agents, multi-team | $15,996 |

| DGX Station | GB300 Grace Blackwell Ultra | 748 GB coherent | 20 petaFLOPs | 10–50+ agents, enterprise | $50,000+ |

The DGX Station supports air-gapped configurations for organizations operating in strict regulatory environments — SCIF facilities, classified government networks, healthcare systems with physical isolation requirements. In air-gapped mode, all inference runs locally on the GB300 Superchip with zero network connectivity. The YAML policy engine enforces complete network isolation, and the privacy router operates exclusively against local Nemotron models. For defense, intelligence, and high-security healthcare deployments, air-gapped DGX Station is the reference architecture.

At the DGX Station tier, NemoClaw deployments can run full-size Nemotron models, multiple concurrent inference streams, and enterprise-scale privacy routing with sub-100ms classification and routing latency. The 748 GB of coherent memory on the GB300 supports running multiple large model variants concurrently — a larger, more capable model for complex financial analysis, a smaller, faster model for general PII redaction — all within the same privacy router configuration without memory contention.

Hardware Comparison: Side-by-Side Decision Matrix

The following table consolidates the key decision criteria across all hardware paths. Every row represents a question your architecture review team should answer before committing to a hardware path.

| Criteria | VPS ($4.49–$24/mo) | DGX Spark ($3,999) | RTX Workstation ($4K–$8K) | DGX Station ($50K+) |

|---|---|---|---|---|

| Local GPU Inference | No | Yes — GB10 Superchip | Yes — RTX 4090/5090 | Yes — Multi-GPU A100/H100 |

| Privacy Router: Full Nemotron | No — cloud-only or Ollama | Yes — full local inference | Yes — Nemotron-mini class | Yes — full-size Nemotron |

| Data Residency | Cloud providers only | Full on-premises for sensitive data | Full on-premises for sensitive data | Full on-premises, enterprise scale |

| OpenShell Sandbox | Full (kernel-level) | Full (kernel-level) | Full (kernel-level) | Full (kernel-level) |

| YAML Policy Engine | Full (4-level evaluation) | Full (4-level evaluation) | Full (4-level evaluation) | Full (4-level evaluation) |

| Agent Scale | 1–5 agents (CPU-bound) | 1–5 agents (GPU-accelerated) | 1–3 agents (shared GPU) | 10–50+ agents (multi-GPU) |

| Team Size | 1–20 (cloud inference limits) | 1–30 (single department) | 3–10 (developer/team) | 50–500+ (enterprise-wide) |

| Budget Model | OpEx (monthly) | CapEx (one-time) | CapEx (one-time) | CapEx (one-time + support) |

| Air-Gap Capable | No (requires internet) | Yes (Nemotron runs offline) | Yes (with dedicated config) | Yes (designed for it) |

| Enterprise Support | Cloud provider SLA | Dell ProSupport available | Vendor workstation warranty | NVIDIA DGX Enterprise Support |

| HIPAA/SOC2 Viable | Partial (cloud BAAs required) | Yes (full data residency) | Yes (with dedicated hardware) | Yes (enterprise compliance) |

3-Year Total Cost of Ownership: Infrastructure + Inference

Hardware cost is the visible expense. The less visible cost is inference — the per-token charges from cloud LLM providers that accumulate monthly. A VPS deployment with cloud-only routing generates ongoing inference costs that do not exist on GPU hardware running local Nemotron. The TCO analysis below models realistic enterprise usage: 5 agents, ~50,000 inference requests/month, with 30% classified as sensitive (requiring either local inference or premium cloud routing).

| Cost Component | VPS Path | DGX Spark Path | RTX Workstation Path |

|---|---|---|---|

| Hardware (Year 1) | $0 | $3,999 | $5,000–$8,000 |

| Hosting / Power (Year 1) | $294 ($24.49/mo x 12) | $360 (electricity estimate) | $360 (electricity estimate) |

| Cloud Inference (Year 1) | $3,600–$12,000 (all queries cloud-routed) | $1,200–$4,800 (non-sensitive only) | $1,200–$4,800 (non-sensitive only) |

| Year 1 Total | $3,894–$12,294 | $5,559–$9,159 | $6,560–$13,160 |

| Year 2 Total (recurring) | $3,894–$12,294 | $1,560–$5,160 | $1,560–$5,160 |

| Year 3 Total (recurring) | $3,894–$12,294 | $1,560–$5,160 | $1,560–$5,160 |

| 3-Year TCO | $11,682–$36,882 | $8,679–$19,479 | $9,680–$23,480 |

The crossover point is visible in Year 2. The VPS path has lower Year 1 costs if cloud inference volume is modest. But by Year 2, the DGX Spark path’s ongoing costs drop to hosting and non-sensitive cloud inference only — the $3,999 hardware is paid off, and sensitive inference runs locally at zero marginal cost. At 128 GB of unified memory with 1 petaFLOP of FP4 compute, the Spark handles production Nemotron inference for department-scale deployments without cloud per-token charges. For organizations with high inference volume or high ratios of sensitive data, the DGX Spark path reaches cost parity within 10–14 months and becomes cheaper thereafter.

The TCO table above does not include the cost of a compliance finding. If your organization routes HIPAA-covered PHI to cloud LLM providers without appropriate BAAs and audit controls, a single HIPAA violation can range from $100 to $50,000 per incident (with annual maximums up to $1.5 million per violation category under the HITECH Act). The $3,999 DGX Spark investment that enables full data residency should be measured against that regulatory exposure, not just against monthly VPS costs.

These infrastructure costs are separate from NemoClaw implementation and managed care. The software implementation — YAML policy configuration, sandbox hardening, compliance documentation, CrowdStrike integration — costs the same regardless of hardware path. ManageMyClaw deploys NemoClaw on all three hardware options. The implementation and ongoing managed care investment is identical whether your NemoClaw instance runs on a $4.49 VPS or a $3,999 DGX Spark.

Which Hardware Path: Decision Tree for Enterprise Architects

After reviewing the specifications, cost analysis, and deployment architectures, the decision typically resolves to one of four enterprise scenarios. Each scenario has a clear hardware recommendation.

Scenario A: Evaluation Phase (No Budget Approval Yet)

Recommendation: VPS ($4.49–$24/month)

You are exploring NemoClaw as a potential solution. Your team needs to understand the sandbox model, test YAML policy configurations, and evaluate whether NemoClaw’s architecture meets your security requirements. No capital expenditure is justified at this stage. Deploy on a VPS, configure cloud-only routing, and produce an evaluation report that either kills the initiative or justifies the hardware procurement request. The VPS deployment gives you 90% of the NemoClaw evaluation surface area at 1% of the cost of GPU hardware.

Scenario B: Regulated Industry, Department-Level Deployment

Recommendation: DGX Spark ($3,999)

Your compliance team requires data residency for sensitive inference. You are deploying 1–5 agents for a single department (legal, finance, healthcare operations). At $3,999, the DGX Spark is within discretionary budget authority. It provides full privacy routing with the GB10 Grace Blackwell Superchip, 128 GB of unified memory, and a verifiable compliance boundary that you can demonstrate to auditors. For departments needing more throughput, up to 4 units cluster together with linear scaling. This is the path for organizations where data sovereignty is a requirement, not a preference.

Scenario C: Engineering Team With Existing GPU Hardware

Recommendation: RTX Workstation (existing hardware)

Your engineering team already has RTX 4090 or 5090 workstations for development. You can evaluate NemoClaw’s full privacy routing stack — including local Nemotron inference — without any new procurement. The evaluation determines whether dedicated hardware (DGX Spark or DGX Station) is justified for production. This is the zero-CapEx path to a full-stack NemoClaw evaluation, and it produces the latency and throughput data your architecture review needs to make the production hardware decision.

Scenario D: Enterprise-Wide Deployment (50+ Users)

Recommendation: DGX Station ($50,000+)

You are deploying NemoClaw across multiple departments with 10+ agents serving 50–500+ users. Data residency is non-negotiable. SLA guarantees are required. The hardware cost is a fraction of the total deployment investment (implementation, managed care, compliance documentation). DGX Station provides enterprise-grade multi-GPU inference, NVIDIA support contracts, and the infrastructure to run full-size Nemotron models at scale. At this tier, start with a $2,500 architecture assessment to map your specific requirements before committing to hardware specifications.

of CISOs rank agentic AI as their #1 attack vector — Dark Reading

What Does Not Change Across Hardware Paths

The hardware decision affects inference routing and data residency. It does not affect the following NemoClaw capabilities, which are identical across all deployment platforms:

- OpenShell Sandbox — Kernel-level isolation via Landlock filesystem controls, seccomp system call filtering, network namespaces, and PID isolation. Works on any Linux kernel 5.13+. No GPU dependency.

- YAML Policy Engine — 4-level evaluation (binary, destination, method, path) with deny-by-default enforcement. CPU-only processing. No GPU dependency.

- CrowdStrike Falcon Integration — AIDR telemetry, identity-based agent controls, SOC dashboard visibility. Network-based integration. No GPU dependency.

- Audit Logging — Complete trail of every agent action, policy evaluation, and inference request. Storage-dependent, not GPU-dependent.

- OWASP ASI01–ASI10 Coverage — NemoClaw’s security architecture addresses all 10 OWASP Agentic Security Initiative categories. The coverage is architectural, not hardware-dependent.

- Compliance Documentation — SOC2 evidence packages, HIPAA mapping, EU AI Act alignment. Documentation is a governance output, not a hardware output.

“The architecture deep dive covers 3 security layers — OpenShell kernel sandbox, YAML policy engine with 4-level evaluation, and the privacy router. Two of those three layers are completely hardware-independent. The hardware decision only affects the third.”

— ManageMyClaw, NemoClaw Architecture Deep Dive

NemoClaw Hardware Decision FAQ

Can I start with a VPS and migrate to DGX Spark later?

Yes. NemoClaw’s configuration is portable. Your YAML policies, privacy router routing tables, and OpenShell sandbox configurations transfer directly from a VPS deployment to DGX Spark hardware. The migration is a re-deployment with the same configuration files on new hardware, plus adding the local inference endpoint to your privacy router configuration. No policy rewrite required. This is the most common path for organizations that start with evaluation and graduate to production.

Can NemoClaw run on AWS, Azure, or GCP GPU instances?

Yes. Cloud providers offer GPU-attached instances (AWS p4d/p5, Azure NC-series, GCP A2/A3) that provide NVIDIA GPU inference capability. This gives you local inference within the cloud provider’s data center — your data does not leave the cloud provider, but it does not stay on your physical premises either. For organizations where “data residency” means “within our cloud tenancy” (not “on our physical hardware”), cloud GPU instances provide a viable middle path. Cost is significantly higher than VPS — GPU cloud instances run $1,000–$5,000+/month.

Does ManageMyClaw support all three hardware paths?

Yes. ManageMyClaw deploys NemoClaw on VPS infrastructure, DGX Spark hardware, RTX workstations, DGX Stations, and cloud GPU instances. The $2,500 Assessment includes a hardware recommendation based on your compliance requirements, team size, and budget model. Implementation pricing ($15,000–$45,000) is the same regardless of hardware — the policy configuration, sandbox hardening, and compliance documentation work is identical.

Is the DGX Spark loud enough for an office environment?

The Dell Pro Max GB10 is designed as a desktop workstation, not a rack-mount server. Fan noise is comparable to a high-end desktop PC under load — audible but not disruptive in an office environment. For server-room deployments, noise is not a factor. For under-desk deployment in a quiet office, test with your specific workload before committing to placement.

What happens when NemoClaw moves from alpha to general availability?

NemoClaw is in alpha as of March 2026. NVIDIA has been transparent about this. When NemoClaw reaches GA, hardware requirements may change — particularly minimum GPU specifications for local inference. Organizations that deploy on DGX Spark or RTX 4090/5090 hardware today are positioned for the GA transition, as these GPUs exceed likely minimum requirements. VPS deployments are unaffected by GPU requirement changes since they do not run local inference. Our Architecture Deep Dive tracks NemoClaw’s evolution as NVIDIA releases updates.

Can I run multiple NemoClaw instances on one DGX Spark?

Yes, with caveats. Multiple NemoClaw instances can share the GB10 Superchip’s GPU for inference, but each instance needs its own CPU and RAM allocation. With the DGX Spark’s hardware profile, running 2–3 concurrent NemoClaw instances is practical for department-level deployments. Beyond that, GPU memory contention affects inference latency. For multi-department deployments requiring 5+ concurrent instances, the DGX Station’s multi-GPU architecture is the appropriate hardware tier.

Our $2,500 Assessment includes a hardware recommendation mapped to your compliance requirements, team size, and budget model — plus architecture review, OWASP ASI01–ASI10 gap analysis, and a prioritized implementation plan. Delivered in 1 week.

Schedule Architecture Review