60% of healthcare organizations will face delays in digital transformation due to noncompliance. That’s not a projection from a startup blog. That’s Gartner, warning that the organizations racing to deploy AI agents in clinical and administrative workflows are about to hit a regulatory wall they didn’t see coming.

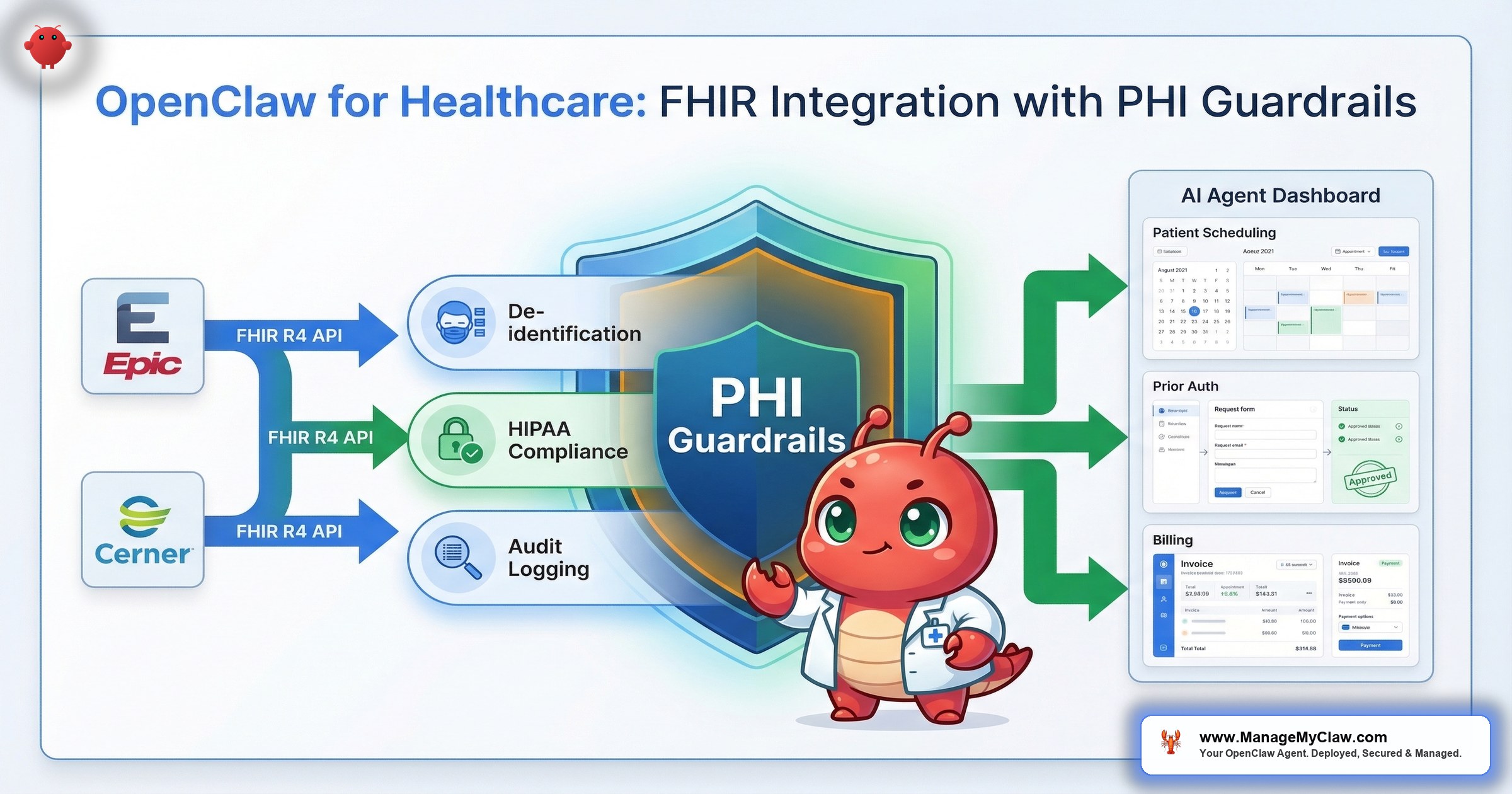

The collision point is specific: AI agents that interact with patient data through FHIR R4 APIs — the interoperability standard that connects Epic, Cerner, and Meditech — must satisfy HIPAA’s technical safeguards for electronic protected health information. By 2026, certified systems must prove they can safely integrate AI, disclose algorithmic risks, and deliver FHIR/SMART-based data exchange. That’s not guidance. That’s a certification requirement.

Think of FHIR like the USB-C of healthcare data. Every hospital system speaks it. But plugging an AI agent into that port without access controls is like giving a medical resident the administrator password to the entire hospital network on their first day.

OpenClaw’s agent architecture can connect to FHIR endpoints and automate administrative workflows across hospital departments. But healthcare isn’t a startup founder’s email inbox. The data is PHI. The penalties are real — up to $2.1 million per violation category per year. And the architecture decisions you make at deployment determine whether your AI agent network is a compliance asset or a HIPAA violation waiting for an audit.

The short answer up front: healthcare deployments require NemoClaw-level security — standard OpenClaw hardening isn’t sufficient for PHI.

Why FHIR R4 Is the Integration Layer (and Why It Matters for AI Agents)

FHIR R4 (Fast Healthcare Interoperability Resources, Release 4) is the standard API specification for exchanging electronic health records across systems. Epic, Cerner, and Meditech all expose FHIR R4 endpoints. If your hospital runs any of these EHRs — and over 90% of U.S. hospitals run at least one — FHIR is how external systems, including AI agents, access patient data.

For AI agent deployments, FHIR matters because it’s the authorized interoperability layer. Instead of building custom database connections to each EHR (a compliance nightmare and an engineering one), agents interact with standardized FHIR resources: Patient, Encounter, Observation, Condition, MedicationRequest. Each resource has a defined schema. Each API call is auditable. Each response contains structured data the agent can process without ambiguity.

The SMART on FHIR authorization framework adds OAuth 2.0-based access controls on top. An agent doesn’t get blanket access to the EHR. It gets scoped tokens — specific resources, specific patients, specific operations (read vs. write). This maps directly to the least-privilege principle that OWASP’s Agentic Top 10 identifies as foundational for AI agent security.

It’s like a library card system. FHIR is the catalog. SMART is the card itself. The card tells the library which sections you can enter, which books you can check out, and which ones you can only read in the building. An AI agent without SMART scoping is a visitor with a master key to every room.
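To make the library-card idea concrete, here is a minimal sketch of how a client could check a granted scope string before it attempts a FHIR call. It assumes SMART v1-style scopes of the form `context/Resource.operation`; the function names and the sample scope string are illustrative and do not come from OpenClaw or any EHR vendor. The authorization server remains the real enforcement point, so a check like this only helps an agent fail early.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scope:
    context: str    # "patient", "user", or "system"
    resource: str   # FHIR resource type, or "*" for all
    operation: str  # "read", "write", or "*"


def parse_scope(raw: str) -> Scope:
    """Parse a SMART v1-style scope such as 'patient/Observation.read'."""
    context, _, rest = raw.partition("/")
    resource, _, operation = rest.partition(".")
    if context not in {"patient", "user", "system"} or not resource or not operation:
        raise ValueError(f"not a resource scope: {raw!r}")
    return Scope(context, resource, operation)


def is_allowed(granted: str, resource: str, operation: str) -> bool:
    """Return True if the space-separated scope string permits the operation."""
    for raw in granted.split():
        try:
            scope = parse_scope(raw)
        except ValueError:
            continue  # skip non-resource scopes such as "openid" or "launch"
        if scope.resource in (resource, "*") and scope.operation in (operation, "*"):
            return True
    return False


# Hypothetical token contents for illustration only.
token_scopes = "launch patient/Observation.read patient/Condition.read"
print(is_allowed(token_scopes, "Observation", "read"))        # True
print(is_allowed(token_scopes, "Observation", "write"))       # False
print(is_allowed(token_scopes, "MedicationRequest", "read"))  # False
```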

Why this matters: If you’re evaluating OpenClaw for healthcare workflows, the question isn’t “can it connect to our EHR?” FHIR makes connection straightforward. The question is whether your deployment architecture enforces the access controls, audit trails, and data handling requirements that HIPAA mandates for every system touching ePHI.

The PHI Problem: Why Standard Hardening Isn’t Enough

The standard OpenClaw hardening stack — Docker sandboxing, DOCKER-USER iptables chain, Composio OAuth, tool allowlists, kill switch — addresses the operational security risks that every deployment faces. Network exposure. Credential exfiltration. Supply chain poisoning. Context compaction erasing safety rules. Those controls are necessary for healthcare. They’re not sufficient.

The gap is data sovereignty. When an OpenClaw agent processes a FHIR response containing a patient’s diagnosis codes, medication list, and insurance information, that data flows through the LLM inference pipeline. In a standard deployment, that inference call goes to a cloud API run by Anthropic, OpenAI, or another provider. The moment PHI leaves your organizational perimeter and reaches a third-party API endpoint that is not covered by a Business Associate Agreement, you’ve made an impermissible disclosure under HIPAA.

HIPAA Journal’s analysis “When AI Technology and HIPAA Collide” made this explicit: covered entities using AI systems that process PHI must have Business Associate Agreements with every vendor in the data flow chain. The BAA must cover the AI model provider, the hosting provider, and any intermediary that touches the data. Missing a single link in that chain is a violation.

OpenAI recognized this gap when they launched their “OpenAI for Healthcare” program — offering BAA-covered API access and HIPAA-eligible configurations. Platforms like Hathr.ai built their entire business on providing HIPAA-compliant Claude access with audit trails and access controls. These aren’t nice-to-haves. They’re the minimum viable architecture for any AI system touching patient data.

Deploying an AI agent with PHI access and no data sovereignty controls is like building a pharmacy with no lock on the door. The shelves are organized. The inventory system works. But anyone can walk in.

Why this matters: Standard OpenClaw hardening protects your infrastructure. Healthcare deployments must also protect the data flowing through that infrastructure. That requires either BAA-covered cloud inference or local model inference with no external data transmission. NemoClaw’s privacy router was built for exactly this pattern — routing PHI to local Nemotron models while sending non-sensitive reasoning to cloud APIs.

Healthcare AI Agent Networks: The Multi-Agent Model

A hospital isn’t a single workflow. It’s dozens of departments, each with distinct data access requirements and regulatory constraints. The AI agent model that works for healthcare isn’t a single all-powerful agent. It’s a coordinated network of specialized agents with clear role boundaries — what multi-agent AI research calls the planner/specialist/verifier model.

Planner agents coordinate workflows across departments — a discharge event triggers pharmacy notification, care coordination follow-up, and billing claim generation. The planner never touches PHI directly. Tool specialist agents execute operations within a single system with SMART-scoped FHIR access — one agent queries lab results, another processes claims, each with access to only the resources it needs. Verifier agents validate outputs before they’re committed — checking ICD-10 codes, insurance status, and procedure coverage before anything leaves the system.
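The role split can be sketched in a few lines. This is a toy illustration under stated assumptions, not OpenClaw’s actual agent API: the function names are hypothetical, the specialist returns hard-coded synthetic data instead of calling FHIR, and the ICD-10 check only tests the rough shape of a code, not whether the code exists.

```python
import re
from dataclasses import dataclass

# Rough ICD-10-CM shape check only; it does not confirm the code exists.
ICD10_SHAPE = re.compile(r"^[A-TV-Z][0-9][0-9AB]\.?[0-9A-TV-Z]{0,4}$")


@dataclass
class Claim:
    encounter_id: str
    icd10_code: str
    coverage_active: bool


def specialist_build_claim(encounter_id: str) -> Claim:
    """Stand-in for a specialist with scoped FHIR access (synthetic data here)."""
    return Claim(encounter_id=encounter_id, icd10_code="E11.9", coverage_active=True)


def verifier_check(claim: Claim) -> list[str]:
    """Return a list of problems; an empty list means the claim may proceed."""
    problems = []
    if not ICD10_SHAPE.match(claim.icd10_code):
        problems.append(f"malformed ICD-10 code: {claim.icd10_code}")
    if not claim.coverage_active:
        problems.append("coverage is not active")
    return problems


def planner_handle_discharge(encounter_id: str) -> str:
    """The planner routes work by ID and acts only on the verifier's verdict."""
    claim = specialist_build_claim(encounter_id)
    problems = verifier_check(claim)
    return "submitted" if not problems else "held: " + "; ".join(problems)


print(planner_handle_discharge("enc-001"))  # submitted
```

In a real deployment each role would run as a separate agent with its own credentials, so that a compromised planner holds no FHIR token at all.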

On r/AI_Agents, a thread titled “Open Claw the right tool as an automated fitness coach?” explored how users are already thinking about OpenClaw for health-adjacent applications. The community’s consensus: the agent framework works, but the data handling layer is where deployments succeed or fail. That observation scales directly to hospital environments.

Why this matters: A single-agent architecture with broad FHIR access creates the same risk profile as the inbox-wipe incident — one agent, too many permissions, no blast radius containment. Multi-agent networks with role separation reduce the blast radius of any single failure to one department, one data type, one workflow.

The 4 PHI Guardrails for Healthcare AI Agent Deployments

60% of healthcare organizations plan formal AI governance programs by 2026. Here’s what that governance looks like at the technical layer — the 4 controls that separate a compliant deployment from an audit finding.

1. De-Identification at Ingestion

Data pulled from FHIR endpoints for model training or workflow optimization must be de-identified at the point of ingestion. Patient names, MRNs, dates of birth, Social Security numbers, and other HIPAA identifiers are stripped before the data enters any training pipeline. Re-identification happens only within HIPAA-controlled environments where authorized users need to map back to specific patients for clinical purposes.

NemoClaw’s privacy router handles this at the infrastructure layer. YAML routing rules classify each FHIR resource field as sensitive or non-sensitive. Sensitive fields are either redacted before cloud inference or routed to local Nemotron models where the data never leaves the organizational perimeter.
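As a rough illustration of de-identification at ingestion, the sketch below strips direct identifiers from a FHIR Patient resource before it goes anywhere else. The field list is a hypothetical rule set chosen for this example; it is not NemoClaw’s rule format and not a complete implementation of HIPAA’s Safe Harbor method, which covers 18 identifier categories and has additional rules for dates and ages.

```python
import copy

# Hypothetical rule set: top-level Patient elements treated as direct identifiers.
IDENTIFIER_FIELDS = {"name", "identifier", "telecom", "address", "photo", "contact"}


def deidentify_patient(resource: dict) -> dict:
    """Return a copy of a FHIR Patient with direct identifiers removed."""
    if resource.get("resourceType") != "Patient":
        raise ValueError("expected a Patient resource")
    cleaned = copy.deepcopy(resource)
    for field in IDENTIFIER_FIELDS:
        cleaned.pop(field, None)
    # Keep only the birth year; a full date of birth is an identifier.
    if "birthDate" in cleaned:
        cleaned["birthDate"] = cleaned["birthDate"][:4]
    # The logical id can link back to the source record, so drop it as well.
    cleaned.pop("id", None)
    return cleaned


# Synthetic record for illustration only.
synthetic_patient = {
    "resourceType": "Patient",
    "id": "example-123",
    "identifier": [{"system": "urn:example:mrn", "value": "MRN-0001"}],
    "name": [{"family": "Testpatient", "given": ["Synthetic"]}],
    "birthDate": "1980-04-12",
    "gender": "female",
}

print(deidentify_patient(synthetic_patient))
```

Working on a deep copy keeps the original resource intact for the HIPAA-controlled environment where re-identification is permitted.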

2. SMART on FHIR Scoping

Every agent in the network gets a SMART-scoped access token with the minimum FHIR resources required for its function. A prior authorization agent gets read access to Condition, MedicationRequest, and Coverage resources. It doesn’t get access to AllergyIntolerance, DiagnosticReport, or any resource outside its task scope. Scoping is enforced at the API level — the agent can’t bypass it regardless of what it’s instructed to do via prompt.

This is the FHIR-native equivalent of Composio OAuth’s tool allowlists, extended to clinical data. The principle is identical: a permission that doesn’t exist can’t be exploited.
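On the configuration side, one way to keep scoping honest is to derive each agent’s token request from a single role map and refuse any role that is not listed. The sketch below assumes SMART Backend Services-style `system/` scopes; the role names and the map itself are hypothetical.

```python
# Hypothetical role-to-resource map; role names are illustrative.
AGENT_READ_RESOURCES = {
    "prior_auth": ["Condition", "MedicationRequest", "Coverage"],
    "lab_results": ["Observation", "DiagnosticReport"],
}


def scope_request(role: str) -> str:
    """Build the space-separated scope string to request for an agent role."""
    resources = AGENT_READ_RESOURCES.get(role)
    if resources is None:
        # Deny by default: an unlisted role gets no scopes at all.
        raise PermissionError(f"no scopes defined for role {role!r}")
    return " ".join(f"system/{r}.read" for r in resources)


print(scope_request("prior_auth"))
# system/Condition.read system/MedicationRequest.read system/Coverage.read
```

Because the scope string is generated rather than hand-written, a reviewer can audit one small map instead of every agent’s configuration.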

3. Audit Trail for Every PHI Access

HIPAA’s Security Rule (45 CFR 164.312(b)) requires audit controls that record and examine activity in systems containing ePHI. For AI agent deployments, this means every FHIR query, every data element accessed, every inference call, and every action taken should be logged with timestamps, agent identity, and data classifications. The rule does not prescribe a log format, but a trail that is tamper-evident and readily available for HHS Office for Civil Rights review is far easier to defend in an audit.

NemoClaw’s OpenShell sandbox generates this trail automatically. Every tool invocation, file access, and network call is recorded. Combined with the privacy router’s routing decision logs, you get a complete data flow record that answers: “What PHI did this agent access, when, why, and where was it processed?”
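For readers who want a feel for what a tamper-evident trail looks like, here is a minimal hash-chained log. It is a generic technique sketched for illustration, not a description of how OpenShell stores its logs; the entry fields and agent names are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone


def append_entry(log: list, agent_id: str, action: str, resource: str) -> dict:
    """Append an audit entry whose hash covers the previous entry's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action": action,
        "resource": resource,
        "prev_hash": log[-1]["hash"] if log else "0" * 64,
    }
    payload = json.dumps(entry, sort_keys=True).encode()
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited or mid-chain-removed entry breaks the chain."""
    prev_hash = "0" * 64
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode()
        if entry["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "prior-auth-agent", "read", "Condition/abc")
append_entry(audit_log, "prior-auth-agent", "read", "Coverage/def")
print(verify_chain(audit_log))  # True

audit_log[0]["resource"] = "Condition/zzz"  # simulate tampering
print(verify_chain(audit_log))  # False
```

A chain like this does not detect truncation of the newest entries on its own, so production systems typically also ship the latest hash to a separate store such as a SIEM.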

4. Human-in-the-Loop for Clinical Decisions

AI agents in healthcare can automate administrative workflows end-to-end: prior authorizations, claims submissions, appointment scheduling, discharge coordination. Clinical decisions — treatment plans, medication changes, diagnosis coding that affects care — require mandatory human review before execution. The agent surfaces recommendations. A clinician approves them. No exceptions.

This isn’t just a governance best practice. The regulatory trajectory is clear: by 2026, certified systems must disclose algorithmic risks and prove they can safely integrate AI. An agent that autonomously modifies a treatment plan without clinician review is a liability that no BAA will cover.
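The approval gate itself can be very small. The sketch below assumes a hypothetical action type and an allowlist of administrative action kinds; anything not on that list is held until a clinician identifier is attached, so new action kinds are gated by default.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical allowlist of action kinds that may run without review.
ADMINISTRATIVE = {"prior_authorization", "claim_submission", "appointment_scheduling"}


@dataclass
class ProposedAction:
    kind: str
    summary: str
    approved_by: Optional[str] = None  # clinician identifier, set on review


def execute(action: ProposedAction) -> str:
    """Run administrative actions directly; hold everything else for sign-off."""
    if action.kind in ADMINISTRATIVE:
        return f"executed: {action.summary}"
    if action.approved_by is None:
        return f"pending clinician review: {action.summary}"
    return f"executed with approval from {action.approved_by}: {action.summary}"


print(execute(ProposedAction("claim_submission", "submit claim for enc-001")))
print(execute(ProposedAction("medication_change", "adjust dose")))
print(execute(ProposedAction("medication_change", "adjust dose", approved_by="dr-0042")))
```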

Why this matters: These 4 guardrails map to the technical safeguards in 45 CFR 164.312. They’re not optional configurations. They’re the minimum requirements for any AI system that accesses, processes, or transmits ePHI. Skip any one of them and you’ve got a gap that an OCR audit will find.

Revenue Cycle Automation: Where the ROI Is Real

Administrative costs consume an estimated 15-30% of U.S. healthcare spending. The highest-ROI targets for AI agent automation aren’t clinical. They’re the revenue cycle workflows that are repeatable, rule-bound, and drowning in manual labor.

Prior authorization automation is the standout. A prior auth agent queries the EHR for the patient’s diagnosis (Condition resource), the requested procedure (ServiceRequest), and insurance coverage (Coverage resource) via FHIR. It checks the payer’s requirements against the clinical documentation, assembles the submission, and routes it for verifier review. At scale — 10,000+ monthly transactions — prior authorization automation recoups deployment costs within 60 days.
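The three lookups described above translate into ordinary FHIR R4 search requests. The sketch below only builds the URLs; it makes no network calls, and the base URL is a placeholder rather than a real endpoint. The search parameters used (`patient`, `beneficiary`, `clinical-status`, `status`) are standard R4 parameters, but which ones a given EHR supports should be checked against its capability statement.

```python
from urllib.parse import urlencode

FHIR_BASE = "https://fhir.example.org/r4"  # placeholder, not a real endpoint


def prior_auth_queries(patient_id: str) -> dict:
    """Build the three FHIR searches a prior authorization agent would issue."""
    searches = {
        "Condition": {"patient": patient_id, "clinical-status": "active"},
        "ServiceRequest": {"patient": patient_id, "status": "active"},
        "Coverage": {"beneficiary": patient_id, "status": "active"},
    }
    return {
        rtype: f"{FHIR_BASE}/{rtype}?{urlencode(params)}"
        for rtype, params in searches.items()
    }


for resource_type, url in prior_auth_queries("123").items():
    print(resource_type, url)
```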

Revenue cycle automation broadly — including claims status tracking, denial management, and patient eligibility verification — shows positive ROI within 90 days for organizations processing sufficient volume. The workflows pass all 3 of the automatable task properties: they’re repeatable, have structured inputs (FHIR resources), and have defined outputs (approved/denied/pending statuses).

On r/EnhancerAI, a post titled “30+ Awesome OpenClaw Use Cases with .md files!” cataloged real-world implementations. Healthcare-adjacent use cases — appointment coordination, patient communication, documentation summarization — appeared repeatedly. The community is already building these workflows. The missing piece is the compliance layer.

Revenue cycle automation in healthcare is like email triage for founders — high volume, rule-based, enormous time savings — except the data is PHI and the regulatory requirements multiply every step.

Why this matters: If you’re evaluating OpenClaw for healthcare, start with revenue cycle. It’s the workflow category with the shortest path to measurable ROI, the most structured data (FHIR resources), and the most straightforward compliance mapping. Clinical workflows require deeper governance. Administrative workflows require the same 4 guardrails but with clearer automation boundaries.

NemoClaw: The Healthcare Deployment Architecture

Standard OpenClaw hardening provides operational security. Healthcare needs operational security plus data sovereignty plus compliance-grade audit trails plus privacy-aware inference routing. That’s NemoClaw’s architecture.

Here’s how NemoClaw’s 3-layer stack maps to healthcare requirements:

| NemoClaw Layer | Healthcare Function | HIPAA Requirement |
|---|---|---|
| Privacy Router | Classifies FHIR response fields as PHI or non-PHI. Routes PHI to local Nemotron. Redacts identifiers from cloud-bound requests. | Transmission security (164.312(e)(1)); no unauthorized disclosure |
| OpenShell Sandbox | Kernel-level isolation for each agent. Complete audit trail of every tool invocation and data access. | Audit controls (164.312(b)); access controls (164.312(a)(1)) |
| YAML Policy Engine | Per-department agent permissions. Deny-by-default. Human-readable policies for compliance review. | Access controls (164.312(a)(1)); documentation (164.316) |

The privacy router is the critical piece. When a FHIR specialist agent queries a patient’s medication history, the response contains PHI. The privacy router intercepts the inference call, detects the PHI elements, and routes reasoning to a local Nemotron model. The PHI never leaves your network. IQVIA — managing clinical trial data across thousands of facilities — is one of NemoClaw’s 17 enterprise launch partners, a signal that healthcare’s data infrastructure leaders consider this architecture the right direction.
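The routing decision can be illustrated with a deliberately conservative sketch. This is not NemoClaw’s implementation or rule syntax: the allowlist, function names, and the `"local"`/`"cloud"` labels are assumptions made for the example. The idea is to treat every FHIR resource as PHI unless its type is explicitly listed as non-sensitive.

```python
# Hypothetical allowlist: container and terminology resources with no patient data.
NON_PHI_TYPES = {"Bundle", "CapabilityStatement", "CodeSystem", "ValueSet"}


def contains_phi(payload) -> bool:
    """Walk a JSON-like structure and flag any FHIR resource not on the allowlist."""
    if isinstance(payload, dict):
        rtype = payload.get("resourceType")
        if rtype is not None and rtype not in NON_PHI_TYPES:
            return True
        return any(contains_phi(value) for value in payload.values())
    if isinstance(payload, list):
        return any(contains_phi(item) for item in payload)
    return False


def route(payload) -> str:
    """Pick an inference target; anything that may hold PHI stays local."""
    return "local" if contains_phi(payload) else "cloud"


bundle = {
    "resourceType": "Bundle",
    "entry": [{"resource": {"resourceType": "MedicationRequest", "status": "active"}}],
}
print(route(bundle))  # local
print(route({"prompt": "Summarize this payer policy document"}))  # cloud
```

A structural check like this misses PHI embedded in free text, so a real router would also need content-level detection before anything is sent to a cloud model.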

Why this matters: Healthcare AI deployments that send PHI to cloud inference without a BAA are incompatible with HIPAA. A BAA with the cloud LLM provider and encryption in transit address the legal requirement, but PHI still leaves your organizational perimeter on every inference call. Local model inference behind a privacy router removes that exposure entirely, which is why this article treats NemoClaw-level security as the baseline for healthcare rather than an optional upgrade.

Implementation: The Healthcare Deployment Sequence

Healthcare deployments follow a 3-phase sequence that standard business deployments don’t require.

Phase 1: Compliance mapping (Week 1-2). Map every FHIR resource to HIPAA data categories. Classify each field as PHI, quasi-identifier, or non-sensitive. Document the BAAs required for every vendor in the data flow. If any link can’t provide a BAA, it can’t touch PHI.

Phase 2: Infrastructure deployment (Week 2-4). Deploy the NemoClaw stack. Install local Nemotron models on NVIDIA hardware. Configure FHIR connections with SMART-scoped tokens. Set up SIEM integration for audit log export. Test de-identification rules against synthetic patient data before production data enters the system.

Phase 3: Single-workflow pilot (Week 4-8). Start with 1 revenue cycle workflow — prior authorization is the strongest candidate. Run with a single department, monitor routing decisions daily, generate compliance documentation. Expand only after the pilot validates both architecture and compliance posture.

ManageMyClaw’s enterprise deployment service covers this entire pipeline for healthcare organizations — compliance mapping, NemoClaw infrastructure, FHIR integration, and ongoing managed care with HIPAA-specific monitoring.

The Bottom Line

OpenClaw’s agent architecture works for healthcare workflows. FHIR R4 gives you a standardized, auditable integration layer. Multi-agent coordination with role separation gives you blast radius containment. Revenue cycle automation delivers ROI within 90 days.

Standard OpenClaw hardening is not sufficient for healthcare. The moment your agent touches PHI, you need data sovereignty controls that standard Docker sandboxing and Composio OAuth don’t provide. De-identification at ingestion, SMART-scoped FHIR access, compliance-grade audit trails, and privacy-aware inference routing are non-negotiable.

NemoClaw’s architecture is the deployment model for healthcare. Privacy router for PHI data sovereignty. OpenShell for audit-grade logging. YAML policy engine for per-department access controls that compliance officers can read and verify. It’s in alpha — not production-ready for regulated data today — but the organizations piloting now will be production-ready at GA. The organizations waiting will be 6-12 months behind while Gartner’s 60% noncompliance prediction comes true around them.

Healthcare AI isn’t a question of if. It’s a question of architecture. Get the architecture right and you’ve got a compliance asset that automates 15-30% of your administrative cost base. Get it wrong and you’ve got a HIPAA violation with a $2.1 million ceiling per category per year.

Frequently Asked Questions

Can OpenClaw connect to Epic, Cerner, and Meditech EHR systems?

Yes, through FHIR R4 APIs. Epic, Cerner, and Meditech all expose FHIR R4 endpoints for external system integration. OpenClaw agents connect to these endpoints using SMART on FHIR authorization, which provides OAuth 2.0-based scoped access tokens. The agent interacts with standardized FHIR resources (Patient, Encounter, Condition, MedicationRequest) rather than proprietary database schemas. The integration layer is standardized. The compliance layer — de-identification, audit trails, privacy routing — is where the architecture work lives.

Is standard OpenClaw hardening enough for HIPAA compliance?

No. Standard hardening (Docker sandboxing, firewall rules, Composio OAuth, tool allowlists) addresses operational security but doesn’t provide the data sovereignty controls HIPAA requires for PHI. Healthcare deployments need de-identification at ingestion, SMART-scoped FHIR access, compliance-grade audit trails, privacy-aware inference routing, and Business Associate Agreements covering every vendor in the data flow. NemoClaw’s architecture provides the technical controls. Your organization provides the governance framework, BAAs, and staff training.

What healthcare workflows have the highest ROI for AI automation?

Revenue cycle workflows: prior authorization automation recoups costs within 60 days at 10,000+ monthly transactions. Claims status tracking, denial management, and patient eligibility verification show positive ROI within 90 days. These workflows pass the automation test — repeatable, structured inputs (FHIR resources), defined outputs. Clinical decision support workflows have high potential but require mandatory human-in-the-loop review, longer pilot periods, and deeper governance frameworks.

How does the privacy router handle PHI in FHIR responses?

NemoClaw’s privacy router intercepts every inference call and classifies data using YAML-configurable rules. When a FHIR response contains PHI elements (patient names, MRNs, diagnosis codes, medication lists), the router either strips identifiers from cloud-bound requests or routes the entire inference call to a local Nemotron model where PHI never leaves the organizational perimeter. Every routing decision is logged — data types detected, model selected, redaction applied, timestamp — creating the audit trail HIPAA’s Security Rule requires.

What about using AI agents for clinical decision support?

AI agents can surface clinical recommendations — flagging drug interactions, suggesting diagnosis codes based on documentation, identifying patients overdue for screenings. But clinical decisions that affect patient care must have mandatory human-in-the-loop review before execution. The agent recommends. The clinician decides. By 2026, certified systems must disclose algorithmic risks and prove safe AI integration. Autonomous clinical decision-making without physician review isn’t just risky — it’s on track to be explicitly prohibited by certification requirements.

Is NemoClaw ready for production healthcare deployments today?

No. NemoClaw shipped as early-stage alpha on March 16, 2026. IQVIA and 16 other enterprise partners are committed, but the platform isn’t recommended for production use with live PHI at this stage. The recommended path: evaluate the architecture now, pilot with synthetic patient data, build your compliance framework and YAML routing rules, and deploy to production when NemoClaw reaches general availability. Organizations that prepare now will be production-ready at GA. The 60% Gartner warns about are the ones that haven’t started.

Do we need a Business Associate Agreement with the LLM provider?

If any PHI reaches the LLM provider’s servers, yes. HIPAA requires a BAA with every business associate that creates, receives, maintains, or transmits ePHI. OpenAI’s Healthcare program and platforms like Hathr.ai offer BAA-covered access. Alternatively, NemoClaw’s privacy router can route all PHI-containing inference to local Nemotron models, eliminating the need for a BAA with the cloud LLM provider entirely — because the PHI never reaches them. Either path satisfies the requirement. Doing neither is a violation.

Evaluating OpenClaw for Healthcare?

Healthcare deployments require NemoClaw-level security — privacy routing, compliance-grade audit trails, and per-department access controls. ManageMyClaw Enterprise handles compliance mapping, NemoClaw infrastructure deployment, FHIR integration, and ongoing managed care with HIPAA-specific monitoring. Start with a compliance architecture review.
