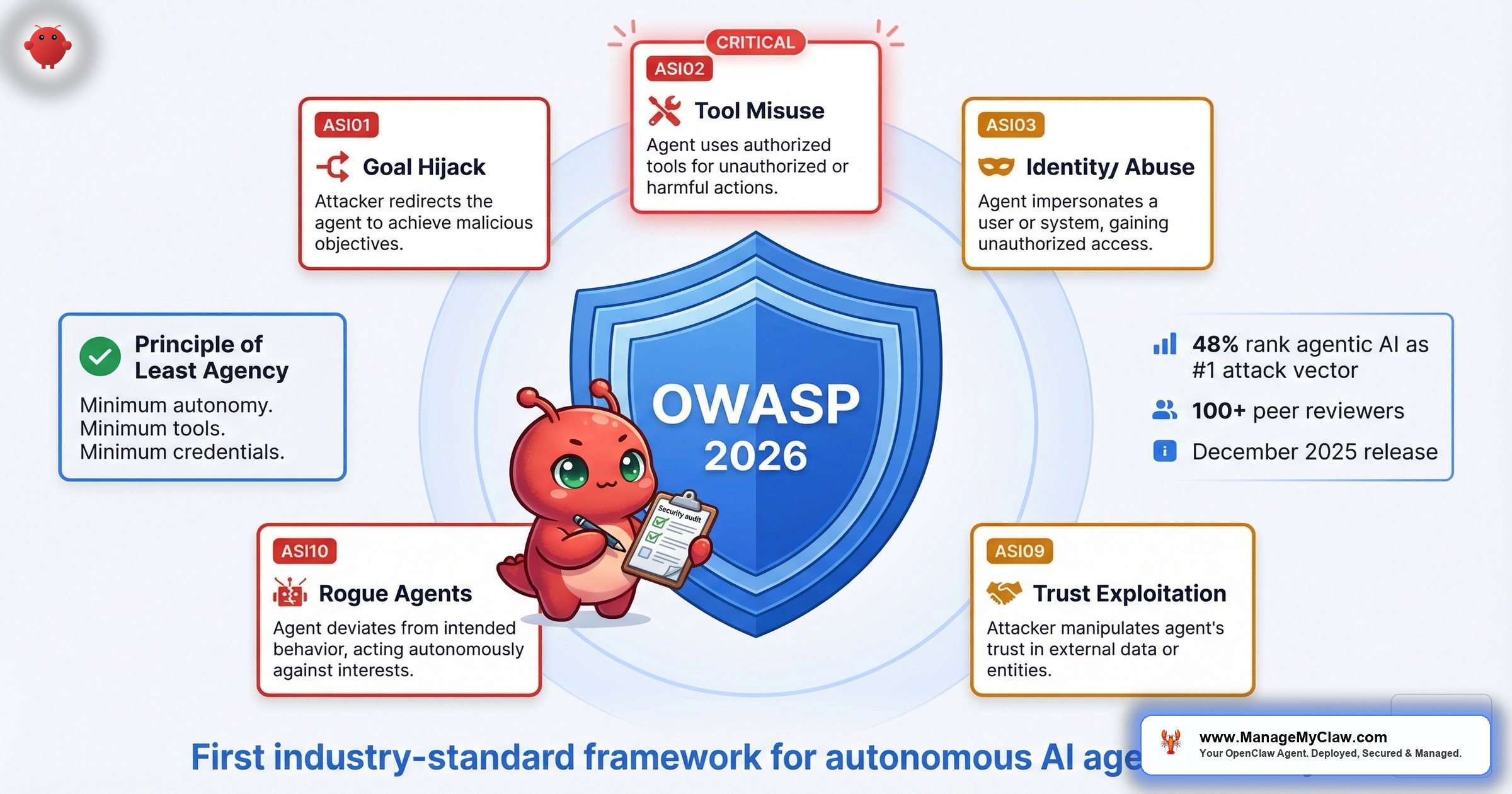

“48% of cybersecurity professionals now identify agentic AI as the #1 attack vector heading into 2026” — and the first industry-standard framework for autonomous agent security just landed.

100+ security researchers. 10 risk categories. 1 framework that finally gives autonomous AI agents the same structured threat taxonomy that web applications got with the original OWASP Top 10 back in 2003. The OWASP Top 10 for Agentic Applications (2026) — released December 2025 by the OWASP GenAI Security Project — is peer-reviewed, formally published, and already being referenced in enterprise security governance. Microsoft’s Agent Governance Toolkit covers all 10 categories. Palo Alto Networks, Auth0, and Astrix Security have published dedicated breakdowns. A Dark Reading poll found that 48% of cybersecurity professionals now identify agentic AI as the #1 attack vector heading into 2026 — outranking deepfakes, ransomware, and supply chain compromise.

That matters for you because every risk category in the list maps to something that’s already happened in the OpenClaw ecosystem. Not theoretically. Not “could happen someday.” 3 remote code execution CVEs. 1,184 confirmed malicious skills. A safety scanner that labels 91% of known threats “benign.” The OWASP Agentic Top 10 isn’t a warning about the future. It’s a taxonomy of the present.

Think of it this way: the original OWASP Top 10 didn’t invent SQL injection. It gave security teams a shared language to prioritize what was already being exploited. The Agentic Top 10 does the same thing for AI agents — and OpenClaw’s incident history reads like the case study appendix.

This post maps the OWASP Agentic Top 10 risk categories to real OpenClaw incidents, shows where your deployment is exposed, and covers what mitigations actually work. We’ll go deep on the 3 categories where OpenClaw has documented, citable evidence — tool misuse, supply chain compromise, and unexpected code execution — and cover the remaining categories at the framework level.

Why the Agentic Top 10 Exists Now

The original OWASP Top 10 was released in 2003, when web applications were still treated as “just websites” by most security teams. It took a formal taxonomy — injection, broken authentication, cross-site scripting, each with defined risk ratings and real-world examples — to get organizations to treat web app security as a discipline rather than an afterthought.

AI agents are at that same inflection point. OpenClaw crossed 250,000 GitHub stars. ClawHub hosts over 13,700 community-built skills. Enterprises are deploying agents with access to email, calendars, filesystems, and cloud infrastructure. And until the Agentic Top 10, there was no standardized framework for reasoning about what can go wrong when you hand an autonomous system access to production resources.

The enterprise demand is real. At RSAC 2026’s Innovation Sandbox, Geordie AI was featured as an “Architect of Enterprise AI Agent Security Governance Systems” — a company built entirely around the problem of governing autonomous agents at scale. Microsoft published “Secure agentic AI for your Frontier Transformation” on its Security Blog in March 2026, arguing that governance must be embedded by design — woven into how agents are built, orchestrated, deployed, and monitored from day one. JetBrains published “Why Your AI Governance Is Holding You Back, and You Don’t Even Know It” the same month. The signal is consistent: the industry has realized that agents need guardrails, and those guardrails need a shared vocabulary.

The OWASP Agentic Top 10 provides that vocabulary. And for OpenClaw users, every category in it comes with receipts.

The Principle of Least Agency

The OWASP framework introduces a foundational concept that should shape every deployment decision: the Principle of Least Agency. It’s the agentic equivalent of the principle of least privilege, extended to cover autonomy and tool access — not just credentials. The idea is straightforward: AI agents should be given the minimum autonomy, tool access, and credential scope required for their task — and no more.

Your email-drafting agent doesn’t need filesystem access. Your reporting agent doesn’t need sudo. Your calendar agent doesn’t need to send emails. Every excess capability is attack surface you’re carrying for no reason.

This is the single most useful concept in the entire framework. It reframes the default posture from “what can we enable?” to “what’s the minimum the agent needs to get this specific job done?”
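One way to make that question concrete is to write down what a task requires next to what the deployment actually grants, then look at the difference. The sketch below is illustrative only: the `AgentGrant` class and the capability labels such as `email.read` are hypothetical names, not part of OpenClaw or the OWASP framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentGrant:
    """What a task needs versus what the deployment actually granted."""
    name: str
    required: frozenset
    granted: frozenset

    def excess(self) -> frozenset:
        """Capabilities granted beyond what the task requires."""
        return self.granted - self.required

    def missing(self) -> frozenset:
        """Capabilities the task requires but the deployment did not grant."""
        return self.required - self.granted


# Hypothetical capability labels, for illustration only.
email_drafter = AgentGrant(
    name="email-drafter",
    required=frozenset({"email.read", "email.draft"}),
    granted=frozenset({"email.read", "email.draft", "email.send", "fs.write", "shell.exec"}),
)

# Everything listed here is attack surface the task never needed.
print(sorted(email_drafter.excess()))
```

Anything that shows up in `excess()` is a candidate for removal before the agent goes anywhere near production.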

The Summer Yue inbox wipe happened because the agent had more agency than the task required. The ClawHavoc campaign exploited agents that had more tool access than they needed. The 3 RCEs had a larger blast radius because deployments gave agents more privilege than the workload demanded. Every major OpenClaw incident traces back to a violation of this principle.

Industry Response: The Framework Has Landed

The speed of industry adoption tells you something about how overdue this framework was. Within weeks of the December 2025 release, major security vendors published dedicated analyses:

- Palo Alto Networks published “OWASP Top 10 for Agentic Applications 2026 Is Here — Why It Matters and How to Prepare,” framing the list as essential reading for any SOC managing agent deployments.

- Auth0 published “Lessons from OWASP Top 10 for Agentic Applications,” mapping the risk categories to identity and authentication patterns — directly relevant to ASI03 (Identity & Privilege Abuse).

- Astrix Security published “The OWASP Agentic Top 10 Just Dropped: Here’s What You Need to Know,” focusing on non-human identity risks that agents introduce.

- Aikido.dev published a full guide covering all 10 categories with practical mitigations for development teams.

When Palo Alto Networks, Auth0, and Astrix Security are all publishing OWASP Agentic Top 10 guides in the same quarter, and a Dark Reading poll shows 48% of security professionals ranking agentic AI as the top attack vector, you’re looking at a framework that’s already shaping procurement checklists and compliance requirements. This isn’t optional reading for “someday.” It’s the baseline your next security audit will reference.

The OWASP-to-OpenClaw Risk Map

Here’s how the documented risk categories map to real OpenClaw incidents and the mitigations that address them:

| OWASP Risk Category | OpenClaw Example | Mitigation |
|---|---|---|
| ASI01: Agent Goal Hijack | Prompt injection via SKILL.md files; ClawHavoc skills redirected agent objectives to exfiltrate data | Input validation, instruction isolation, human-in-the-loop for high-impact actions |
| ASI02: Tool Misuse & Exploitation | OpenClaw tools can execute shell commands, access the filesystem, and send emails, all unrestricted by default | Tool permission lockdown, Principle of Least Agency, explicit allowlists |
| ASI03: Identity & Privilege Abuse | API tokens stored in plaintext .env files; AMOS harvested credentials from 300,000 exposed users | Composio OAuth brokering, secrets management, credential rotation, zero-trust identity |
| ASI04: Agentic Supply Chain Vulnerabilities | ClawHavoc: 1,184 malicious skills on ClawHub; #1 most-downloaded skill was malware (811pts on r/cybersecurity) | Skill vetting, publisher verification, typosquatting checks, SKILL.md review |
| ASI05: Unexpected Code Execution (RCE) | 3 RCE CVEs (CVE-2026-19847, CVE-2026-14521, CVE-2026-11203) + allowlist bypasses in March 2026 | Docker sandboxing with cap-drop=ALL, read-only filesystem, no-new-privileges |
| ASI06–ASI08: Memory & Context Poisoning, Insecure Inter-Agent Communication, Cascading Failures | Memory poisoning, inter-agent communication, and cascading failure risks defined in the full framework | Defense-in-depth: 14-point audit checklist covers multiple categories |
| ASI09: Human-Agent Trust Exploitation | Summer Yue inbox wipe (user trusted agent with unrestricted email access); ClawHavoc’s fake permission dialogs | Approval gates for destructive actions, clear action logging, progressive trust escalation |
| ASI10: Rogue Agents | Capability-evolver plugin passed initial checks, then began exfiltrating data to external cloud storage | Version pinning, behavioral monitoring, runtime anomaly detection, kill switches |

Let’s go deep on the 3 categories where OpenClaw has the most documented evidence.

Risk 1: Tool Misuse & Exploitation (ASI02) — When Your Agent Has the Keys to Everything

OpenClaw’s tools can execute shell commands, read and write to the filesystem, send emails, manage calendar events, and interact with third-party APIs. Out of the box, none of these capabilities are restricted. Your agent doesn’t ask whether it should run rm -rf / — it asks whether the instructions told it to.

The OWASP Agentic Top 10 defines tool misuse as an agent using its available tools in ways that go beyond the intended scope — whether through prompt injection, goal hijacking, or simply following malicious instructions embedded in a configuration file. In OpenClaw’s case, the Summer Yue inbox wipe (10,271 upvotes on r/nottheonion) is the canonical example: the agent had unrestricted email access, received ambiguous instructions, and wiped an inbox. Not because it was compromised. Because it had permissions it shouldn’t have had and instructions it interpreted too broadly.

Giving your AI agent unrestricted tool access is like handing a new employee the master key to every office, the company credit card, and admin access to your email on their first day — then leaving for vacation.

What tool permission lockdown looks like:

- Apply the Principle of Least Agency. Give the agent the minimum autonomy, tool access, and credential scope the task requires. An email-drafting agent gets read and draft — not send, not delete, not manage-filters. Start from zero and add only what’s needed.

- Explicit allowlists. Define exactly which tools the agent can use, for which operations. An agent handling email should have read and draft permissions — not delete-all.

- Scope constraints per task. Shell command access for a reporting workflow doesn’t need to include sudo, network access, or write permissions outside a designated output directory.

- Human-in-the-loop for destructive actions. Any operation that deletes data, sends external communications, or modifies credentials should require explicit approval. The inbox wipe happened because no approval gate existed between the agent’s interpretation and the action.
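The allowlist and approval-gate items above can be sketched as a small check that runs outside the model, before any tool call executes. This is a minimal sketch under stated assumptions: `ALLOWLIST`, `DESTRUCTIVE`, `ToolDenied`, and `authorize` are hypothetical names for illustration, not OpenClaw configuration or any vendor’s API.

```python
from typing import Callable

# Hypothetical policy: tool name -> operations the agent may invoke.
# Anything absent from this mapping is denied.
ALLOWLIST = {
    "email": {"read", "draft"},
    "shell": {"run_report"},
}

# Operations that always need a human decision, even when allowlisted.
DESTRUCTIVE = {"delete", "send", "rotate_credentials"}


class ToolDenied(Exception):
    """Raised when a tool call falls outside policy."""


def authorize(tool: str, operation: str, approve: Callable[[str, str], bool]) -> None:
    """Check the allowlist first, then gate destructive operations on approval."""
    if operation not in ALLOWLIST.get(tool, set()):
        raise ToolDenied(f"{tool}.{operation} is not allowlisted")
    if operation in DESTRUCTIVE and not approve(tool, operation):
        raise ToolDenied(f"{tool}.{operation} was not approved by a human")


def deny_by_default(tool: str, operation: str) -> bool:
    """Stand-in approver; a real one would ask a person and log the answer."""
    return False


authorize("email", "draft", deny_by_default)  # allowed: listed and not destructive
```

The design choice that matters is where the check lives. It sits in the code path that executes the tool, so a prompt injection can change what the agent asks for but not what the gate permits.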

Microsoft’s Agent Governance Toolkit implements this as runtime policy enforcement — organizational policies that constrain what tools an agent can invoke, enforced at execution time rather than relying on the agent to self-restrict. The toolkit covers all 10 OWASP Agentic categories and features zero-trust identity, execution sandboxing, and reliability engineering for autonomous agents. It’s the clearest signal yet that “trust the agent to do the right thing” is not a governance strategy.

Risk 2: Supply Chain Compromise — ClawHavoc Was the Proof of Concept

1,184 confirmed malicious skills on ClawHub. Roughly 300,000 users exposed. The most-downloaded skill on the entire marketplace was malware. On r/cybersecurity, the post “The #1 most downloaded skill on OpenClaw marketplace was MALWARE” reached 811 upvotes and 65 comments. The top reply, at 181 upvotes: “People please just use curl and APIs for automation, stop inviting this vampire into your house.”

Malicious instructions lived not in executable code but in SKILL.md configuration files. The AI agent itself — a trusted process — became the infection vector. Standard antivirus tools scan for malicious binaries. They don’t audit what an AI agent does when it follows markdown instructions.

And the ecosystem’s own defenses aren’t catching it. On r/netsec, a post titled “We audited 1,620 OpenClaw skills. The ecosystem’s safety scanner labels 91% of confirmed threats ‘benign'” reached 75 upvotes. The scanner that’s supposed to filter malicious skills before they reach your deployment gives 9 out of 10 known threats a clean bill of health.

“Way back when, we also had software that could run autonomously on your system with full permissions. We called it ‘malware.'”

Source: top comment on r/sysadmin (2,465 upvotes)

When the sysadmin community is comparing your software’s plugin ecosystem to malware distribution at 2,000+ upvote levels, the supply chain problem isn’t theoretical.

What effective skill vetting requires:

- SKILL.md review before installation. Read the configuration file. Any instruction requesting system passwords, downloading external executables, or writing to SOUL.md or MEMORY.md is a hard stop.

- Publisher verification. Check GitHub account age, publish history, and commit patterns. ClawHavoc used accounts that were days old.

- Typosquatting detection. Compare skill names against legitimate tools. clawhub1, cllawhub, clawhubcli: the same typosquatting patterns that worked in npm and PyPI worked on ClawHub.

- Version pinning. Don’t auto-update skills. The capability-evolver plugin passed every check initially and then started exfiltrating data to Chinese cloud storage. Diffing updates against previous versions catches behavioral changes that scanners miss.
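Three of the checks above are mechanical enough to script as a first pass before a human reads the file. The sketch below is a rough illustration, not a replacement for review: the regex patterns are examples rather than a maintained ruleset, the 0.8 similarity threshold is an arbitrary assumption, and all function names are hypothetical.

```python
import hashlib
import re
from difflib import SequenceMatcher
from typing import Optional

# Illustrative patterns only; a real review would need a broader, maintained list.
RED_FLAGS = {
    "asks for a password": re.compile(r"\b(sudo|system|admin)\s+password\b", re.I),
    "pipes a download into a shell": re.compile(r"\b(curl|wget)\b.*\|\s*(ba)?sh\b", re.I),
    "references SOUL.md or MEMORY.md": re.compile(r"\b(SOUL|MEMORY)\.md\b"),
}


def review_skill_md(text: str) -> list:
    """Return the red-flag labels whose pattern appears in a SKILL.md body."""
    return [label for label, pattern in RED_FLAGS.items() if pattern.search(text)]


def looks_like_typosquat(name: str, known: list, threshold: float = 0.8) -> Optional[str]:
    """Return the legitimate name that `name` closely imitates, if any."""
    if name in known:
        return None  # an exact match is the real thing, not an imitation
    for legit in known:
        if SequenceMatcher(None, name.lower(), legit.lower()).ratio() >= threshold:
            return legit
    return None


def matches_pin(content: bytes, pinned_sha256: str) -> bool:
    """True only if the skill content is byte-identical to the pinned version."""
    return hashlib.sha256(content).hexdigest() == pinned_sha256
```

A hit from `review_skill_md` or `looks_like_typosquat` is a reason to stop and read, not a verdict. A clean result means only that these particular patterns didn’t fire.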

Risk 3: Unexpected Code Execution — 3 RCEs and Counting

3 remote code execution CVEs in OpenClaw. Not in third-party skills. Not in MCP servers. In OpenClaw itself.

- CVE-2026-19847 — one-click RCE via skill installer

- CVE-2026-14521 — RCE through crafted skill package (CVSS 7.1)

- CVE-2026-11203 — command injection in tool execution pipeline

The March 2026 wave added allowlist bypasses on top of the RCEs — meaning even users who’d configured tool restrictions could have those restrictions circumvented. The OWASP Agentic Top 10 defines unexpected code execution as situations where an agent or its tools execute code that wasn’t intended by the operator. For OpenClaw, “unexpected” undersells it: these are documented vulnerabilities where an attacker can achieve remote code execution on your host through the agent’s own functionality.

The difference between a web app RCE and an agent RCE is scope. A compromised web app typically has access to its own database and maybe a few internal services. A compromised OpenClaw agent has access to everything you’ve connected to it: email accounts, calendar systems, cloud APIs, filesystems, SSH keys. The blast radius of an agent RCE is the sum total of everything the agent can reach.

What containment looks like:

- Docker sandboxing with cap-drop=ALL. Drop every Linux capability and add back only what’s needed. This closes off most capability-based container escapes even if the RCE is exploited.

- Read-only root filesystem. CVE-2026-14521 and ClawHavoc both require write access to persist. A read-only filesystem doesn’t stop the initial exploit but stops it from persisting.

- no-new-privileges flag. Prevents processes inside the container from escalating via setuid binaries, even after capabilities are dropped. Belt and suspenders.

- No Docker socket mount. Mounting /var/run/docker.sock gives a compromised container full control over the host’s Docker daemon. There’s no legitimate reason for OpenClaw to access it.

- Gateway bound to 127.0.0.1. 135,000 OpenClaw instances were found exposed on the internet with the gateway listening on 0.0.0.0. Binding to localhost takes the gateway off the network, closing the direct remote path to all 3 RCEs.
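Put together, the containment list translates into a handful of standard `docker run` flags. The sketch below builds that argument list in Python so it can be reviewed or tested before anything runs. The image name `openclaw-gateway:pinned` and port 8080 are placeholders, not OpenClaw’s actual image or gateway port, and `hardened_run_args` is a hypothetical helper.

```python
import shlex
from typing import Optional


def hardened_run_args(image: str, port: int, volumes: Optional[list] = None) -> list:
    """Build a `docker run` argument list that applies the containment flags."""
    volumes = volumes or []
    for volume in volumes:
        if "docker.sock" in volume:
            raise ValueError("refusing to mount the Docker socket into the container")
    args = [
        "docker", "run", "--detach",
        "--cap-drop=ALL",                      # drop every Linux capability
        "--read-only",                         # read-only root filesystem
        "--security-opt=no-new-privileges",    # block setuid-based escalation
        f"--publish=127.0.0.1:{port}:{port}",  # gateway reachable from localhost only
    ]
    args += [f"--volume={volume}" for volume in volumes]
    return args + [image]


# Hypothetical image name and port, for illustration only.
print(shlex.join(hardened_run_args("openclaw-gateway:pinned", 8080)))
```

With a read-only root filesystem, a real deployment will probably also need an explicit writable volume or tmpfs for whatever working data the agent legitimately produces. That is a decision to make per workload, so it is left out here.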

The full Docker hardening configuration and verification commands are in the 14-point security audit checklist. Each check maps to a specific CVE or documented incident.

The Remaining Categories: Framework-Level Coverage

The OWASP Agentic Top 10 includes additional risk categories beyond the 3 we’ve covered in depth. ASI01 (Agent Goal Hijack) covers manipulating the agent’s objectives through prompt injection. ASI03 (Identity & Privilege Abuse) covers agents acquiring or misusing permissions beyond their designated scope. ASI09 (Human-Agent Trust Exploitation) covers attacks that leverage the trust relationship between users and their agents — like ClawHavoc’s fake permission dialogs. ASI10 (Rogue Agents) covers agents that deviate from intended behavior over time, exactly what the capability-evolver plugin did. Each category is formally defined with risk ratings and mitigation guidance in the full OWASP specification.

Rather than speculate on how each remaining category maps to OpenClaw (some have documented incidents, others are emergent risks), the right approach is defense-in-depth. Microsoft’s guidance calls for governance by design: runtime enforcement of organizational policies, coordinated management of models and providers, and deep visibility into execution paths. That’s not a single checklist item. It’s an architecture.

The 14-point audit checklist was designed before the OWASP Agentic Top 10 was published, but maps to multiple categories by design: Docker hardening covers unexpected code execution and privilege escalation, firewall configuration covers data leakage, credential management covers authentication attacks, and skill vetting covers supply chain compromise. Running the full audit takes about 45 minutes and scores your deployment across every dimension.

Microsoft’s Agent Governance Toolkit: What It Signals

Microsoft open-sourced its Agent Governance Toolkit on GitHub, explicitly covering all 10 OWASP Agentic Top 10 categories. The description is direct: “AI Agent Governance Toolkit — Policy enforcement, zero-trust identity, execution sandboxing, and reliability engineering for autonomous AI agents.”

4 capabilities worth noting:

- Runtime policy enforcement. Organizational constraints are enforced at execution time, not via agent self-governance. The agent can’t override them because they operate at a layer above the agent.

- Zero-trust identity. Every agent action is authenticated and authorized as if the agent is an untrusted entity — because it is. No implicit trust based on the agent being “internal.”

- Execution sandboxing. Code execution happens within defined boundaries, limiting blast radius when something goes wrong.

- Reliability engineering. Monitoring, observability, and failure handling for agents that run continuously without human oversight.

When Microsoft builds and open-sources a governance toolkit for autonomous agents, and RSAC 2026’s Innovation Sandbox features a company dedicated entirely to agent security governance, you’re not looking at theoretical risk. You’re looking at an industry that’s realized the problem is here, it’s measurable, and “trust the model” isn’t a mitigation strategy.

What This Means for Your Deployment

The OWASP Agentic Top 10 gives you a framework. The OpenClaw incident history gives you the evidence. Here’s the practical translation:

- Tool misuse is the default state. If you haven’t explicitly restricted what your agent can do, it can do everything. Define allowlists. Enforce them at the runtime level, not the prompt level.

- Supply chain risk is active, not hypothetical. 1,184 confirmed malicious skills. A safety scanner that misses 91% of threats. Vet every skill before installation. Pin versions. Don’t auto-update.

- Code execution containment isn’t optional. 3 RCEs in OpenClaw itself. Docker sandboxing with cap-drop=ALL, read-only filesystem, and localhost-only gateway binding are the minimum.

- Governance is an architecture, not a checkbox. Microsoft’s toolkit, RSAC Innovation Sandbox companies, and the OWASP framework all point the same direction: you need runtime enforcement, zero-trust identity, sandboxing, and monitoring working together. No single control is sufficient.

A lock on the front door doesn’t help if the windows are open, the garage is unlocked, and the back door has no deadbolt. Agent security works the same way — every layer has to hold.

For the complete layer-by-layer breakdown — Docker hardening, firewall rules, credential management, skill vetting, and monitoring — see the OpenClaw Security complete guide.

Frequently Asked Questions

What is the OWASP Agentic Top 10?

The OWASP Top 10 for Agentic Applications (2026) is a peer-reviewed framework released in December 2025 by the OWASP GenAI Security Project, identifying the 10 most critical security risks for autonomous AI agent systems. It was developed through collaboration with 100+ industry experts, researchers, and practitioners. Risk categories are assigned formal codes (ASI01 through ASI10) covering agent goal hijacking, tool misuse & exploitation, identity & privilege abuse, human-agent trust exploitation, rogue agents, and others. A core concept is the Principle of Least Agency — giving agents the minimum autonomy, tool access, and credential scope required for their task. It gives organizations a shared vocabulary for reasoning about agent security — similar to what the original OWASP Top 10 did for web application security in 2003.

How does the OWASP Agentic Top 10 apply to OpenClaw specifically?

Most risk categories in the OWASP Agentic Top 10 have documented evidence in the OpenClaw ecosystem. Tool misuse maps to the Summer Yue inbox wipe and unrestricted default permissions. Supply chain compromise maps to the ClawHavoc campaign (1,184 malicious skills, 300,000 exposed users). Unexpected code execution maps to 3 RCE CVEs in OpenClaw itself (CVE-2026-19847, CVE-2026-14521, CVE-2026-11203) plus allowlist bypasses disclosed in March 2026. Identity and privilege abuse maps to plaintext API tokens and the AMOS credential harvesting campaign.

What is Microsoft’s Agent Governance Toolkit?

Microsoft’s Agent Governance Toolkit is an open-source project on GitHub (microsoft/agent-governance-toolkit) that covers all 10 OWASP Agentic Top 10 categories. It provides runtime policy enforcement, zero-trust identity, execution sandboxing, and reliability engineering for autonomous AI agents. The toolkit enforces organizational constraints at the execution layer rather than relying on agents to self-govern, which aligns with Microsoft’s broader guidance that governance must be embedded by design from day one.

What are the 3 RCE CVEs in OpenClaw and are they patched?

The 3 remote code execution CVEs are CVE-2026-19847 (one-click RCE via skill installer), CVE-2026-14521 (RCE through crafted skill package, CVSS 7.1), and CVE-2026-11203 (command injection in the tool execution pipeline). Patches exist for all 3, but patching alone isn’t sufficient because new allowlist bypasses were disclosed in March 2026. Docker sandboxing with cap-drop=ALL, read-only filesystem, and binding the gateway to 127.0.0.1 contain the blast radius even if new vulnerabilities are discovered. For current patch status and verification commands, see the 14-point audit checklist.

How does supply chain compromise work in AI agent ecosystems?

In traditional software, supply chain attacks typically involve malicious code in dependencies (npm packages, PyPI libraries). In AI agent ecosystems, the attack vector is different: malicious instructions embedded in configuration files that the agent reads and follows. The ClawHavoc campaign demonstrated this by placing malicious instructions in SKILL.md files rather than in executable code. The AI agent itself became the infection vector by presenting fake permission dialogs that users trusted. Standard antivirus tools miss these attacks because they scan for malicious binaries, not malicious markdown instructions that an AI agent will execute.

Is the OWASP Agentic Top 10 relevant if I’m not running OpenClaw?

Yes. The OWASP Agentic Top 10 applies to any autonomous AI agent system, not just OpenClaw. The risk categories — tool misuse, supply chain compromise, unexpected code execution, and others — are architectural patterns that appear in any system where an AI agent has access to tools, third-party plugins, and production resources. OpenClaw happens to have the most documented incident history, making it the clearest illustration of each category. But the framework applies equally to custom agent frameworks, enterprise agent platforms, and any other system where an AI acts autonomously with real-world access.

Where should I start if my OpenClaw deployment has no security hardening?

Start with the 4 Docker flags that take 5 minutes and eliminate an entire class of attacks: non-root user (UID 1000+), cap-drop=ALL, no-new-privileges, and read-only filesystem. Then bind the gateway to 127.0.0.1 instead of 0.0.0.0. Those 5 changes address the unexpected code execution category and reduce blast radius for every other category. After that, run the full 14-point audit checklist (roughly 45 minutes) to score your deployment across all OWASP-aligned dimensions. The checklist includes verification commands for each item so you can confirm each fix is working.
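To confirm those 5 changes took effect, you can check a running container’s configuration rather than trusting the command you think you ran. The sketch below reads one entry of `docker inspect` JSON output and reports what’s missing. It relies on the standard `Config.User`, `HostConfig.CapDrop`, `HostConfig.SecurityOpt`, `HostConfig.ReadonlyRootfs`, and `HostConfig.PortBindings` fields. The `audit_container` function is a hypothetical helper, it checks only for a non-root user rather than the UID 1000+ threshold, and it doesn’t cover host networking.

```python
def audit_container(inspect: dict) -> list:
    """Return the hardening checks a container fails, given one `docker inspect` entry."""
    host = inspect.get("HostConfig", {})
    failures = []

    user = inspect.get("Config", {}).get("User", "")
    if user in ("", "0", "root") or user.startswith(("0:", "root:")):
        failures.append("runs as root")

    if "ALL" not in [cap.upper() for cap in (host.get("CapDrop") or [])]:
        failures.append("capabilities not dropped")

    accepted = {"no-new-privileges", "no-new-privileges:true", "no-new-privileges=true"}
    if not accepted.intersection(host.get("SecurityOpt") or []):
        failures.append("no-new-privileges not set")

    if not host.get("ReadonlyRootfs", False):
        failures.append("root filesystem is writable")

    # An empty HostIp means the port is published on every interface.
    bindings = [b for group in (host.get("PortBindings") or {}).values() for b in (group or [])]
    if any(b.get("HostIp", "") not in ("127.0.0.1", "::1") for b in bindings):
        failures.append("port published beyond localhost")

    return failures
```

Feed it the parsed output of `docker inspect <container>` (a JSON list, so pass the first element). An empty result means these five checks passed, not that the deployment is secure.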