Tag: Ollama

-

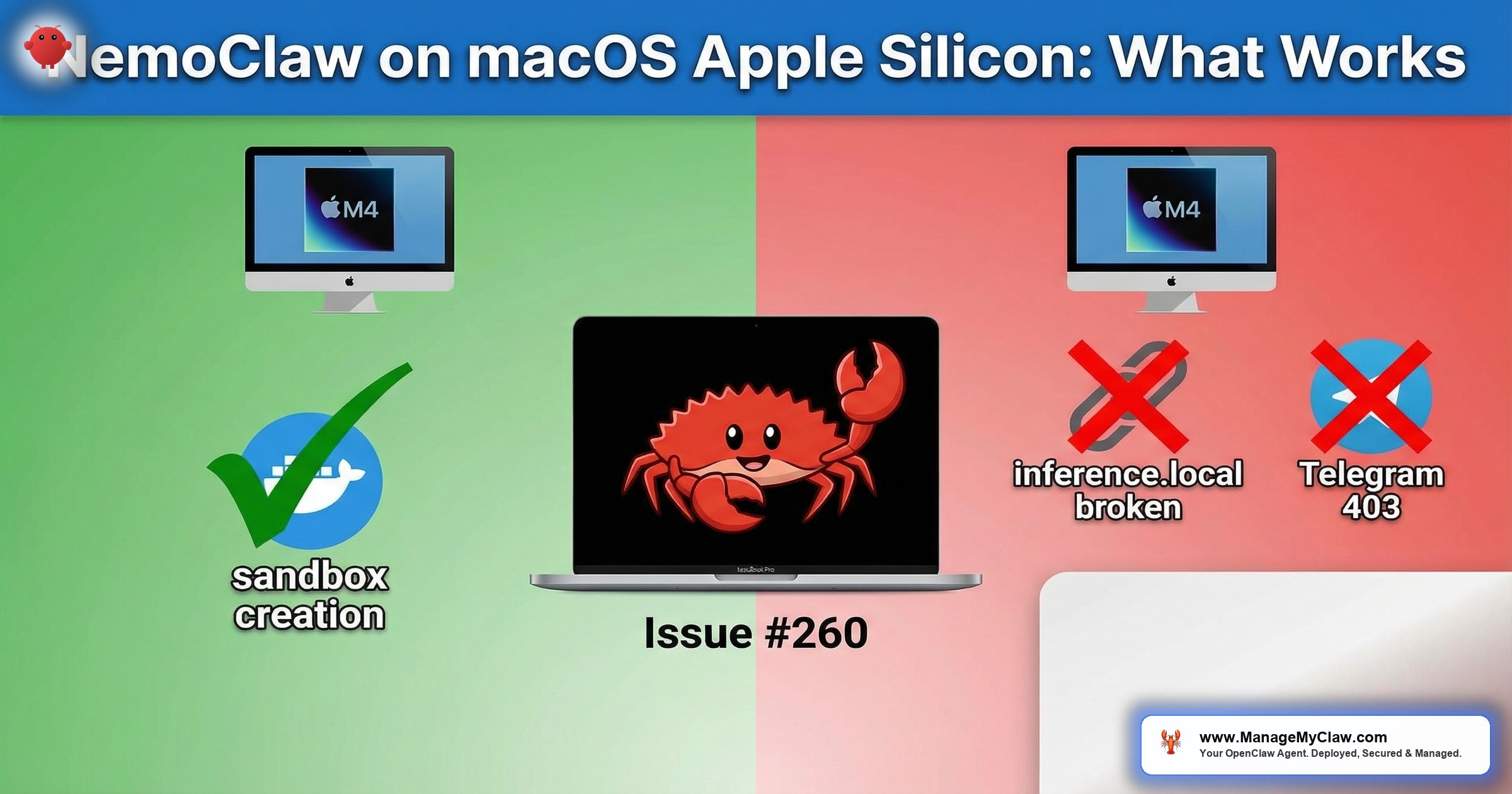

NemoClaw on macOS/Apple Silicon: What Works, What Doesn’t

“NemoClaw on my M3 MacBook Pro: Docker Desktop works, the sandbox starts, policies apply. Then I tried local inference and…

-

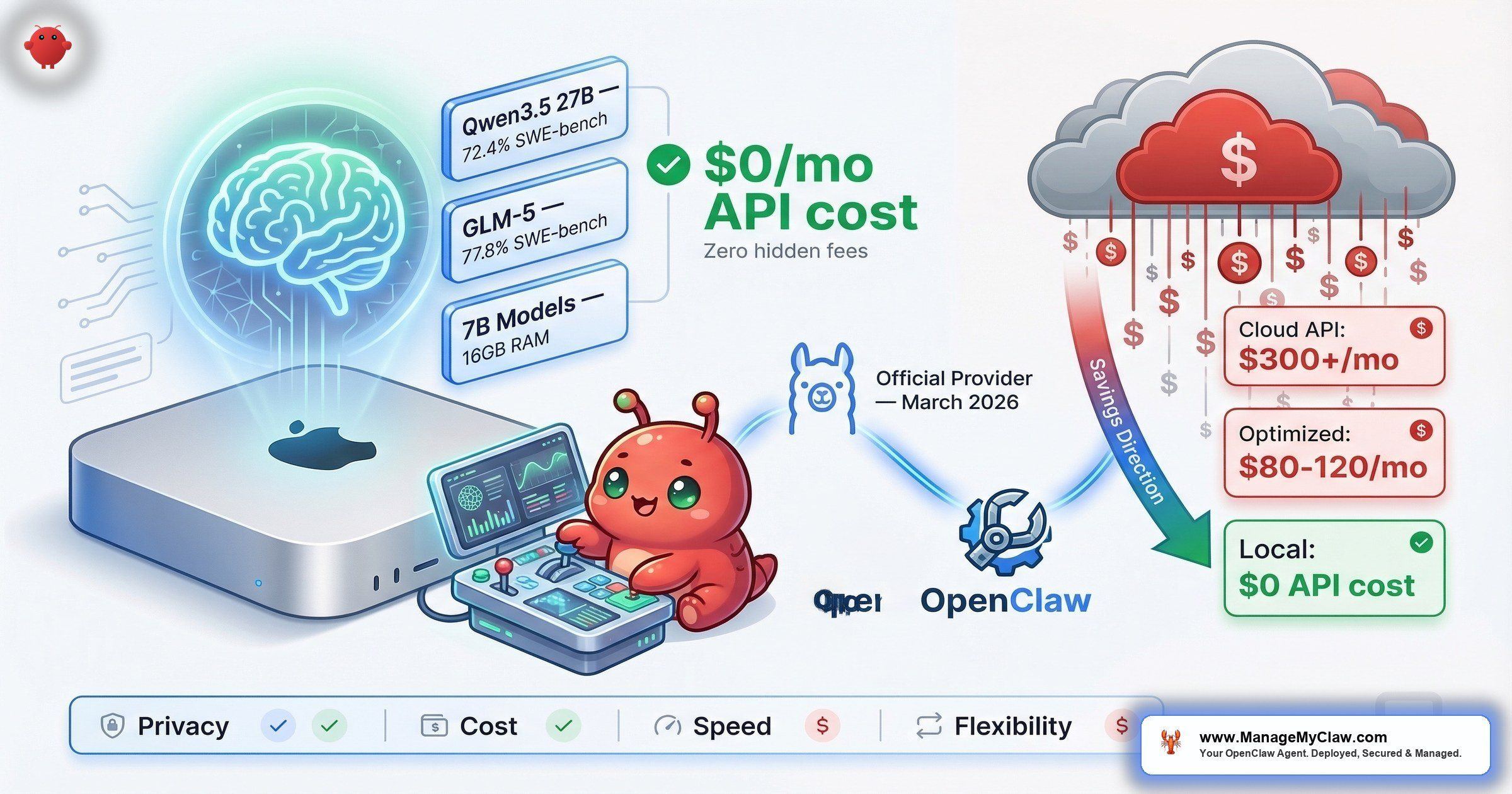

OpenClaw + Ollama: Running Your AI Agent on Local LLMs for Free

$300+/month in API fees. Then Ollama became an official OpenClaw provider — and the cheapest tier of model routing became…