“Every company in the world needs an OpenClaw strategy.”

— Jensen Huang, CEO of NVIDIA, GTC 2026 Keynote • March 16, 2026

Gartner projects that 40% of enterprise applications will include AI agents by the end of 2026. Dark Reading reports that 48% of cybersecurity professionals already rank agentic AI as their number-one attack vector. NVIDIA’s answer to that collision — autonomous AI agents scaling faster than the security infrastructure around them — arrived on March 16, 2026, at GTC.

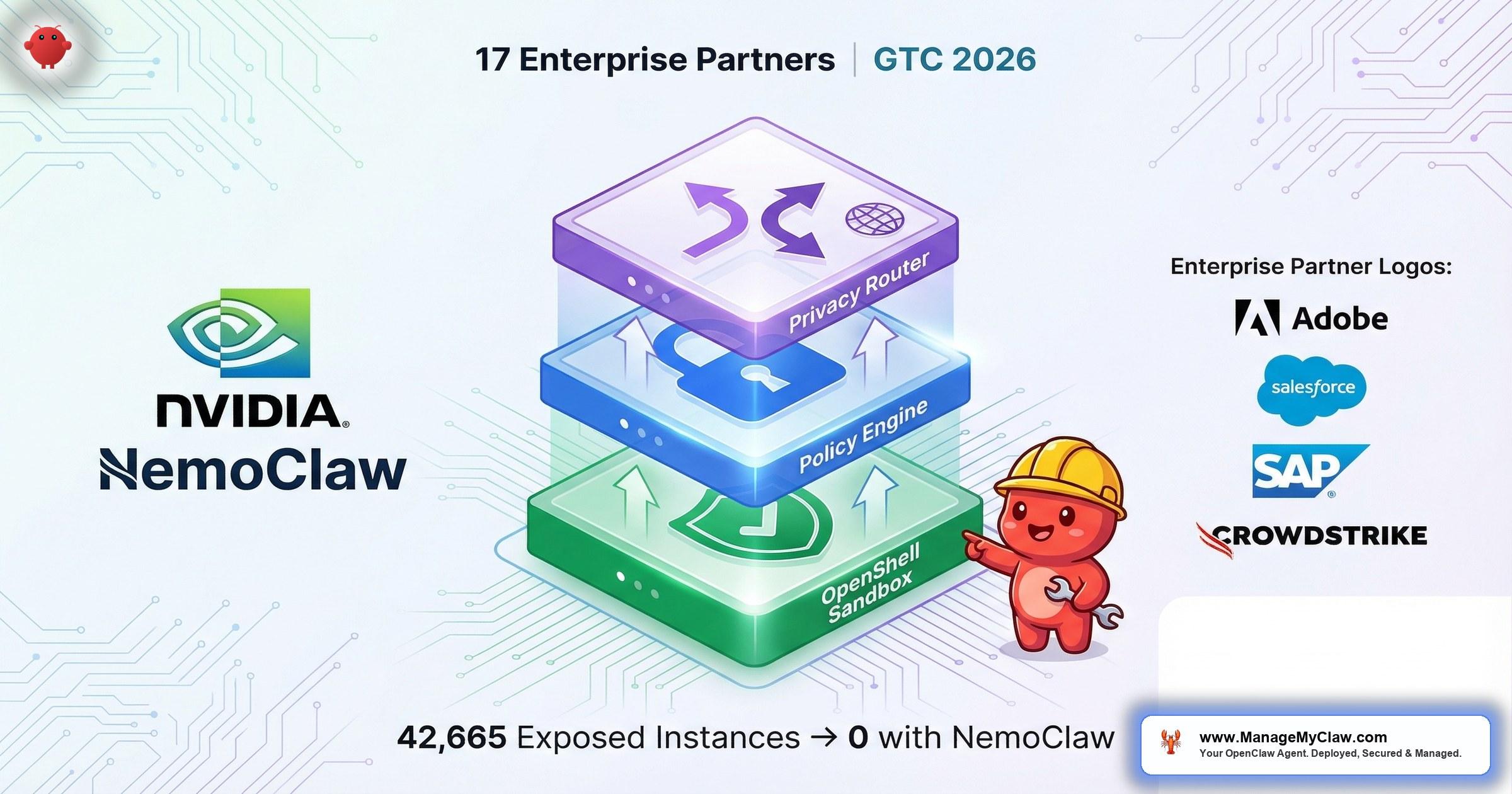

The product is called NemoClaw. It is open-source (Apache 2.0), backed by 17 launch partners including Adobe, Salesforce, SAP, and CrowdStrike, and it adds three security controls that OpenClaw never had: a kernel-level sandbox, an out-of-process policy engine, and a privacy router that keeps sensitive data off cloud APIs.

If you are a VP of Engineering, CTO, or CISO who heard the GTC announcement and wants to understand what NemoClaw actually does — not marketing language, but architecture — this is the post to read. We will walk through each of the three controls, explain why they matter for enterprise governance, map them to the OWASP Agentic Security Initiative, and be transparent about what NemoClaw does not yet solve.

If your board brought up “NVIDIA’s AI agent security thing” in a meeting this week, you are not alone. Half the CISOs we talk to got the same question.

Why NVIDIA Built a Security Layer for AI Agents

OpenClaw is the fastest-growing open-source AI agent framework in history. It is also, by default, one of the most exposed.

Security researcher Maor Dayan documented 42,665 publicly exposed OpenClaw instances. Of those, 93.4% had an authentication bypass, meaning anyone on the internet could connect, issue commands, and exfiltrate data. These were not test environments. They were production deployments running real workflows with real credentials.

The enterprise urgency is not hypothetical. CrowdStrike’s 2026 Global Threat Report documented an 89% year-over-year increase in AI-enabled attacks. The OWASP Agentic Security Initiative (ASI) published its Top 10 risks specifically for autonomous AI agents, identifying threats from excessive agency (ASI-01) to insecure output handling (ASI-05) that vanilla OpenClaw does nothing to mitigate.

When 48% of your CISO peers rank agentic AI as the top attack vector, the question is no longer “should we secure our AI agents?” It is “how fast can we get controls in place before the next board meeting?”

Jensen Huang himself called OpenClaw “the next ChatGPT” at GTC — a framing that signals NVIDIA expects agent-framework adoption to follow the same explosive trajectory as conversational AI (Storyboard18). That comparison is not hyperbole from a product manager; it is a market-sizing statement from the CEO of the company supplying the compute infrastructure behind both movements.

The enterprise adoption timeline is tighter than most organizations realize. For large enterprises targeting meaningful agent-driven revenue in Q1 2027, pilots need to be underway now — March 2026. Mid-market organizations have slightly more runway, with pilots feasible as late as Q4 2026, but the governance groundwork should begin immediately (Development Corporate analysis). The challenge, however, is not purely technical: enterprise adoption requires “clearing compliance and governance reviews that both platforms have not yet faced at scale” and “change management infrastructure that neither company has deployed” (el-balad.com industry analysis). Security and governance are the adoption bottlenecks, not capability.

NVIDIA’s response was not a whitepaper or a best-practices guide. It was a production security stack, announced at the biggest GPU conference on Earth, with Fortune 500 companies already signed on as launch partners. That is a market-level signal about where enterprise AI governance is heading.

“NemoClaw is designed to solve the hardest problem in agentic AI: how do you let an autonomous agent take actions on your behalf without giving it the keys to everything?”

— NVIDIA NemoClaw Technical Documentation, March 2026

What NemoClaw Actually Is

NemoClaw is not a single product. It is a security stack composed of three layers that wrap around OpenClaw at the operating system level. Understanding this distinction matters, because NemoClaw does not replace OpenClaw — it governs it.

| Layer | Component | Function |
|---|---|---|
| Runtime Isolation | OpenShell (kernel-level sandbox) | Deny-by-default process sandboxing with Landlock filesystem, seccomp filters, and network namespaces |
| Governance | Out-of-process policy engine | YAML-based policies evaluated at 4 levels: binary, destination, method, path |
| Data Privacy | Privacy router | Routes sensitive data to local Nemotron models, complex reasoning to cloud APIs |

The critical architectural decision: all three controls operate outside the agent’s process. This is not a system prompt telling the agent to behave. This is OS-level enforcement that the agent cannot modify, override, or circumvent — even if compromised.

Under the hood, OpenShell is distributed as a single Rust binary requiring Linux kernel 5.13 or later for Landlock LSM support (DeepWiki). The runtime architecture uses a K3s pod inside a Docker container — lightweight Kubernetes orchestration that is production-ready without the overhead of a full cluster (earezki.com Dev Journal). NVIDIA positions OpenShell as “Layer 0 Security for Agentic DevOps” — the foundational layer beneath all other agent security controls, analogous to how a hypervisor sits beneath all guest operating systems.

NVIDIA’s own documentation describes OpenShell as “alpha software — single-player mode” with a scope of “proof-of-life: one developer, one environment, one gateway.” Multi-tenant enterprise deployment is a stated future goal, not current capability. Evaluators should plan pilots accordingly.

Think of it this way. Most AI agent security today is the equivalent of posting a compliance policy on the company intranet and trusting every employee to follow it. NemoClaw is the equivalent of badge-access doors, network segmentation, and DLP — controls that enforce policy regardless of whether the individual complies voluntarily.

NemoClaw is Apache 2.0 licensed. While the privacy router performs best with NVIDIA GPUs for local Nemotron inference, the sandbox and policy engine are hardware-agnostic — they run on NVIDIA, AMD, Intel, and CPU-only configurations. Minimum requirements: 4 vCPU, 8 GB RAM, Linux. Windows WSL2 is experimental. macOS has partial support (no local inference).

OpenShell: Kernel-Level Sandboxing

OpenShell is the foundation of the NemoClaw security stack. It provides process-level sandboxing that operates at the kernel level — not at the application level, not at the container level, but at the boundary between user space and the operating system itself.

The sandbox follows a deny-by-default model. When an AI agent starts inside OpenShell, it has zero permissions. Every capability — filesystem access, network egress, binary execution, inter-process communication — must be explicitly granted through the policy engine. An agent cannot read a file, open a network connection, or execute a binary unless a policy rule permits it.

This is the architectural pattern behind Google’s gVisor and AWS’s Firecracker, adapted specifically for autonomous AI agents. The enforcement mechanisms include:

- Landlock LSM — Linux Security Module that restricts filesystem access at the kernel level, regardless of the process’s user permissions

- Seccomp-BPF filters — system call filtering that blocks dangerous syscalls (e.g., `ptrace`, `mount`, `reboot`) before they reach the kernel

- Network namespaces — complete network isolation where each sandbox has its own network stack, with egress controlled by policy rules
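The deny-by-default model described above can be illustrated with a small sketch. This is a toy model, not OpenShell's API: the `Capability` and `Sandbox` classes and the capability names (`exec`, `net_egress`) are hypothetical, chosen only to show that a fresh sandbox permits nothing and that each permission must be granted individually.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Capability:
    """One grantable permission, e.g. ("exec", "/usr/bin/python3")."""
    kind: str    # hypothetical categories: fs_read, net_egress, exec, ipc
    target: str


@dataclass
class Sandbox:
    """Toy model of a deny-by-default sandbox (not OpenShell's real interface)."""
    granted: set = field(default_factory=set)

    def grant(self, kind: str, target: str) -> None:
        # In the model described in the post, grants come from policy, not the agent.
        self.granted.add(Capability(kind, target))

    def is_allowed(self, kind: str, target: str) -> bool:
        # No wildcard and no fallback: anything not explicitly granted is denied.
        return Capability(kind, target) in self.granted


sandbox = Sandbox()
fresh_denied = not sandbox.is_allowed("exec", "/usr/bin/python3")  # nothing granted yet
sandbox.grant("exec", "/usr/bin/python3")
```

The point of the design is that the absence of a rule is itself a decision: forgetting to write a rule fails closed rather than open.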

Static vs. Dynamic Policy Enforcement

OpenShell distinguishes between two policy classes. Static policies — filesystem access and process execution — are locked at sandbox creation and enforced by Landlock LSM and seccomp. Once the sandbox is running, these rules cannot be modified. Dynamic policies — network access — are hot-reloadable at runtime via an HTTP CONNECT proxy, allowing security teams to adjust network egress rules without restarting the agent session (NVIDIA documentation).
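One way to picture the static/dynamic split is as an immutable record next to a replaceable one. The sketch below is an assumption-laden illustration in plain Python: `StaticPolicy`, `SandboxPolicy`, and `reload_network` are hypothetical names, and a frozen dataclass stands in for rules that the kernel locks at sandbox creation.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class StaticPolicy:
    """Filesystem and process rules, fixed when the sandbox is created."""
    readable_paths: tuple
    allowed_binaries: tuple


class SandboxPolicy:
    """Pairs locked static rules with network rules that can be swapped live."""

    def __init__(self, static: StaticPolicy, egress_hosts: set):
        self._static = static
        self._egress_hosts = frozenset(egress_hosts)

    @property
    def static(self) -> StaticPolicy:
        return self._static

    def reload_network(self, egress_hosts: set) -> None:
        # Dynamic class: replaced in place, no sandbox restart in this model.
        self._egress_hosts = frozenset(egress_hosts)

    def may_connect(self, host: str) -> bool:
        return host in self._egress_hosts


policy = SandboxPolicy(
    StaticPolicy(readable_paths=("/app/workspace",), allowed_binaries=("python3",)),
    egress_hosts={"api.openai.com"},
)
policy.reload_network({"api.openai.com", "github.com"})  # hot reload succeeds

try:
    policy.static.allowed_binaries = ("python3", "curl")  # attempt to widen static rules
    static_was_mutable = True
except FrozenInstanceError:
    static_was_mutable = False
```

In this sketch, changing which binaries may run means creating a new sandbox, while adding an egress host is a runtime operation.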

Network interception uses an OPA (Open Policy Agent) policy evaluation system behind the HTTP CONNECT proxy, enabling granular L7 enforcement: per-binary (e.g., git vs. curl), per-endpoint, and per-method control over every agent network action (MarkTechPost, earezki.com Dev Journal). Each agent session receives its own ephemeral sandbox — only directories explicitly mounted via policy are visible to the agent. Everything else, including the host filesystem, is invisible by default (NVIDIA documentation).

Running K3s inside a Docker container provides lightweight Kubernetes orchestration without a full cluster, but it introduces tradeoffs that enterprise evaluators should understand: additional startup latency, higher memory overhead compared to a bare container, and increased debugging complexity when issues span the K3s, Docker, and host kernel boundaries. For organizations with existing container-based sandboxing, the added complexity should be weighed against the agent-specific security controls that OpenShell provides (NVIDIA community feedback).

Why This Matters for Enterprise

Consider the OWASP ASI-01 (Excessive Agency) risk: an AI agent takes actions beyond its intended scope. With vanilla OpenClaw, the agent runs with whatever permissions its host process has. If that process runs as root — which happens more often than any CISO wants to admit — the agent inherits root access to the entire system.

OpenShell is designed to close off that escalation path. The agent’s permissions are defined by policy, not by its host process. Even if the agent is manipulated through prompt injection or a compromised skill, the kernel-level sandbox is built to prevent escalation. The agent can break things inside its sandbox; that is expected and contained. It should not be able to break out, provided the kernel and the policies are configured correctly.

If your security team has ever rejected an AI agent deployment because “it runs with too many permissions,” OpenShell is the technical control that changes the conversation from “no” to “yes, with these constraints.”

The Out-of-Process Policy Engine

The second control is a YAML-based policy engine. On the surface, that sounds unremarkable — plenty of tools use YAML for configuration. What makes NemoClaw’s policy engine architecturally significant is where it runs: out of process.

Most AI agent guardrails today operate inside the agent’s own runtime. The agent’s system prompt says “don’t access these files.” The agent’s internal safety layer checks outputs before they execute. The problem: if the agent is compromised — through prompt injection, a malicious skill, or an adversarial input — those internal guardrails are compromised too. A compromised agent can modify its own system prompt. It cannot modify a policy engine running in a separate process with separate memory space.

How the 4-Level Evaluation Works

Every action an agent attempts passes through four evaluation layers before it executes:

- Binary level — Is this executable allowed? The agent can run `python3` but not `curl` unless the policy explicitly permits it. Unsigned or unreviewed binaries are blocked by default.

- Destination level — Where is this action targeting? The agent can write to `/app/workspace/` but not `/etc/`. It can connect to `api.openai.com` but not `pastebin.com`.

- Method level — What operation is being attempted? Read vs. write. GET vs. POST. List vs. delete. Granular enough to allow an agent to read a database but prevent it from dropping tables.

- Path level — Which specific resource path is being accessed? The agent can read `/app/data/reports/` but not `/app/data/credentials/`.
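The four levels above can be read as an ordered chain of checks where the first refusal wins. The following is a minimal sketch under stated assumptions: the `POLICY` dictionary shape, the `Action` fields, and the `evaluate` function are hypothetical and do not reflect NemoClaw's actual YAML schema or engine; only the ordering (binary, destination, method, path) comes from the description above.

```python
from dataclasses import dataclass
import posixpath


@dataclass(frozen=True)
class Action:
    binary: str        # executable attempting the action
    destination: str   # host or filesystem root being targeted
    method: str        # operation, e.g. "read", "write", "GET", "POST"
    path: str          # specific resource path


# Hypothetical policy shape, for illustration only.
POLICY = {
    "binaries": {"python3"},
    "destinations": {"/app", "api.openai.com"},
    "methods": {"/app": {"read", "write"}, "api.openai.com": {"POST"}},
    "paths": {
        "/app": ["/app/workspace", "/app/data/reports"],
        "api.openai.com": ["/v1"],
    },
}


def _under(path: str, prefix: str) -> bool:
    # Normalize first so "/app/data/reports/../credentials" cannot slip through.
    path = posixpath.normpath(path)
    return path == prefix or path.startswith(prefix + "/")


def evaluate(action: Action, policy: dict = POLICY) -> str:
    """Return "allow", or "deny:<level>" naming the first level that refused."""
    if action.binary not in policy["binaries"]:
        return "deny:binary"
    if action.destination not in policy["destinations"]:
        return "deny:destination"
    if action.method not in policy["methods"].get(action.destination, set()):
        return "deny:method"
    prefixes = policy["paths"].get(action.destination, [])
    if not any(_under(action.path, p) for p in prefixes):
        return "deny:path"
    return "allow"
```

Returning the level that refused, rather than a bare boolean, is a design choice that makes each denial useful as an audit record.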

The out-of-process architecture means your policy definitions survive agent compromise. In a compliance audit, you can demonstrate that governance controls are structurally independent of the system being governed — the same separation-of-duties principle that SOC 2 and ISO 27001 require for human access controls, applied to AI agents.

Policies are declarative YAML spanning four enforcement domains: filesystem access, network connections, process execution, and inference routing (DEV Community). The model is deny-by-default: each agent starts with zero permissions, and every capability must be explicitly approved in policy (Particula.tech). Policies are version-controlled in Git and can be deployed per agent, per department, or per workflow. Network policy updates apply live at sandbox scope without restarting the agent, while filesystem and process rules stay fixed for the life of the sandbox. For organizations with 10, 50, or 100 agents running simultaneously, this is the difference between ad-hoc permission management and actual governance at scale.

The out-of-process architecture has a critical security property: even a fully compromised agent with arbitrary code execution within its sandbox cannot modify, disable, or bypass its own policies. The policy engine runs in a separate process with separate memory space — enforcement happens before any agent action reaches the operating system. This is not a design aspiration; it is a structural guarantee of the out-of-process model (NVIDIA documentation).

Generic container isolation (Docker, Podman) was designed for application workloads, not autonomous agents. NemoClaw’s policy engine adds agent-specific controls that containers lack: 4-domain declarative policies, per-binary execution control, L7 network interception with OPA evaluation, and inference routing governance. As Katonic.ai’s analysis frames it, this is the difference between isolating a process and governing an autonomous actor (Katonic.ai).

This directly addresses OWASP ASI-03 (Insufficient Access Control). The policy engine does not rely on the agent to self-enforce access rules. It intercepts actions at the OS level before they execute, logs every decision, and creates the audit trail that your compliance team needs.

The Privacy Router: Where Your Data Actually Goes

The third control addresses a problem that every enterprise deploying AI agents faces: data residency. When your AI agent processes a customer contract, a financial report, or an employee record, where does that data go? With most OpenClaw deployments, the answer is “to whichever cloud LLM API you configured” — and your organization may not have visibility into how that provider handles the data downstream.

NemoClaw’s privacy router adds a classification and routing layer between the agent and the model. At the transport level, the HTTP CONNECT proxy mediates every inference call, routing each request based on user-defined privacy policies (NVIDIA GitHub). The system creates a versioned blueprint — a sandboxed environment where every network request, file access, and inference call is governed by declarative policy (Second Talent implementation guide). The architecture is straightforward:

- Sensitive data stays on your infrastructure. The privacy router sends it to local Nemotron models running on-premise or on your own GPU hardware. The data never leaves your network boundary.

- Complex reasoning tasks that do not involve sensitive data route to cloud frontier models (Claude, GPT-4, Gemini) for maximum capability.

- PII detection classifies data before routing, stripping personally identifiable information from any request that goes to a cloud API.
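The routing rules in the list above can be sketched in a few lines. Everything here is an assumption made for illustration: the `route` and `redact` functions, the `sensitive` flag (standing in for whatever classification a real policy produces), and the two regular expressions, which are far too narrow to count as real PII detection.

```python
import re

# Illustrative patterns only; real PII detection needs far more than two regexes.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched pattern with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label.upper()}]", text)
    return text


def route(prompt: str, sensitive: bool) -> tuple:
    """Return (backend, payload) for one inference request.

    `sensitive` is a stand-in for a policy-driven classification step.
    """
    if sensitive:
        # Sensitive requests stay local and are passed through unmodified.
        return ("local", prompt)
    # Everything else may go to a cloud model, with PII stripped first.
    return ("cloud", redact(prompt))
```

For example, `route("Email jane@example.com about SSN 123-45-6789", sensitive=False)` yields a cloud-bound payload with both identifiers replaced by labels, while the same prompt marked sensitive is returned unchanged for local inference.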

The privacy router has already drawn significant analyst attention. DeepLearning.ai’s The Batch noted that “NVIDIA’s enterprise-focused NemoClaw gives OpenClaw a security boost,” positioning the privacy router as the component that makes cloud-local hybrid inference practical for regulated industries. Quartz framed the broader story as “NVIDIA NemoClaw brings OpenClaw agents to the enterprise” — with the privacy router as the enabler that enterprise compliance teams were waiting for.

hostednemoclaw.com already exists as a managed hosting option for NemoClaw deployments. This is an early indicator that the managed services market around NemoClaw is forming — organizations that cannot or prefer not to self-host will have commercial alternatives. Enterprise evaluators should factor this into build-vs-buy decisions.

The Partner Ecosystem

NVIDIA did not launch NemoClaw alone. The 17 launch partners represent the enterprise software landscape that will define how AI agents integrate into production workflows:

| Category | Partners | Significance |
|---|---|---|
| Enterprise Software | Adobe, Atlassian, SAP, Salesforce, ServiceNow, Dassault Systèmes | Where enterprise workflows live |
| Security & Infrastructure | CrowdStrike, Cisco, Red Hat, Cohesity | Where enterprise security is enforced |
| Industry-Specific | IQVIA, Amdocs, Cadence, Siemens, Synopsys | Healthcare, telecom, semiconductor, manufacturing |
| System Integrators | Accenture, Wipro, Infosys | Building agent templates for enterprise deployment |

The CrowdStrike partnership deserves specific attention. CrowdStrike published a Secure-by-Design Blueprint that integrates its Falcon platform directly into OpenShell. This means Falcon’s endpoint detection, identity protection, and AI-based threat detection (AIDR) can monitor agent behavior inside the NemoClaw sandbox — the same security telemetry your SOC already uses, extended to cover AI agents.

Two additional integrations announced at GTC extend NemoClaw’s enterprise reach. Box integration allows agents to use the Box file system as their primary working environment — bringing enterprise document management, access controls, and audit trails directly into the agent’s sandbox (NVIDIA newsroom). Trend Micro’s TrendAI Vision One integration, announced the same day, adds governance, risk visibility, and runtime enforcement into the agent lifecycle, providing a second enterprise security vendor (alongside CrowdStrike) with native NemoClaw support (Trend Micro).

The open-source community has also taken notice. AwesomeAgents.ai characterized the release as “NVIDIA Open-Sources the Sandbox AI Agents Should Have Had” — framing NemoClaw not as a competitive product but as infrastructure that the entire agent ecosystem was missing.

For CISOs already running CrowdStrike: NemoClaw does not require ripping out your existing security stack and replacing it. It integrates with what you already have. That is an architectural decision that accelerates procurement by months.

How NemoClaw Maps to OWASP ASI Risks

The OWASP Agentic Security Initiative provides the most rigorous framework for evaluating AI agent security controls. Here is how NemoClaw’s three controls map to the ASI Top 10:

| OWASP ASI Risk | NemoClaw Control | Coverage |
|---|---|---|
| ASI-01: Excessive Agency | OpenShell kernel sandbox (deny-by-default) | Full — agent cannot exceed granted permissions |
| ASI-02: Prompt Injection | Out-of-process policy engine | Partial — prevents action execution, does not prevent injection itself |
| ASI-03: Insufficient Access Control | 4-level policy evaluation | Full — binary, destination, method, path enforcement |
| ASI-04: Insecure Data Handling | Privacy router + PII detection | Full — data classification before routing |
| ASI-05: Insecure Output Handling | Policy engine (method-level) | Partial — blocks disallowed actions, does not validate output content |
| ASI-06: Inadequate Sandboxing | OpenShell kernel-level isolation | Full — Landlock + seccomp + network namespaces |
| ASI-07: Supply Chain | Binary-level policy | Partial — blocks unsigned binaries, does not audit skill code |

NemoClaw provides full coverage for 4 of the 7 ASI risks mapped above and partial mitigation for the other 3. No single tool covers all 10, and OWASP does not expect one to. But this is the most comprehensive agent-specific security stack available in open source today.
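The coverage arithmetic can be checked directly against the table. This short sketch transcribes the table's Coverage column into a dictionary (the variable names are ours) and counts the results, which also makes explicit how many of the ten ASI risks the table does not map at all.

```python
from collections import Counter

# Coverage column from the mapping table, keyed by ASI identifier.
COVERAGE = {
    "ASI-01": "full",
    "ASI-02": "partial",
    "ASI-03": "full",
    "ASI-04": "full",
    "ASI-05": "partial",
    "ASI-06": "full",
    "ASI-07": "partial",
}

tally = Counter(COVERAGE.values())   # counts of "full" and "partial"
unmapped = 10 - len(COVERAGE)        # Top 10 risks with no row in the table
```

The tally gives four full and three partial, and leaves three of the Top 10 without a mapped control in this analysis.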

What NemoClaw Does Not Solve (Yet)

Enterprise decision-makers deserve a clear-eyed view, not vendor hype. Here is what NemoClaw does not address as of March 2026:

- Alpha software, single-player mode — NVIDIA’s own documentation describes the current scope as “one developer, one environment, one gateway.” This is proof-of-life, not production infrastructure. Multi-tenant enterprise deployment is a stated future goal, not current capability (NVIDIA documentation).

- No published performance benchmarks — Latency impact, throughput overhead, and resource consumption under load have not been publicly benchmarked. Enterprise evaluators should run their own performance testing during pilots.

- K3s-inside-Docker adds overhead — The architectural choice of running K3s inside Docker introduces startup latency and memory overhead compared to simpler container-based sandboxing. For latency-sensitive workloads, this tradeoff should be measured against direct container approaches.

- Sandbox isolation is not bulletproof — Community reports indicate early bypass attempts on consumer hardware configurations. As with any sandbox, the security model depends on correct kernel configuration and policy authoring.

- Linux only — Full functionality requires Linux kernel 5.13 or later. Windows WSL2 is experimental. macOS offers partial support with no local inference capability.

- Privacy router requires GPU for full value — The privacy router’s local inference capability depends on GPU hardware. A Dell Pro Max GB10 (DGX Spark) starts at $4,756.84. CPU-only configurations can run the sandbox and policy engine but not local model inference.

- No built-in compliance documentation — NemoClaw provides the technical controls but does not generate SOC 2 evidence packages, HIPAA mapping documents, or audit-ready reports. That layer must be built on top.

- Configuration complexity — Installing NemoClaw is one command: `curl -fsSL https://www.nvidia.com/nemoclaw.sh | bash`. Configuring it for production — writing YAML policies, tuning privacy router tables, integrating with existing SIEM, building multi-agent governance — takes weeks of specialized work.

None of these limitations invalidate NemoClaw. They define the gap between downloading open-source software and running it in a production enterprise environment — a gap that exists with every infrastructure tool from Kubernetes to Terraform. The honest assessment is that NemoClaw solves a real and urgent problem, but it solves it today at alpha maturity with single-developer scope. Enterprise planning should account for both the capability and the maturity level.

What Your Organization Needs to Run NemoClaw

Before your team evaluates NemoClaw, here is the infrastructure baseline:

Minimum Requirements

- Operating system: Linux (Ubuntu, RHEL, or equivalent)

- Compute: 4 vCPU, 8 GB RAM minimum

- Hardware: Runs on NVIDIA, AMD, Intel, or CPU-only — hardware agnostic for sandbox and policy engine

- GPU (optional): Required only for privacy router local inference with Nemotron models

- Platform support: Linux full, Windows WSL2 experimental, macOS partial

| Deployment Scenario | Hardware | NemoClaw Capability |
|---|---|---|
| Full stack (sandbox + policy + privacy) | Linux server with NVIDIA GPU or Dell Pro Max GB10 ($4,756.84) | All 3 controls including local Nemotron inference |
| Governance only (sandbox + policy) | Any Linux server — 4 vCPU, 8 GB RAM, no GPU required | Kernel sandbox + policy engine, cloud-only LLM routing |
| Cloud deployment | Cloud VM with GPU instance (AWS p4d, GCP A2, Azure NC) | Full stack in cloud — higher per-hour cost, faster to provision |

The hardware-agnostic design is a deliberate enterprise decision by NVIDIA. Your organization does not need to purchase NVIDIA GPUs to get the sandboxing and policy governance benefits. The privacy router’s local inference is the only component that benefits from GPU hardware.
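Before a pilot, a team might script the baseline above as a preflight check. The sketch below is one possible shape, not an NVIDIA tool: `preflight` and `parse_kernel` are hypothetical helpers, and the thresholds are simply the figures quoted in this post (Linux kernel 5.13 or later, 4 vCPU, 8 GB RAM).

```python
import re

MIN_KERNEL = (5, 13)   # Landlock requirement as stated in this post
MIN_VCPU = 4
MIN_RAM_GB = 8


def parse_kernel(release: str) -> tuple:
    """Extract (major, minor) from a release string such as '6.8.0-45-generic'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if not match:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return (int(match.group(1)), int(match.group(2)))


def preflight(system: str, release: str, vcpus: int, ram_gb: float) -> list:
    """Return a list of human-readable problems; empty means the baseline is met."""
    problems = []
    if system != "Linux":
        problems.append(f"{system} is not fully supported")
    elif parse_kernel(release) < MIN_KERNEL:
        problems.append(f"kernel {release} is older than 5.13")
    if vcpus < MIN_VCPU:
        problems.append(f"{vcpus} vCPU is below the minimum of {MIN_VCPU}")
    if ram_gb < MIN_RAM_GB:
        problems.append(f"{ram_gb} GB RAM is below the minimum of {MIN_RAM_GB}")
    return problems
```

On a real host, the first three arguments could come from `platform.system()`, `platform.release()`, and `os.cpu_count()`; how RAM is measured is left to the caller. A GPU check is deliberately omitted because the GPU is optional for the sandbox and policy engine.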

The Enterprise Adoption Signal

NemoClaw is not just a product release. It is a signal about where the enterprise AI agent market is heading.

When NVIDIA commits R&D resources to AI agent security, when CrowdStrike publishes a Secure-by-Design Blueprint, when Accenture, Wipro, and Infosys begin building agent templates — these are not speculative bets. These are infrastructure companies preparing for a market they believe is imminent.

The implication for enterprise leaders: the window between “AI agents are experimental” and “AI agents are in production across your industry” is closing. Organizations that establish governance frameworks now — while NemoClaw is still in early access — will be production-ready when the stack reaches general availability. Organizations that wait will face a 6-to-12-month catch-up timeline while competitors are already deploying.

This is not a technology adoption decision. It is a governance readiness decision. The technology will mature on NVIDIA’s timeline. Your organization’s ability to deploy it securely depends on how much groundwork you do before that happens.

48% of cybersecurity professionals rank agentic AI as their #1 attack vector (Dark Reading). 42,665 exposed OpenClaw instances are already in the wild, 93.4% with authentication bypass. 17 launch partners have committed to NemoClaw.

Meanwhile, Gartner projects 40% of enterprise applications will include AI agents by end of 2026. The gap between AI agent adoption and AI agent governance is widening — not closing.

Where to Start: A Practical Framework

If you are evaluating NemoClaw for your organization, here is a phased approach that accounts for the alpha-stage reality while building toward production readiness:

1. Audit your current OpenClaw exposure. How many instances are running in your organization? Who deployed them? What permissions do they have? What data are they accessing? Most enterprises we work with discover shadow AI agent deployments they did not know existed.

2. Map your requirements to the OWASP ASI Top 10. Not every organization needs all 3 NemoClaw controls on day one. If data residency is your primary concern, the privacy router is your priority. If excessive permissions keep your security team up at night, start with OpenShell’s sandbox.

3. Run a controlled pilot. Deploy NemoClaw on a single agent, single workflow, in a non-production environment. Validate the sandbox isolation. Test the policy engine against your actual use cases. Measure what works and what does not.

4. Build the governance layer. NemoClaw provides the technical controls. Your organization still needs compliance documentation, audit procedures, incident response plans, and multi-agent governance policies. This is the layer that turns open-source software into enterprise infrastructure.

5. Plan for GA. NemoClaw is alpha today. When it reaches general availability, your organization should be ready to move from pilot to production in weeks, not months. The groundwork you do now determines that timeline.

ManageMyClaw Enterprise provides NemoClaw implementation, security hardening, and managed care for organizations that need to move through these phases with expert support rather than figuring it out from documentation alone. Our assessment starts at $2,500 and delivers an architecture review, OWASP ASI gap analysis, and a prioritized remediation plan — with no commitment to implementation required.

Frequently Asked Questions

Is NemoClaw production-ready for enterprise deployment?

Not yet. NemoClaw is in alpha/early-access preview as of March 2026. NVIDIA’s own documentation warns to “expect rough edges.” The core security primitives — OpenShell sandbox, policy engine, privacy router — work today, but the stack has not reached general availability with enterprise SLAs from NVIDIA. The 17 launch partners (including Adobe, SAP, and CrowdStrike) signal strong enterprise commitment, but production deployment should start with a controlled pilot.

Does NemoClaw require NVIDIA hardware?

No. The sandbox and policy engine are hardware-agnostic and run on NVIDIA, AMD, Intel, or CPU-only configurations. The minimum requirements are 4 vCPU and 8 GB RAM on Linux. NVIDIA GPUs are only required if you want to run the privacy router’s local Nemotron models for on-premise inference. A Dell Pro Max GB10 (DGX Spark) starts at $4,756.84 for that use case.

How does NemoClaw differ from putting OpenClaw in a Docker container?

Docker provides container-level isolation using Linux namespaces and cgroups. OpenShell adds kernel-level enforcement with Landlock filesystem controls, seccomp-BPF system call filtering, and network namespaces — plus a 4-level policy engine and audit trail. The critical difference: the policy engine runs out of process, meaning a compromised agent cannot modify its own security policies. Docker alone does not provide that separation.

What OWASP risks does NemoClaw address?

NemoClaw provides full coverage for OWASP ASI-01 (Excessive Agency), ASI-03 (Insufficient Access Control), ASI-04 (Insecure Data Handling), and ASI-06 (Inadequate Sandboxing). It provides partial mitigation for ASI-02 (Prompt Injection), ASI-05 (Insecure Output Handling), and ASI-07 (Supply Chain). See our OWASP Agentic Top 10 guide for the complete framework.

Can our team deploy NemoClaw without outside help?

NemoClaw installs in one command. Configuring it for enterprise production — writing YAML policies for your specific workflows, configuring privacy router tables, integrating with your SIEM and CrowdStrike Falcon, building compliance documentation, and setting up multi-agent governance — typically takes 2 to 6 weeks of specialized work. ManageMyClaw’s assessment tier ($2,500) can give your team an architecture review and remediation plan before you decide whether to build in-house or engage implementation support.

What is the CrowdStrike Secure-by-Design Blueprint?

CrowdStrike published an integration blueprint that connects Falcon endpoint detection, identity protection, and AI-based threat detection (AIDR) directly into OpenShell’s sandbox. This means your existing CrowdStrike deployment can monitor AI agent behavior with the same telemetry your SOC already uses — no separate monitoring tool required.